🎯 SciVisAgentBench Evaluation Report

📊 Overall Performance

Overall Score: 45.1% (1095/2430 Points)

Test Cases: 31/48 (Completed Successfully)

Avg Vision Score: 38.7% (Visualization Quality: 634/1670)

PSNR (Scaled): 12.08 dB (Peak SNR, 12/31 valid)

SSIM (Scaled): 0.5741 (Structural Similarity)

LPIPS (Scaled): 0.4273 (Perceptual Distance)

Completion Rate: 64.6% (Tasks Completed)

ℹ️ About Scaled Metrics

Scaled metrics account for completion rate to enable fair comparison across different evaluation modes. Formulas:

PSNR_scaled = (completed_cases / total_cases) × avg(PSNR)

SSIM_scaled = (completed_cases / total_cases) × avg(SSIM)

LPIPS_scaled = 1.0 - (completed_cases / total_cases) × (1.0 - avg(LPIPS))

Cases with infinite PSNR (perfect match) are excluded from the PSNR calculation.
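The scaling described above can be sketched in a few lines of Python. This is only an illustration of the stated formulas, not the benchmark's own code: the function name `scaled_metrics`, the dictionary keys, and the choice to treat an empty list as an average of 0.0 are all assumptions.

```python
import math


def scaled_metrics(completed_cases, total_cases, psnr_values, ssim_values, lpips_values):
    """Scale average image metrics by the completion rate (illustrative sketch)."""
    rate = completed_cases / total_cases

    def avg(values):
        # Assumption: an empty list averages to 0.0 rather than raising.
        return sum(values) / len(values) if values else 0.0

    # Infinite PSNR means a perfect match; those cases are excluded before averaging.
    finite_psnr = [p for p in psnr_values if math.isfinite(p)]

    return {
        "psnr": rate * avg(finite_psnr),
        "ssim": rate * avg(ssim_values),
        # LPIPS is a distance (lower is better), so the scaling is applied to 1 - LPIPS.
        "lpips": 1.0 - rate * (1.0 - avg(lpips_values)),
    }


# Hypothetical inputs, not values taken from this report.
example = scaled_metrics(1, 2, [20.0, float("inf"), 10.0], [0.8, 1.0], [0.2, 0.0])
print(example)
```

Because LPIPS is scaled through its complement, a run that completes no cases gets the worst possible scaled LPIPS of 1.0, while PSNR and SSIM drop to 0.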

🔧 Configuration

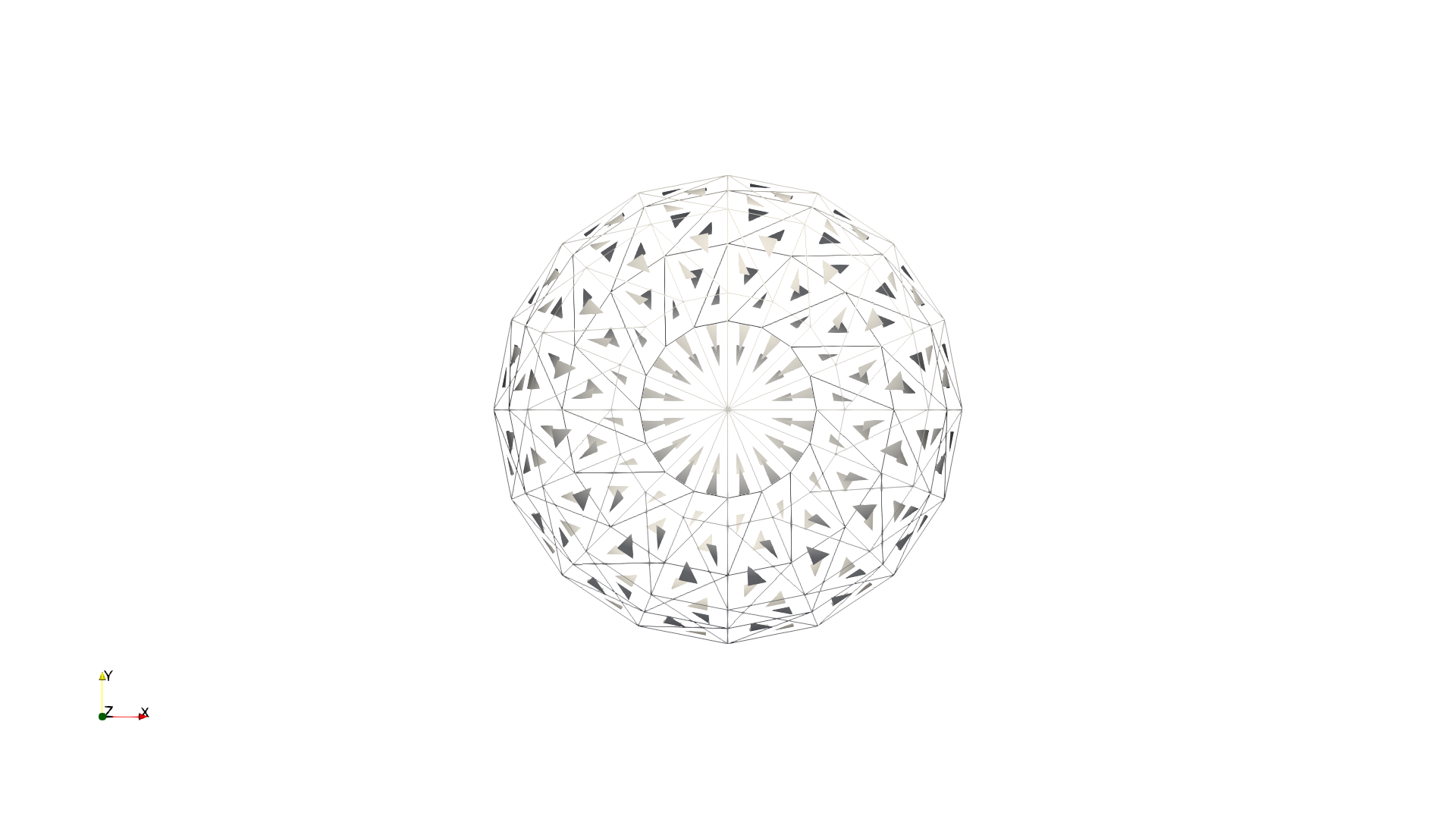

📝 ABC

44/45 (97.8%)

📋 Task Description

Your agent_mode is "chatvis_claude-sonnet-4-5_exp1"; use it when saving results. Your working directory is "D:\Code\SciVisAgentBench\SciVisAgentBench-tasks\paraview", and you should have access to it. The following prompts use paths relative to your working directory. However, when you load or save any file, always use the absolute path.

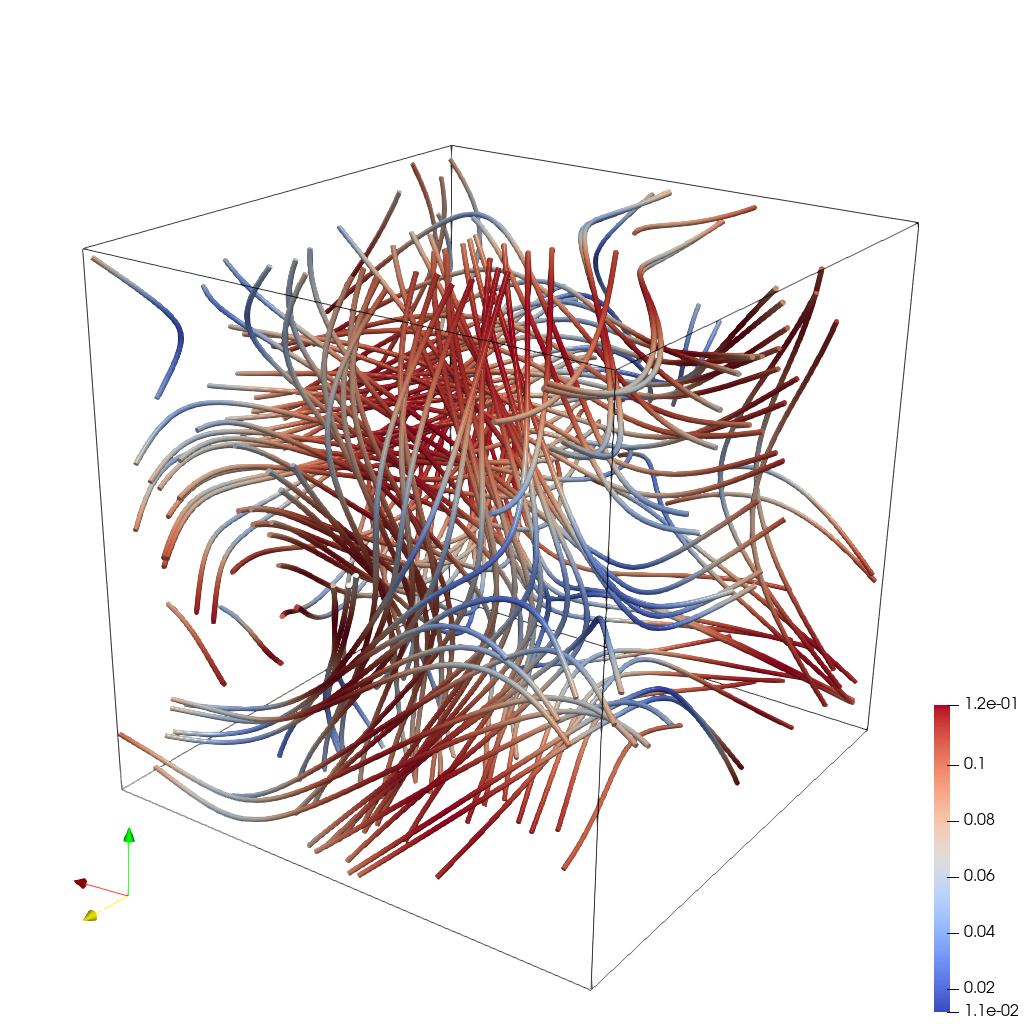

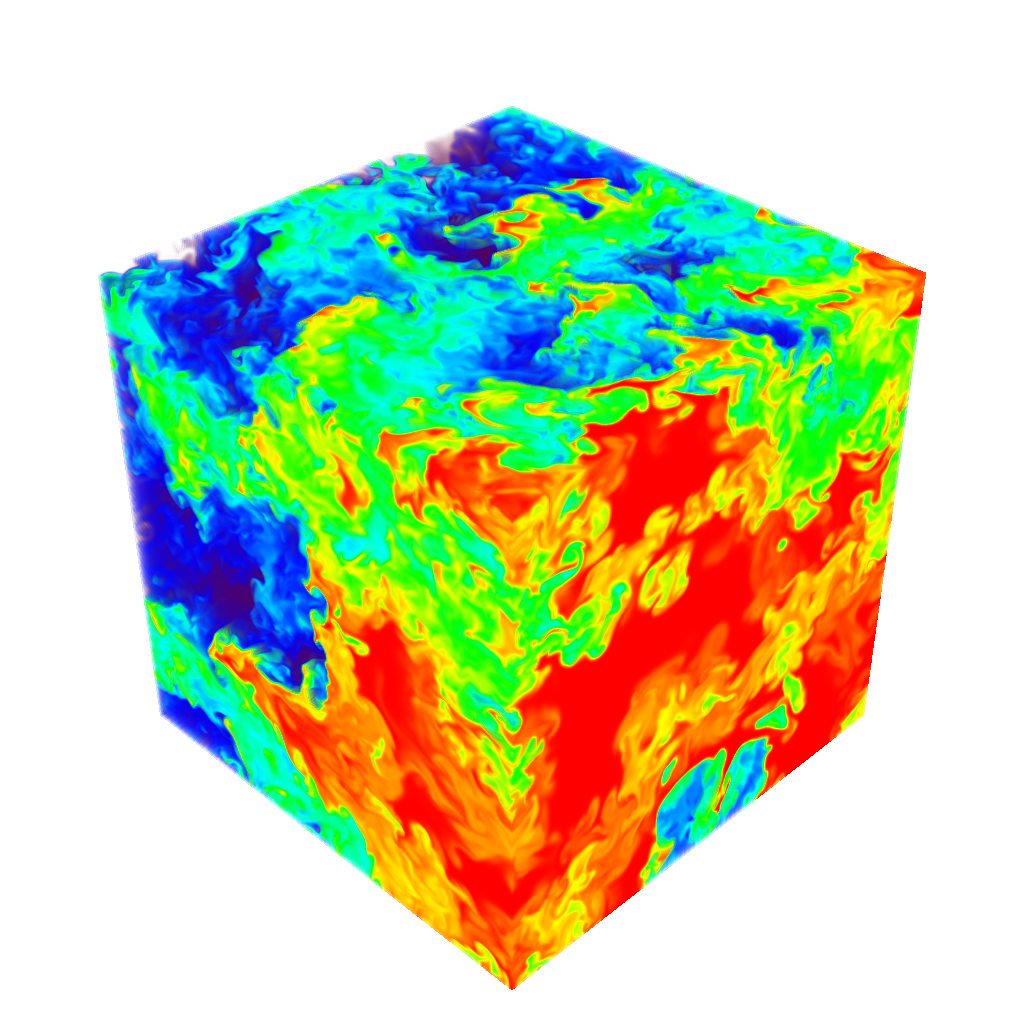

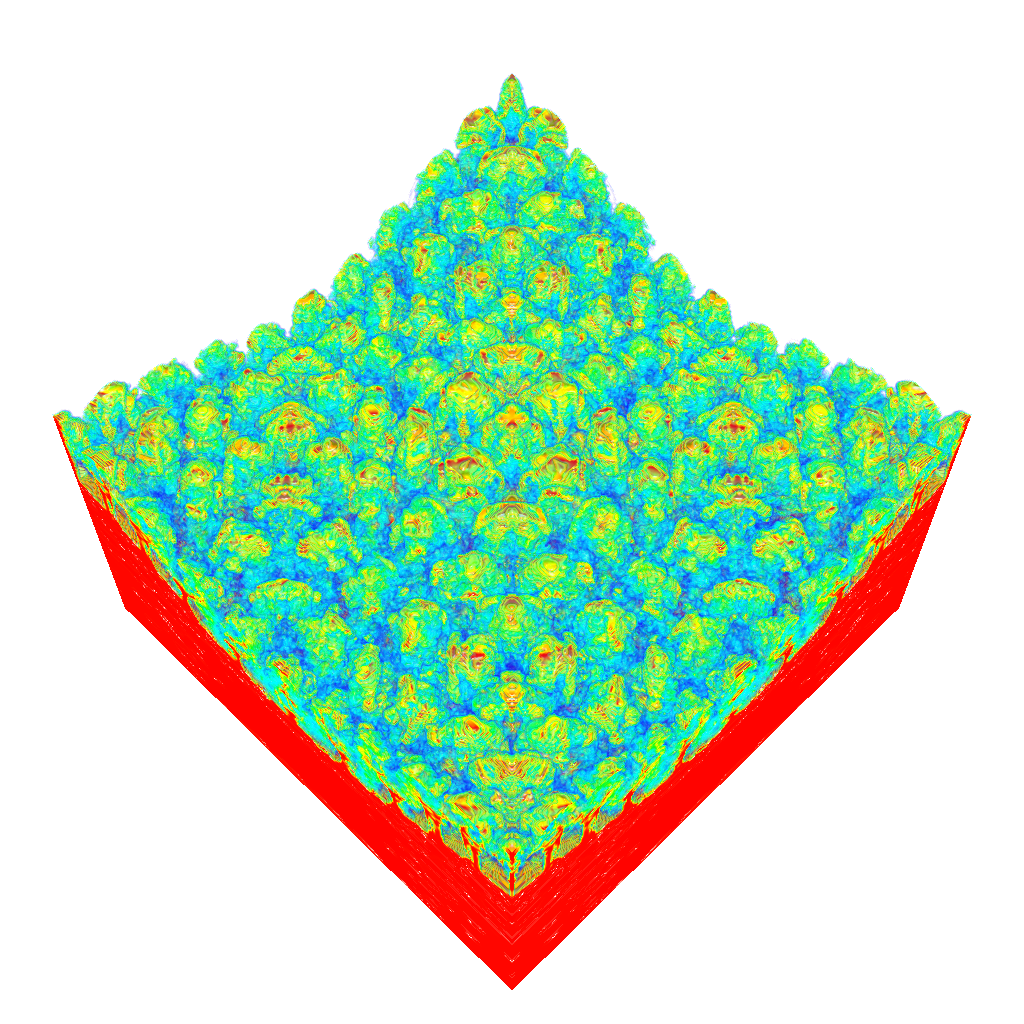

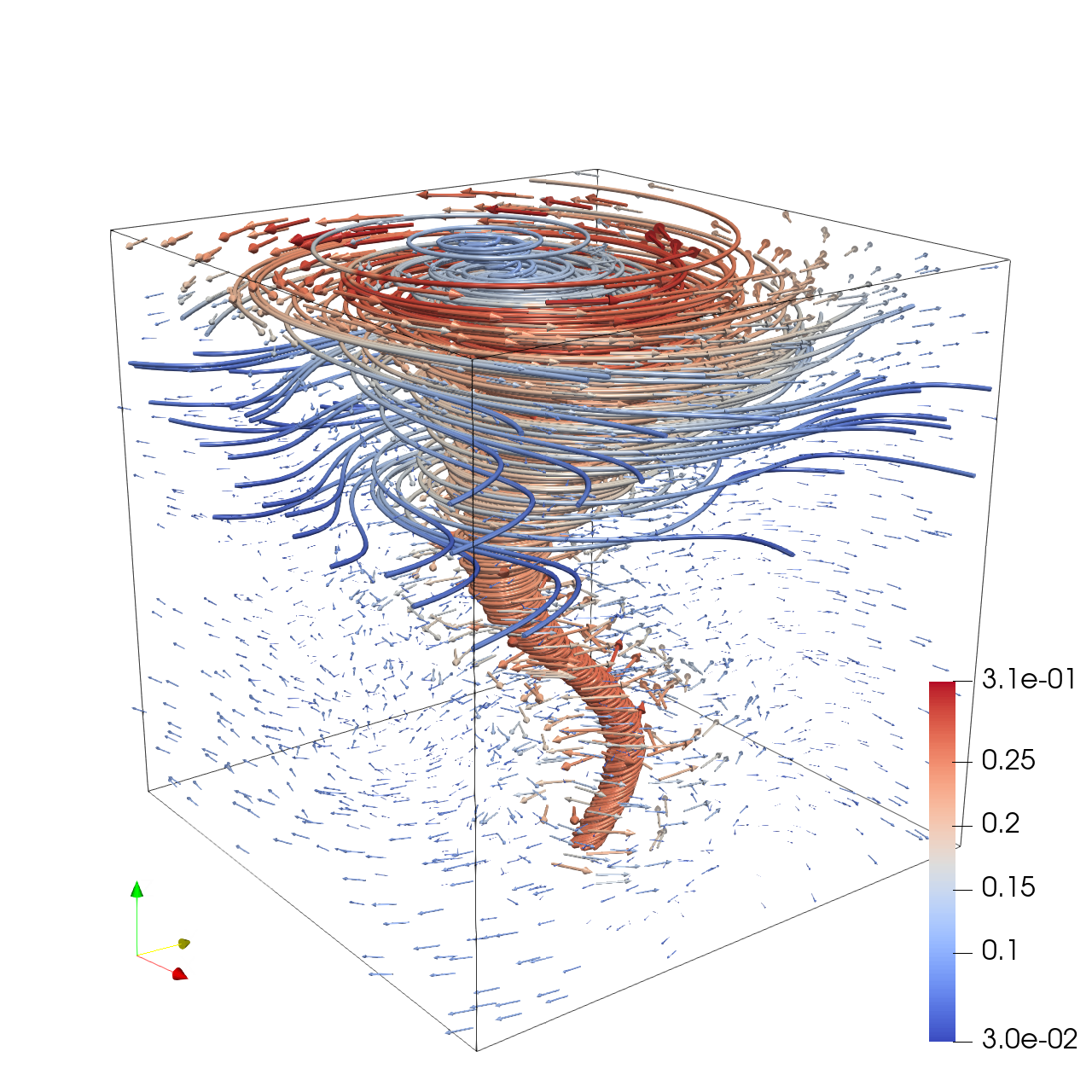

Load the ABC (Arnold-Beltrami-Childress) flow vector field from "ABC/data/ABC_128x128x128_float32_scalar3.raw", the information about this dataset:

ABC Flow (Vector)

Data Scalar Type: float

Data Byte Order: Little Endian

Data Extent: 128x128x128

Number of Scalar Components: 3

Data loading is very important, make sure you correctly load the dataset according to their features.
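One way to sanity-check a raw file before loading it is to compare its size on disk with the size implied by the listed extent, component count, and scalar type. The sketch below assumes the file is headerless and that "float" means 32-bit (as the `float32` in the file name suggests); `expected_raw_size` and the `BYTES_PER_SAMPLE` table are illustrative names, not part of the benchmark.

```python
import math

# Assumed sample sizes for the scalar types named in the task descriptions.
BYTES_PER_SAMPLE = {"float": 4, "unsigned char": 1, "unsigned short": 2}


def expected_raw_size(extent, components, scalar_type):
    """Return the byte count a headerless raw volume should occupy."""
    return math.prod(extent) * components * BYTES_PER_SAMPLE[scalar_type]


# ABC flow: 128x128x128 grid, 3 components, 32-bit float.
abc_bytes = expected_raw_size((128, 128, 128), 3, "float")
print(abc_bytes)  # 25165824
```

If `os.path.getsize(path)` disagrees with this number, the extent, component count, or scalar type passed to the reader is likely wrong.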

Create streamlines using a "Stream Tracer" filter with "Point Cloud" seed type. Set the seed center to [73.77, 63.25, 71.65], with 150 seed points and a radius of 75.0. Set integration direction to "BOTH" and maximum streamline length to 150.0.

Add a "Tube" filter on the stream tracer to enhance visualization. Set tube radius to 0.57 with 12 sides.

Color the tubes by Vorticity magnitude using the 'Cool to Warm (Diverging)' colormap.

Show the dataset bounding box as an outline.

Use a white background. Render at 1024x1024.

Set the viewpoint parameters as: [-150.99, 391.75, 219.64] to position; [32.38, 120.41, 81.63] to focal point; [0.23, -0.31, 0.92] to camera up direction.
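The three viewpoint vectors determine the view direction and camera distance. As a rough illustration, the sketch below derives both and reports how far the up vector is from being perpendicular to the view direction; `camera_summary` is a hypothetical helper, not a ParaView call.

```python
import math


def camera_summary(position, focal_point, view_up):
    """Return (distance, unit view direction, cosine between direction and up)."""
    direction = [f - p for p, f in zip(position, focal_point)]
    distance = math.sqrt(sum(d * d for d in direction))
    unit_dir = [d / distance for d in direction]
    up_len = math.sqrt(sum(u * u for u in view_up))
    # A cosine near 0 means the up vector is close to perpendicular to the view direction;
    # a cosine near +/-1 means it is nearly parallel and the view is ill-defined.
    cos_angle = sum(d * u for d, u in zip(unit_dir, view_up)) / up_len
    return distance, unit_dir, cos_angle


distance, unit_dir, cos_angle = camera_summary(
    [-150.99, 391.75, 219.64], [32.38, 120.41, 81.63], [0.23, -0.31, 0.92]
)
print(distance, unit_dir, cos_angle)
```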

Save the visualization image as "ABC/results/{agent_mode}/ABC.png".

(Optional, but must save if use paraview) Save the paraview state as "ABC/results/{agent_mode}/ABC.pvsm".

(Optional, but must save if use python script) Save the python script as "ABC/results/{agent_mode}/ABC.py".

Do not save any other files, and always save the visualization image.

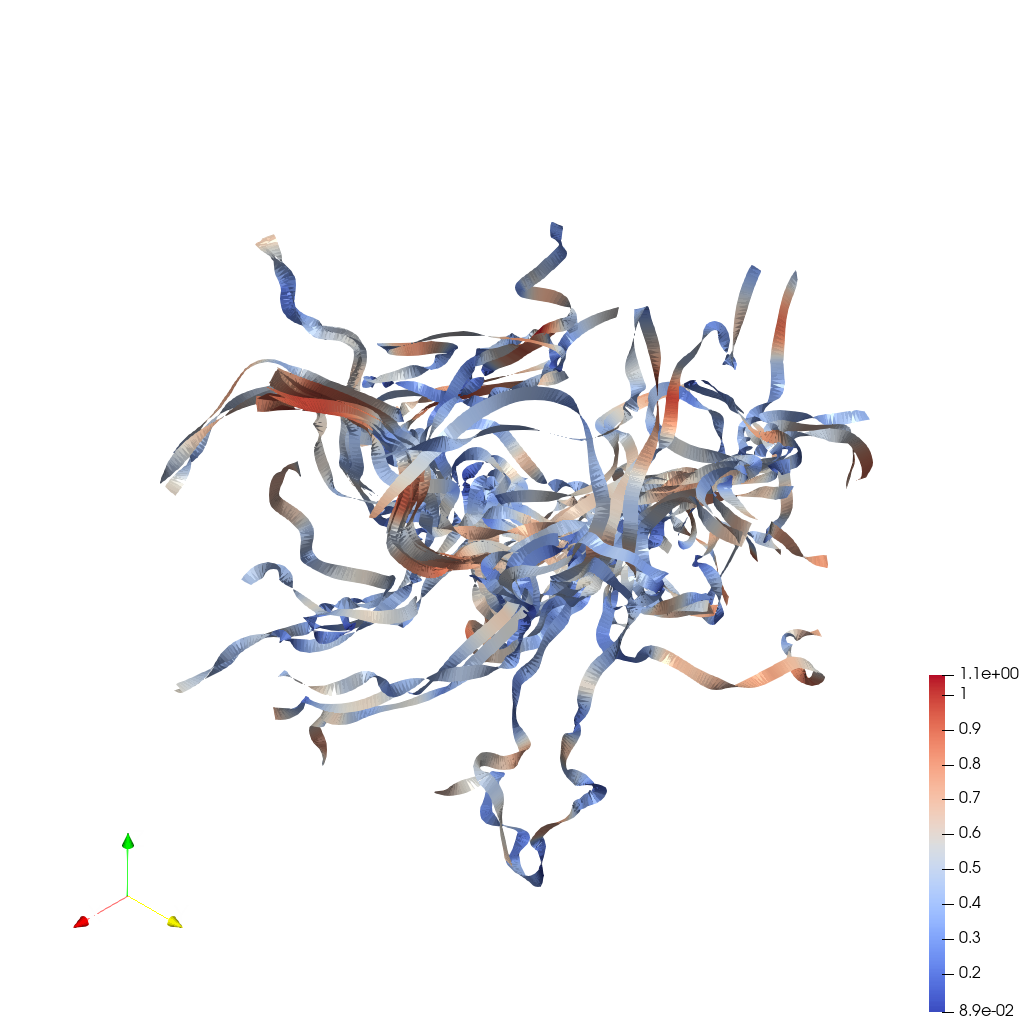

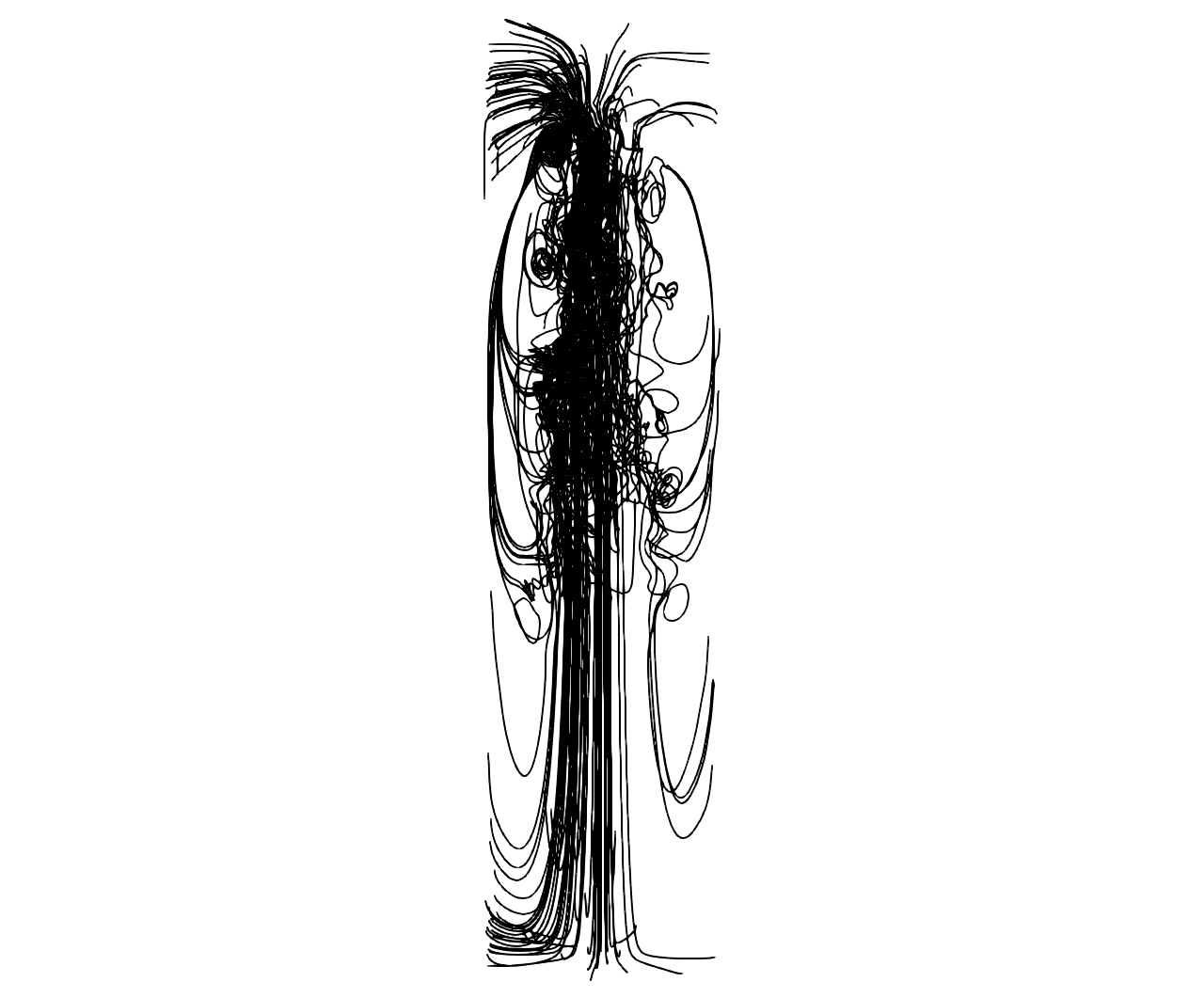

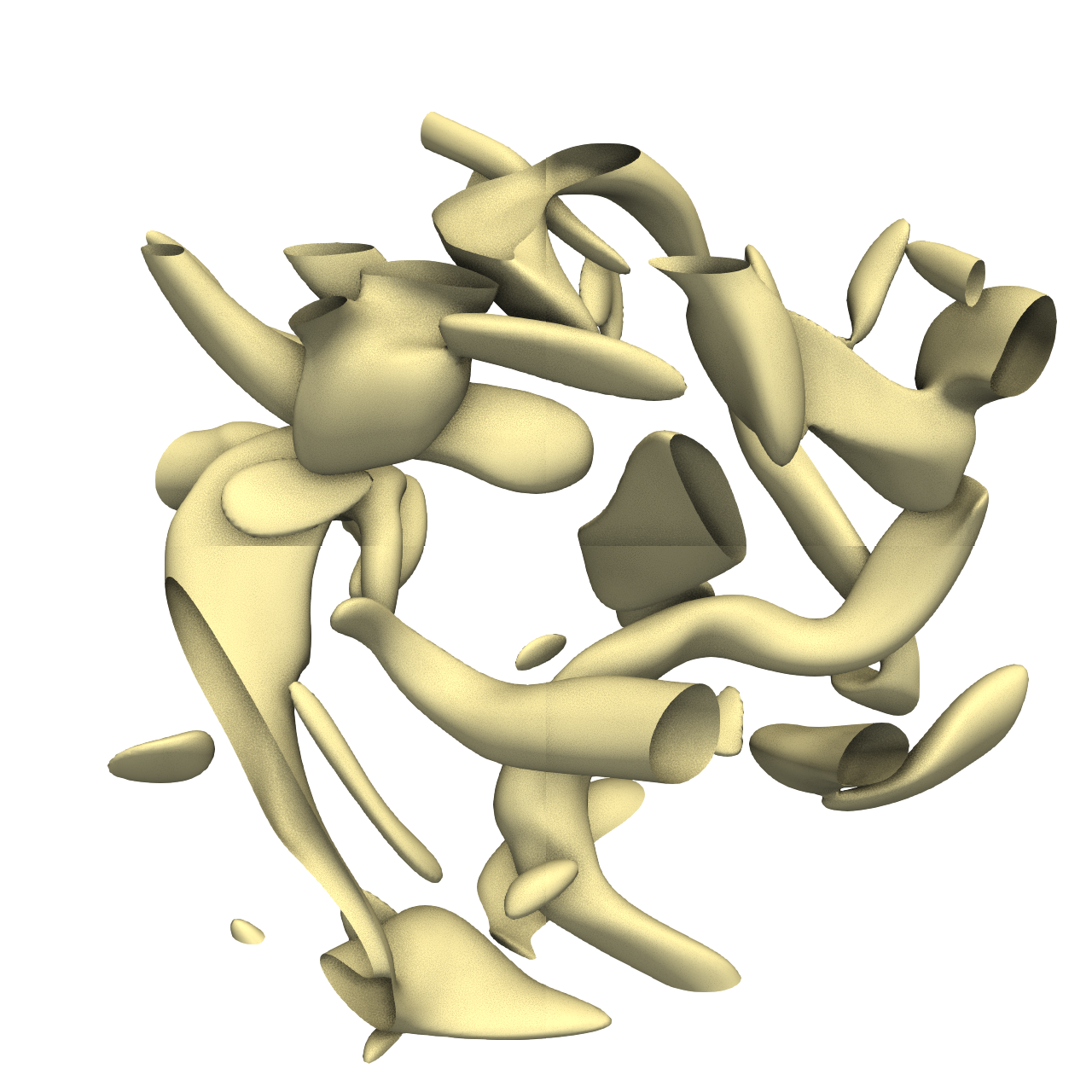

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 29/30

Output Generation: 5/5

Efficiency: 10/10, completed in 33.80 seconds (excellent)

PSNR: 14.51 dB

SSIM: 0.8044

LPIPS: 0.1430

Input Tokens: 1,935

Output Tokens: 2,612

Total Tokens: 4,547

Total Cost: $0.0450
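The report does not state how cost is derived from token counts. As a sketch only, the per-million-token rates below ($3 input, $15 output) are an assumption; they happen to reproduce the ABC figure, but the evaluator's actual pricing table is not shown here.

```python
def estimate_cost(input_tokens, output_tokens, usd_per_m_input=3.0, usd_per_m_output=15.0):
    """Estimate USD cost from token counts under assumed per-million-token rates."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


# ABC task: 1,935 input tokens and 2,612 output tokens.
print(f"${estimate_cost(1935, 2612):.4f}")  # $0.0450
```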

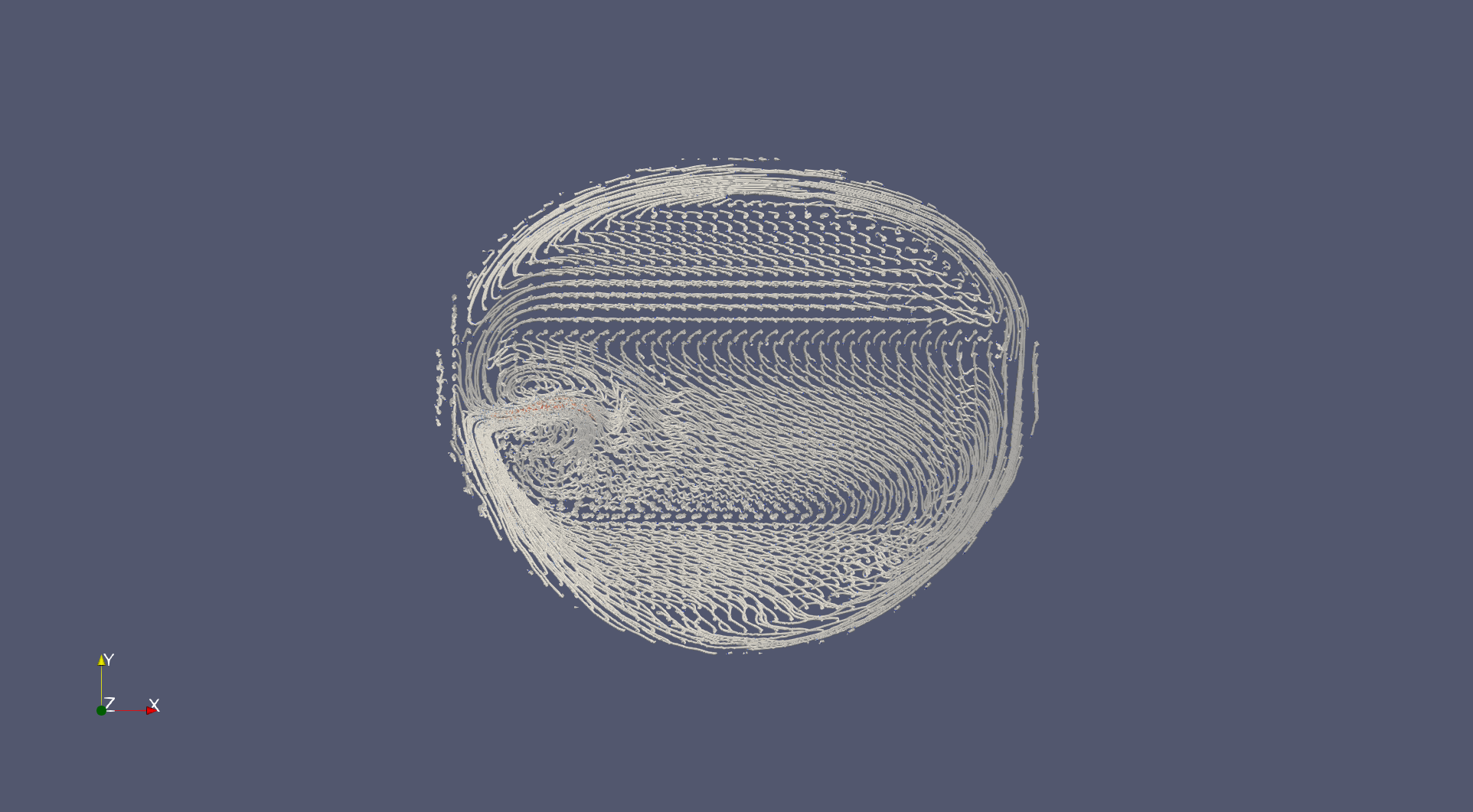

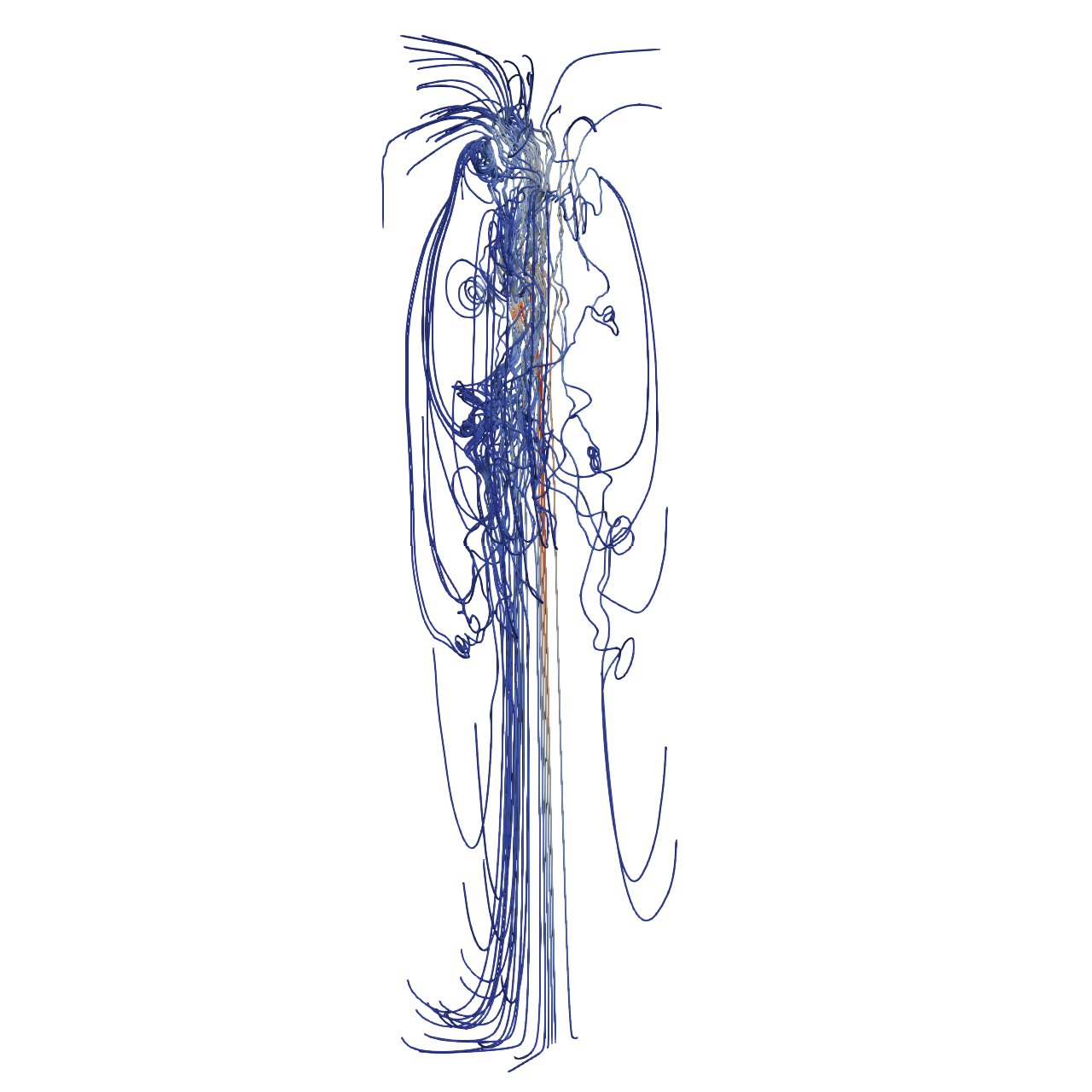

📝 Bernard

41/45 (91.1%)

📋 Task Description

Your agent_mode is "chatvis_claude-sonnet-4-5_exp1"; use it when saving results. Your working directory is "D:\Code\SciVisAgentBench\SciVisAgentBench-tasks\paraview", and you should have access to it. The following prompts use paths relative to your working directory. However, when you load or save any file, always use the absolute path.

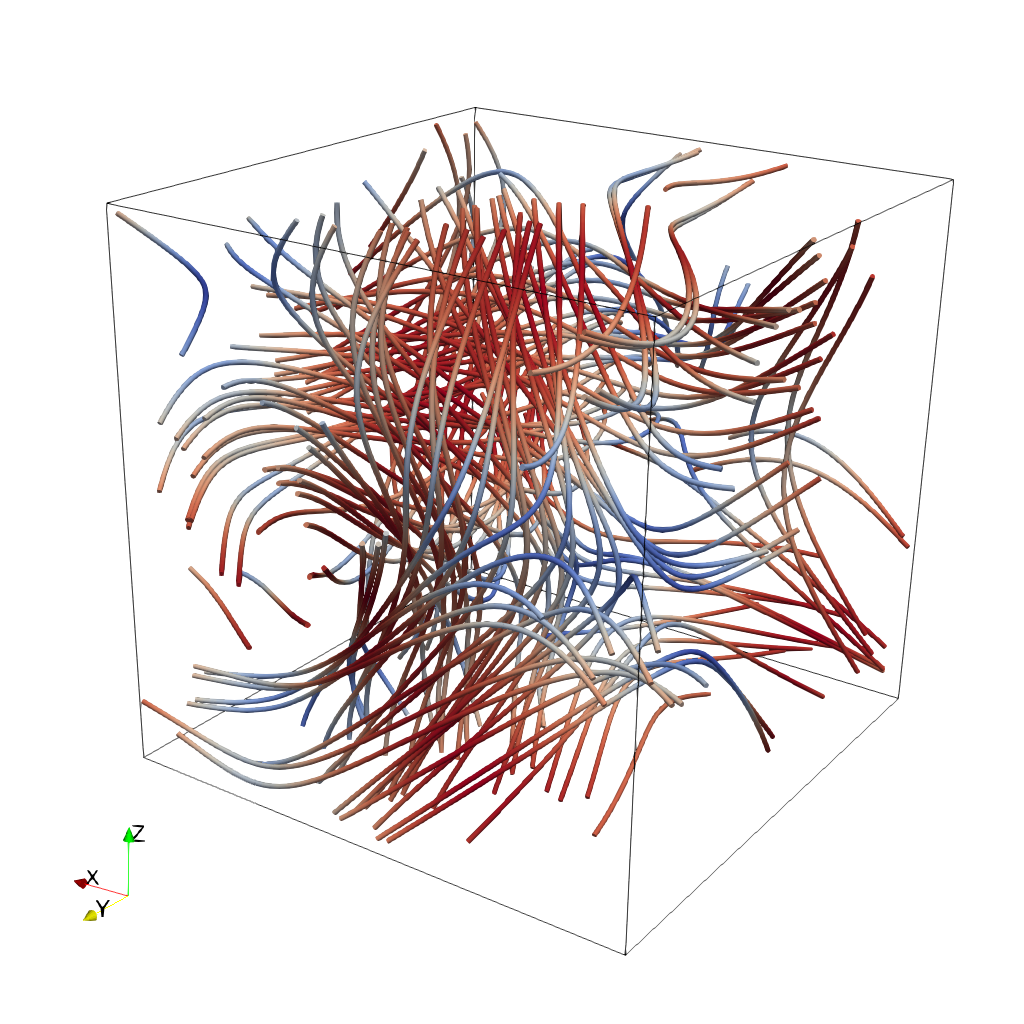

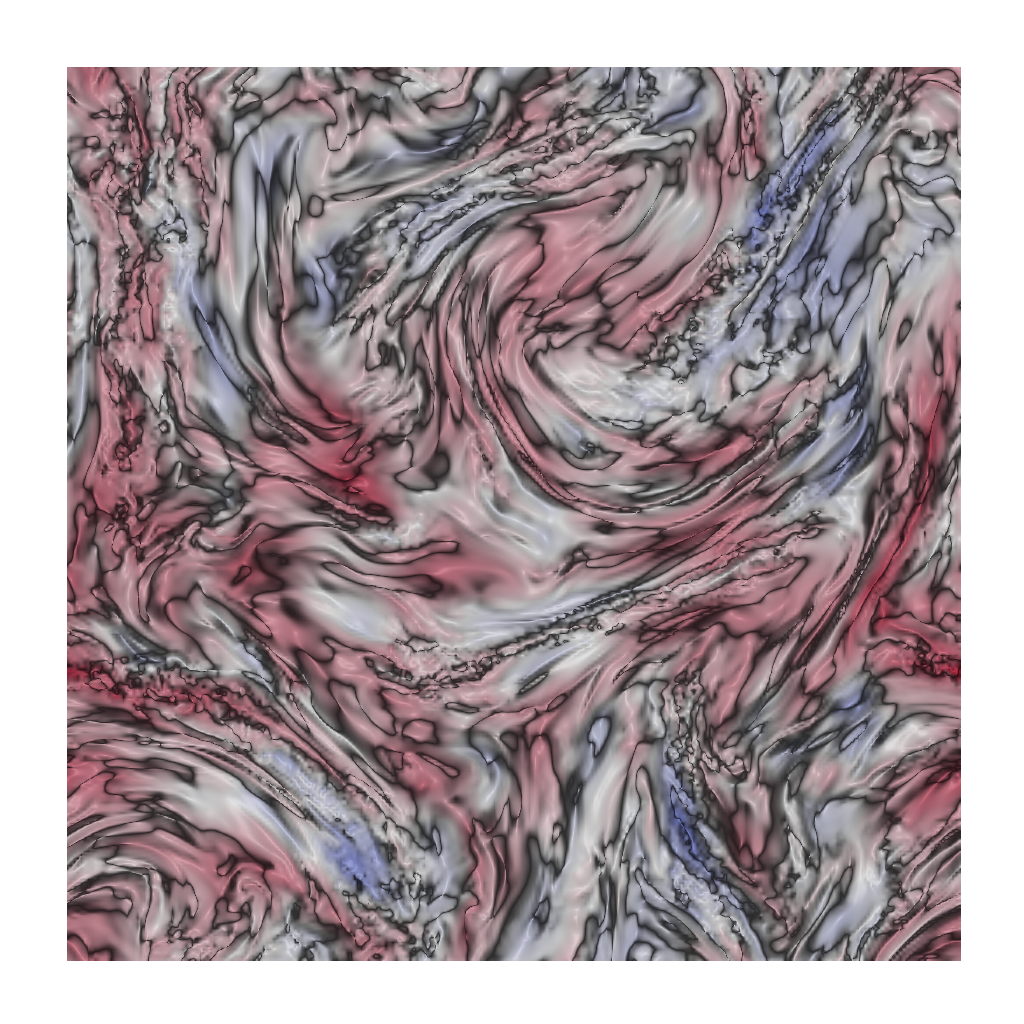

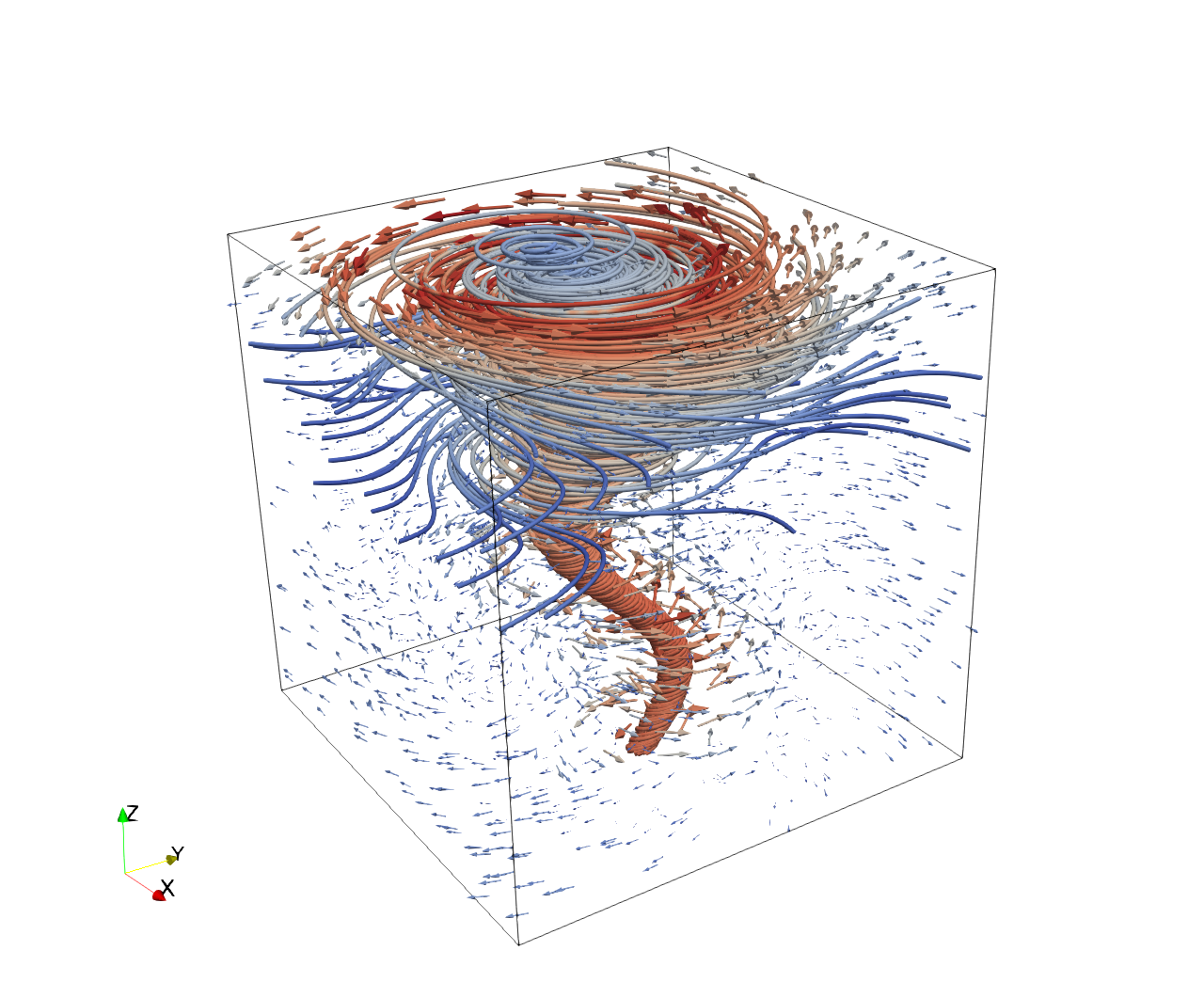

Load the Rayleigh-Benard convection vector field from "Bernard/data/Bernard_128x32x64_float32_scalar3.raw", the information about this dataset:

Rayleigh-Benard Convection (Vector)

Data Scalar Type: float

Data Byte Order: Little Endian

Data Extent: 128x32x64

Number of Scalar Components: 3

Data loading is very important, make sure you correctly load the dataset according to their features.

Create four streamline sets using "Stream Tracer" filters with "Point Cloud" seed type, each with 100 seed points and radius 12.7:

- Streamline 1: Seed center at [30.69, 14.61, 47.99]. Apply a "Tube" filter (radius 0.3, 12 sides). Color with solid blue (RGB: 0.0, 0.67, 1.0).

- Streamline 2: Seed center at [91.10, 14.65, 45.70]. Apply a "Tube" filter (radius 0.3, 12 sides). Color with solid orange (RGB: 1.0, 0.33, 0.0).

- Streamline 3: Seed center at [31.87, 12.76, 15.89]. Apply a "Tube" filter (radius 0.3, 12 sides). Color by velocity magnitude using the 'Cool to Warm (Diverging)' colormap.

- Streamline 4: Seed center at [92.09, 10.50, 15.32]. Apply a "Tube" filter (radius 0.3, 12 sides). Color with solid green (RGB: 0.33, 0.67, 0.0).

In the pipeline browser panel, hide all stream tracers and only show the tube filters.

Use a gray-blue background (RGB: 0.329, 0.349, 0.427). Render at 1280x1280. Do not show a color bar.

Set the viewpoint parameters as: [-81.99, -141.45, 89.86] to position; [65.58, 26.29, 28.48] to focal point; [0.18, 0.20, 0.96] to camera up direction.

Save the visualization image as "Bernard/results/{agent_mode}/Bernard.png".

(Optional, but must save if use paraview) Save the paraview state as "Bernard/results/{agent_mode}/Bernard.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "Bernard/results/{agent_mode}/Bernard.py".

Do not save any other files, and always save the visualization image.

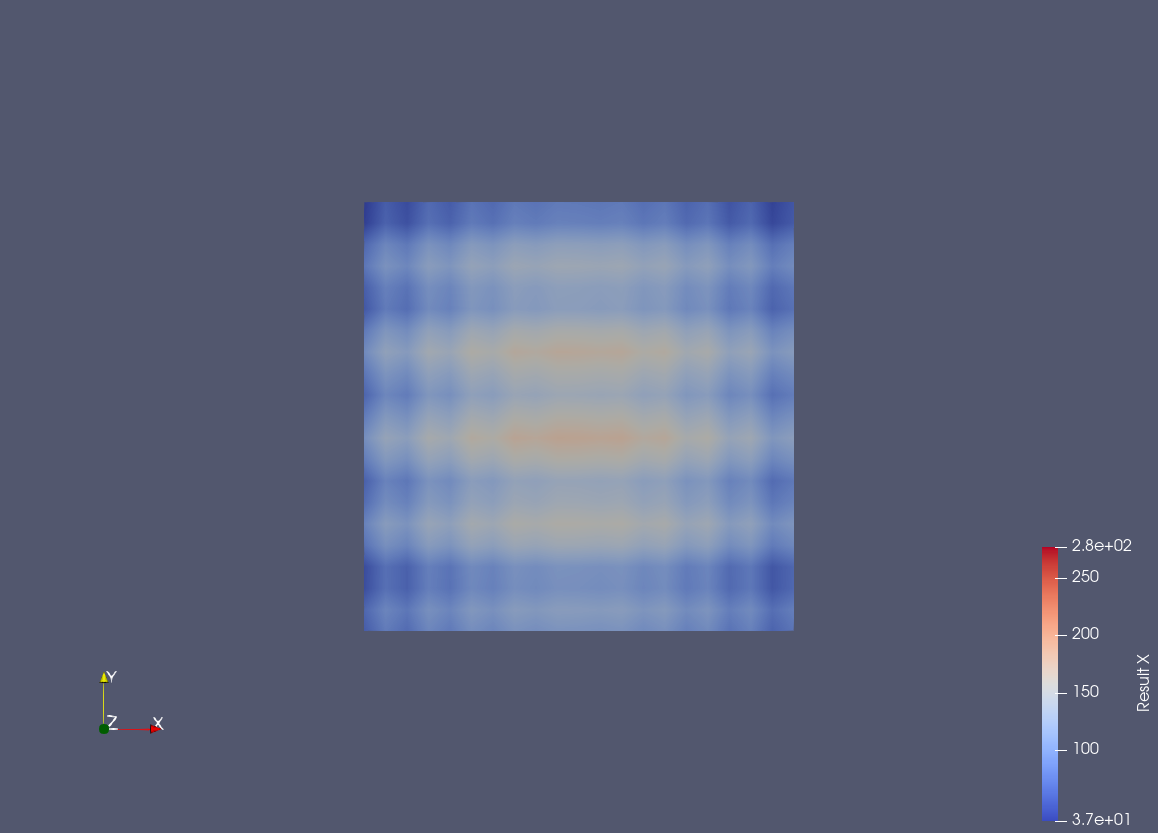

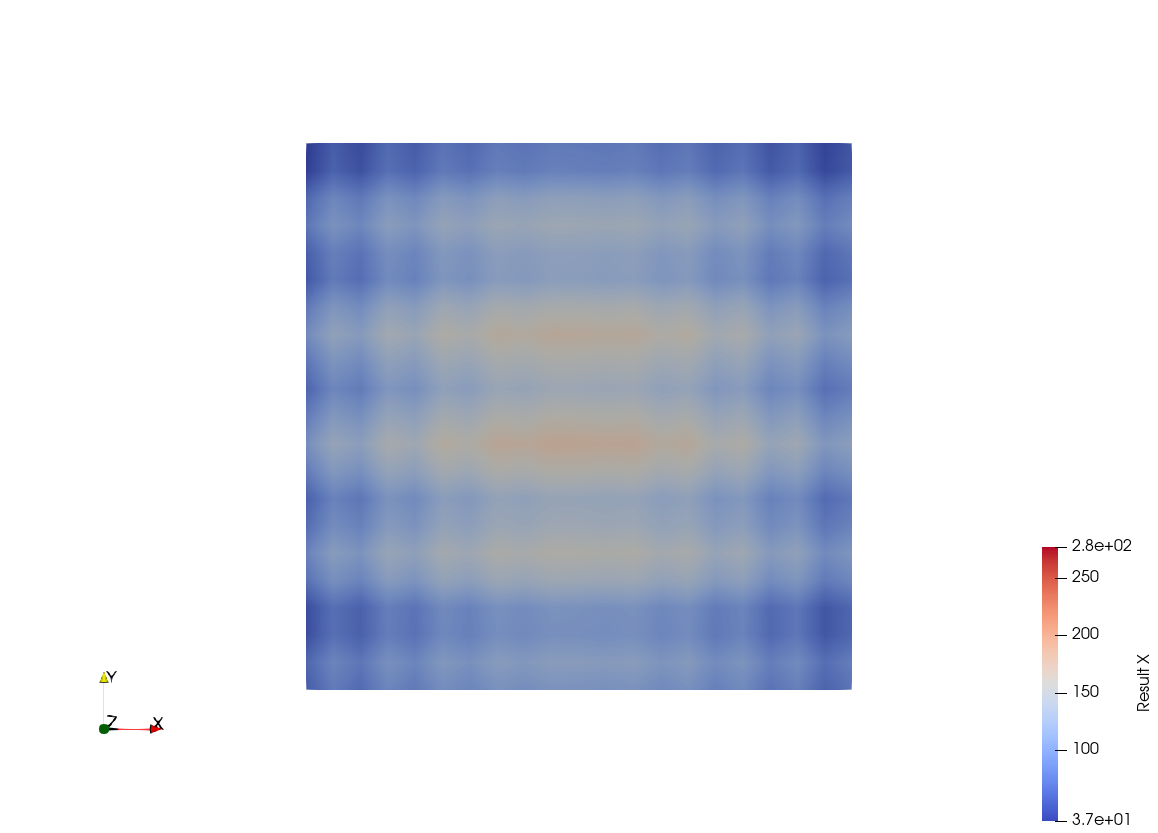

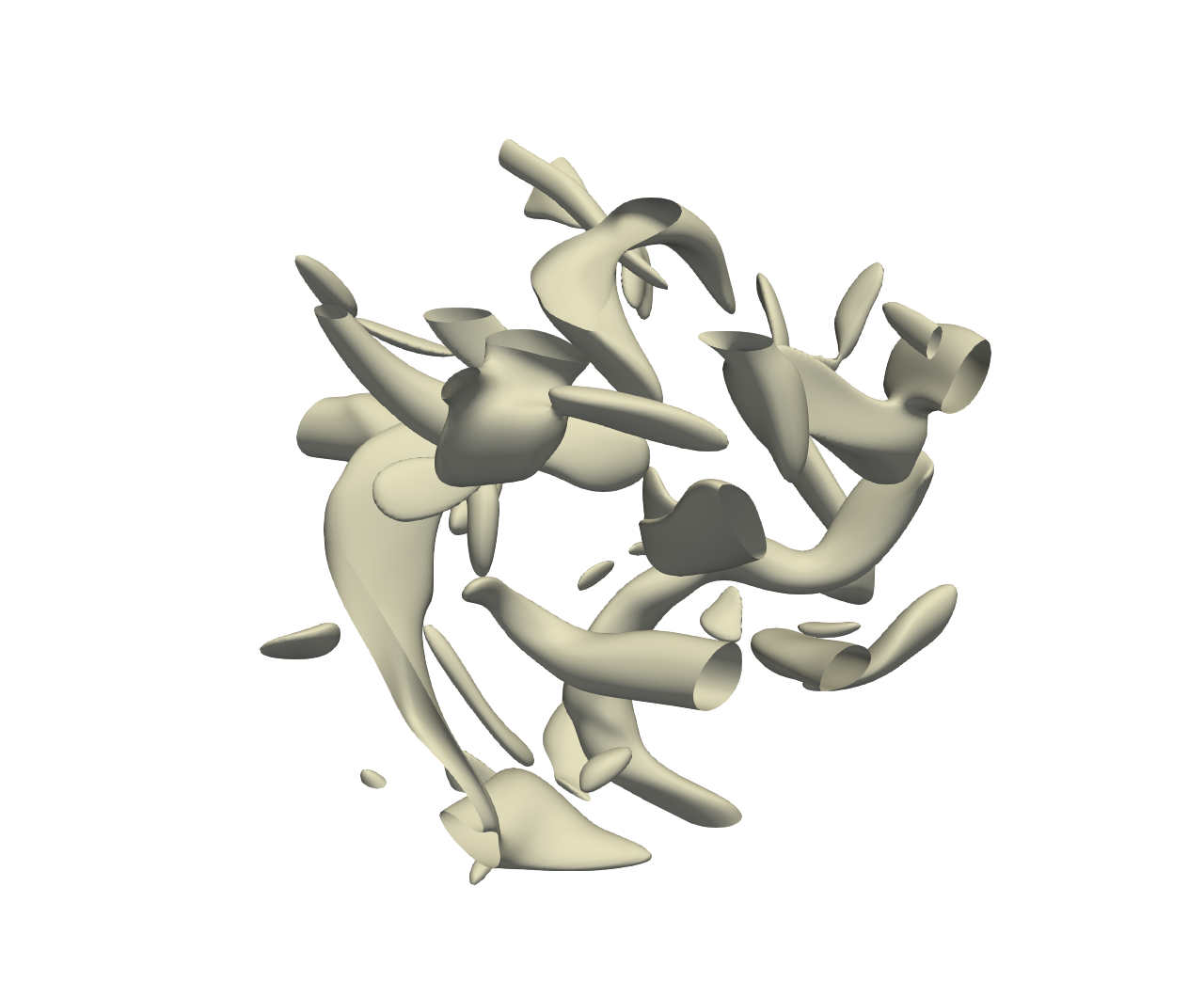

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 26/30

Output Generation: 5/5

Efficiency: 10/10, completed in 44.77 seconds (excellent)

PSNR: 18.81 dB

SSIM: 0.8847

LPIPS: 0.0652

Input Tokens: 2,828

Output Tokens: 3,966

Total Tokens: 6,794

Total Cost: $0.0680

📝 argon-bubble

❌ FAILED 0/45 (0.0%)

📋 Task Description

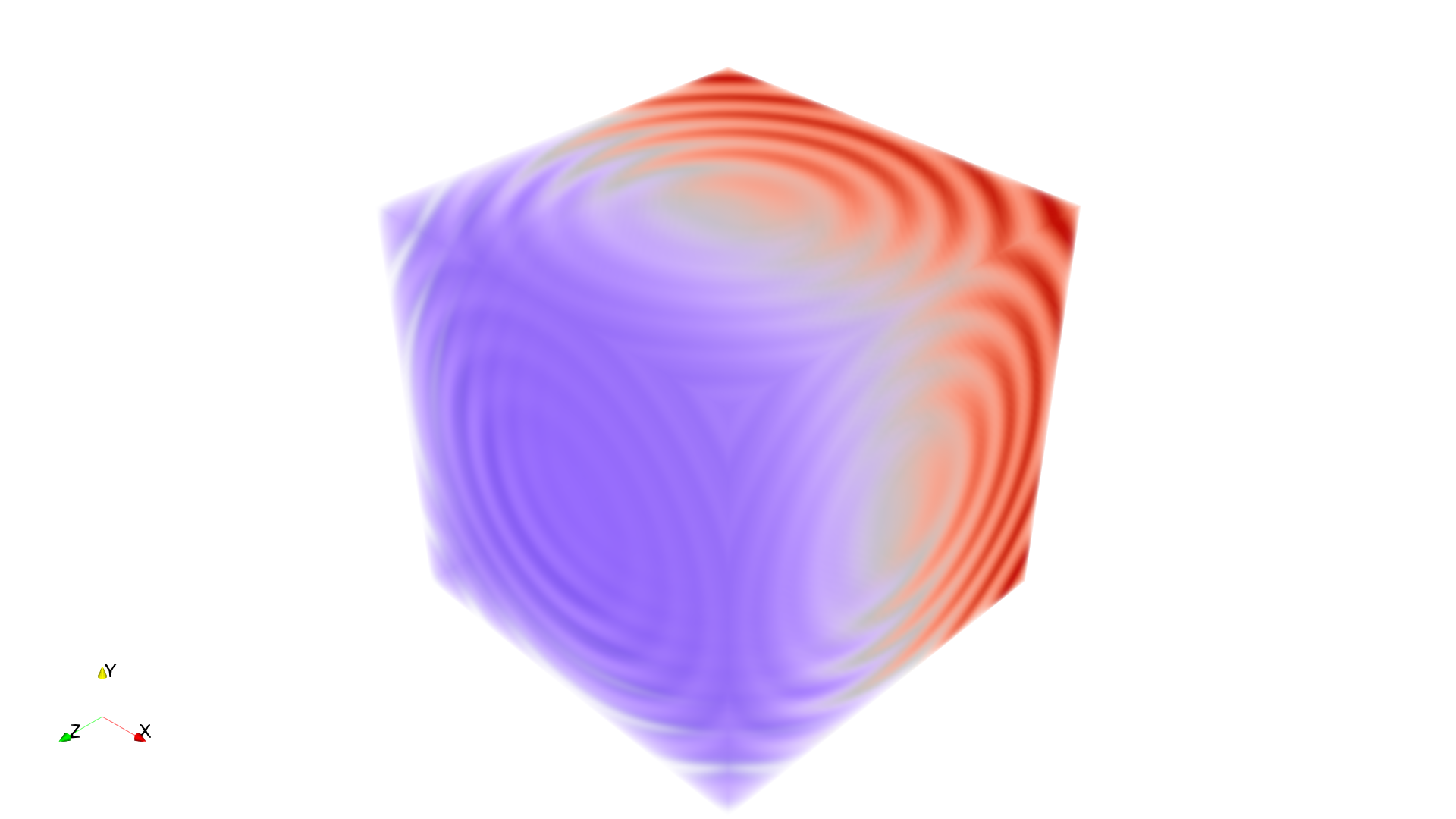

Task:

Load the Argon Bubble dataset from "argon-bubble/data/argon-bubble_128x128x256_float32.vtk".

Generate a visualization image of the Argon Bubble scalar field dataset with the following visualization settings:

1) Create volume rendering

2) Set the opacity transfer function as a ramp function across values of the volumetric data, assigning opacity 0 to value 0 and assigning opacity 1 to value 1.

3) Set the color transfer function to assign a warm red color [0.71, 0.02, 0.15] to the highest value, a cool color [0.23, 0.29, 0.75] to the lowest value, and a grey color [0.87, 0.87, 0.87] to the midrange value

4) Set the viewpoint parameters as: [0, 450, 0] to position; [0, 0, -15] to focal point; [0, 0, -1] to camera up direction

5) Visualization image resolution is 1024x1024. White background. Shade turned off. Volume rendering ray casting sample distance is 0.1

6) Don't show color/scalar bar or coordinate axes.
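The opacity ramp in setting 2 maps scalar value 0 to opacity 0 and value 1 to opacity 1. A minimal sketch of that mapping follows; `ramp_opacity` is an illustrative name, and clamping values outside [0, 1] is an assumption about how out-of-range data should behave.

```python
def ramp_opacity(value, v_min=0.0, v_max=1.0):
    """Linear opacity ramp: v_min maps to 0, v_max maps to 1, clamped outside the range."""
    t = (value - v_min) / (v_max - v_min)
    return min(1.0, max(0.0, t))


for v in (-0.5, 0.0, 0.25, 1.0, 2.0):
    print(v, ramp_opacity(v))
```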

Save the visualization image as "argon-bubble/results/{agent_mode}/argon-bubble.png".

(Optional, but must save if use paraview) Save the paraview state as "argon-bubble/results/{agent_mode}/argon-bubble.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "argon-bubble/results/{agent_mode}/argon-bubble.py".

(Optional, but must save if use VTK) Save the cxx code script as "argon-bubble/results/{agent_mode}/argon-bubble.cxx"

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 0/30

Output Generation: 5/5

Efficiency: 10/10, completed in 37.92 seconds (excellent)

Input Tokens: 2,777

Output Tokens: 3,038

Total Tokens: 5,815

Total Cost: $0.0539

📝 bonsai

❌ FAILED 0/55 (0.0%)

📋 Task Description

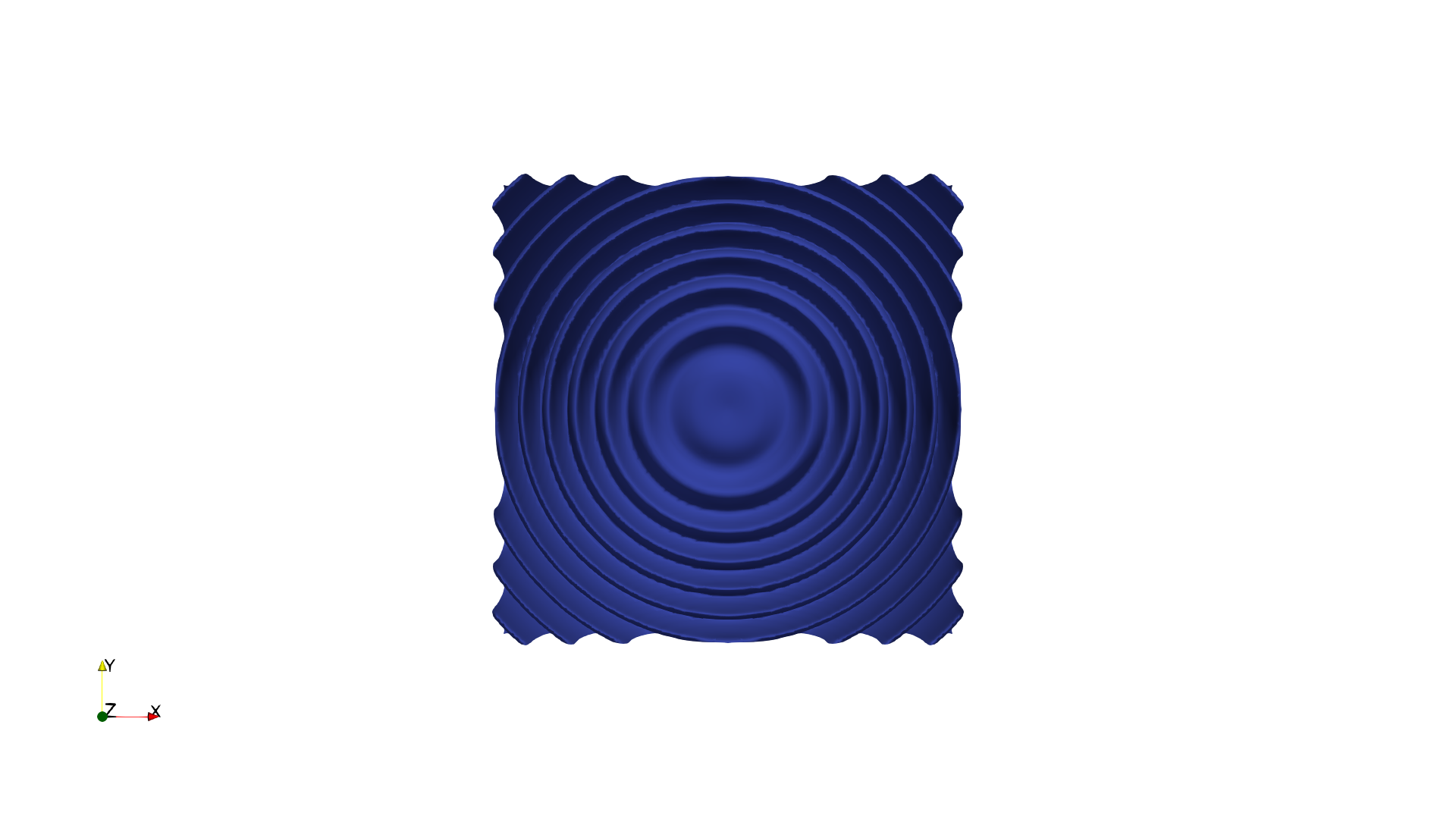

Task:

Load the bonsai dataset from "bonsai/data/bonsai_256x256x256_uint8.raw", the information about this dataset:

Bonsai (Scalar)

Data Scalar Type: unsigned char

Data Byte Order: little Endian

Data Spacing: 1x1x1

Data Extent: 256x256x256

Then visualize it with volume rendering, modify the transfer function and reach the visualization goal as: "A potted tree with brown pot silver branch and golden leaves."

Please think step by step and make sure to fulfill all the visualization goals mentioned above.

Use a white background. Render at 1280x1280. Do not show a color bar or coordinate axes.

Set the viewpoint parameters as: [-765.09, 413.55, 487.84] to position; [-22.76, 153.30, 157.32] to focal point; [0.30, 0.95, -0.07] to camera up direction.

Save the visualization image as "bonsai/results/{agent_mode}/bonsai.png".

(Optional, but must save if use paraview) Save the paraview state as "bonsai/results/{agent_mode}/bonsai.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "bonsai/results/{agent_mode}/bonsai.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 2/40

Output Generation: 5/5

Efficiency: 10/10, completed in 46.02 seconds (excellent)

PSNR: 15.82 dB

SSIM: 0.9191

LPIPS: 0.1924

Input Tokens: 3,042

Output Tokens: 3,763

Total Tokens: 6,805

Total Cost: $0.0656

📝 carp

⚠️ LOW SCORE 31/65 (47.7%)

📋 Task Description

Task:

Load the carp dataset from "carp/data/carp_256x256x512_uint16.raw", the information about this dataset:

Carp (Scalar)

Data Scalar Type: unsigned short

Data Byte Order: little Endian

Data Spacing: 0.78125x0.390625x1

Data Extent: 256x256x512

Instructions:

1. Load the dataset into ParaView.

2. Apply volume rendering to visualize the carp skeleton.

3. Adjust the transfer function to highlight only the bony structures with the original bone color.

4. Optimize the viewpoint to display the full skeleton, ensuring the head, spine, and fins are all clearly visible in a single frame.

5. Analyze the visualization and answer the following questions:

Q1: Which of the following options correctly describes the fins visible in the carp skeleton visualization?

A. 5 fins: 1 dorsal, 2 pectoral, 2 pelvic

B. 6 fins: 1 dorsal, 2 pectoral, 2 pelvic, 1 caudal

C. 7 fins: 1 dorsal, 2 pectoral, 2 pelvic, 1 anal, 1 caudal

D. 8 fins: 2 dorsal, 2 pectoral, 2 pelvic, 1 anal, 1 caudal

Q2: Based on the visualization, what is the approximate ratio of skull length to total body length?

A. ~15%

B. ~22%

C. ~30%

D. ~40%

6. Use a white background. Find an optimal view. Render at 1280x1280. Do not show a color bar or coordinate axes.

7. Set the viewpoint parameters as: [265.81, 1024.69, 131.23] to position; [141.24, 216.61, 243.16] to focal point; [0.99, -0.14, 0.07] to camera up direction.

8. Save your work:

Save the visualization image as "carp/results/{agent_mode}/carp.png".

Save the answers to the analysis questions in plain text as "carp/results/{agent_mode}/answers.txt".

(Optional, but must save if use paraview) Save the paraview state as "carp/results/{agent_mode}/carp.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "carp/results/{agent_mode}/carp.py".

(Optional, but must save if use VTK) Save the cxx code script as "carp/results/{agent_mode}/carp.cxx"

Do not save any other files, and always save the visualization image and the text file.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📝 Text-Based Q&A Evaluation

📊 Detailed Metrics

Visualization Quality: 7/30

Output Generation: 5/5

Efficiency: 9/10, completed in 69.68 seconds (very good)

PSNR: 30.02 dB

SSIM: 0.9796

LPIPS: 0.0431

Text Q&A Score: 10/20 (50.0%)

Input Tokens: 6,052

Output Tokens: 6,172

Total Tokens: 12,224

Total Cost: $0.1107

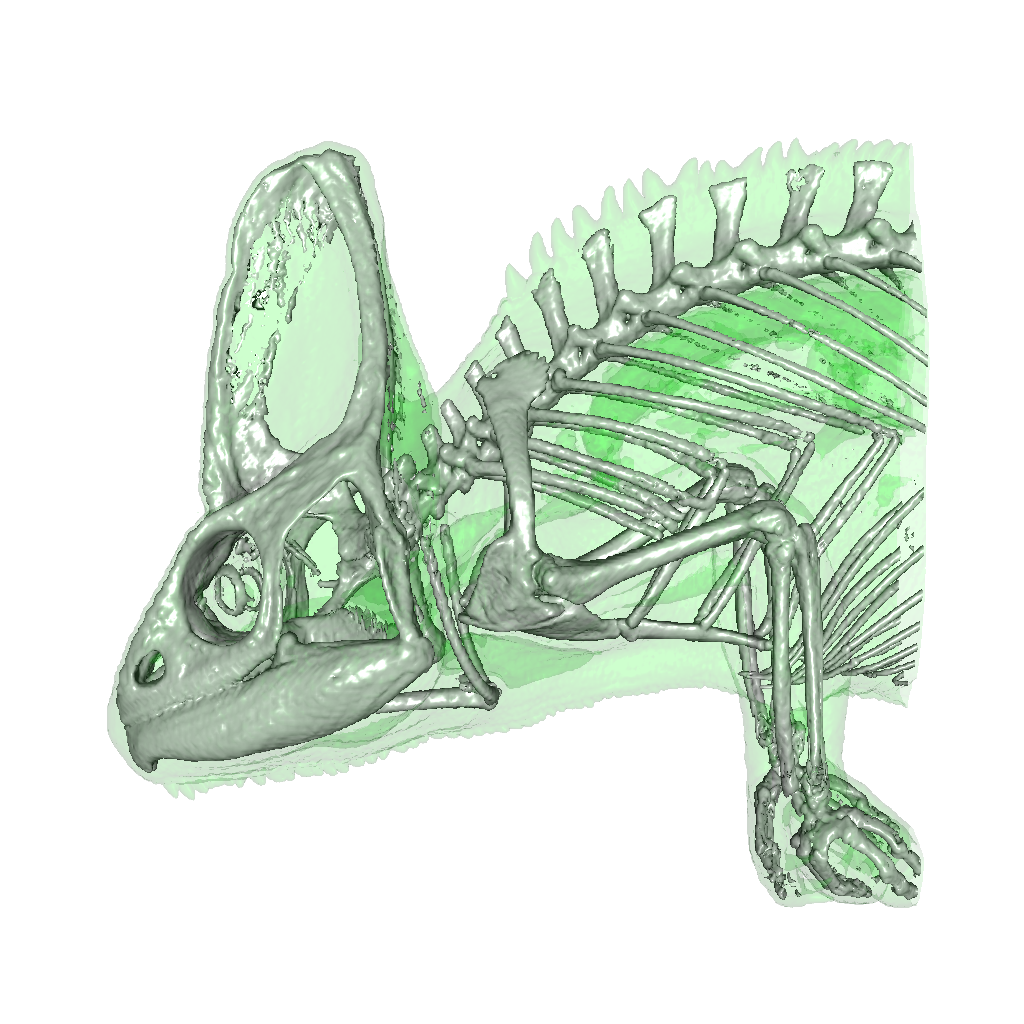

📝 chameleon_isosurface

❌ FAILED 0/45 (0.0%)

📋 Task Description

Task:

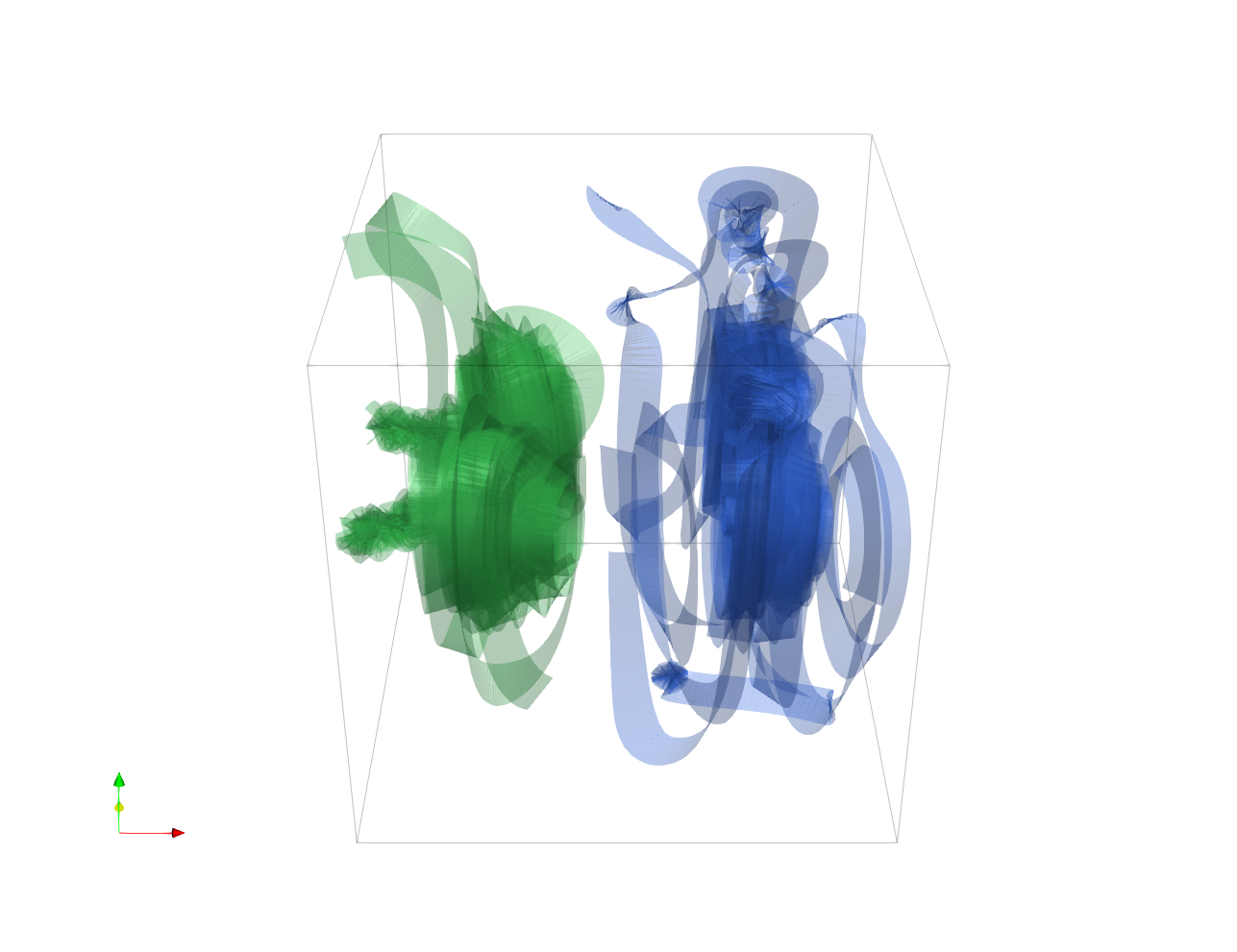

Load the chameleon dataset from "chameleon_isosurface/data/chameleon_isosurface_256x256x256_float32.vtk".

Generate a visualization image of 2 isosurfaces of the Chameleon scalar field dataset with the following visualization settings:

1) Create isosurfaces of Iso_1 with a value of 0.12 and Iso_2 with a value of 0.45

2) Assign RGB color of [0.0, 1.0, 0.0] to Iso_1, and color of [1.0, 1.0, 1.0] to Iso_2

3) Assign opacity of 0.1 to Iso_1, and opacity of 0.99 to Iso_2

4) Set the lighting parameter as: 0.1 to Ambient; 0.7 to Diffuse; 0.6 to Specular

5) Set the viewpoint parameters as: [600, 0, 0] to position; [0, 0, 0] to focal point; [0, -1, 0] to camera up direction

6) White background

7) Visualization image resolution is 1024x1024

8) Don't show color/scalar bar or coordinate axes.

Save the visualization image as "chameleon_isosurface/results/{agent_mode}/chameleon_isosurface.png".

(Optional, but must save if use paraview) Save the paraview state as "chameleon_isosurface/results/{agent_mode}/chameleon_isosurface.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "chameleon_isosurface/results/{agent_mode}/chameleon_isosurface.py".

(Optional, but must save if use VTK) Save the cxx code script as "chameleon_isosurface/results/{agent_mode}/chameleon_isosurface.cxx"

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 1/30

Output Generation: 5/5

Efficiency: 10/10, completed in 24.76 seconds (excellent)

Input Tokens: 714

Output Tokens: 1,282

Total Tokens: 1,996

Total Cost: $0.0214

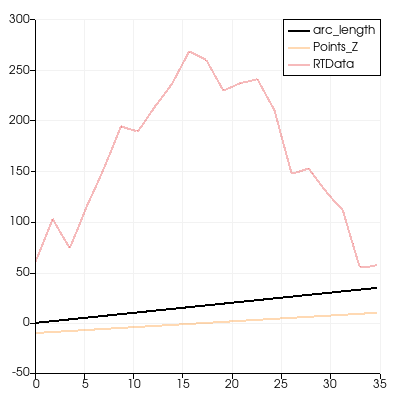

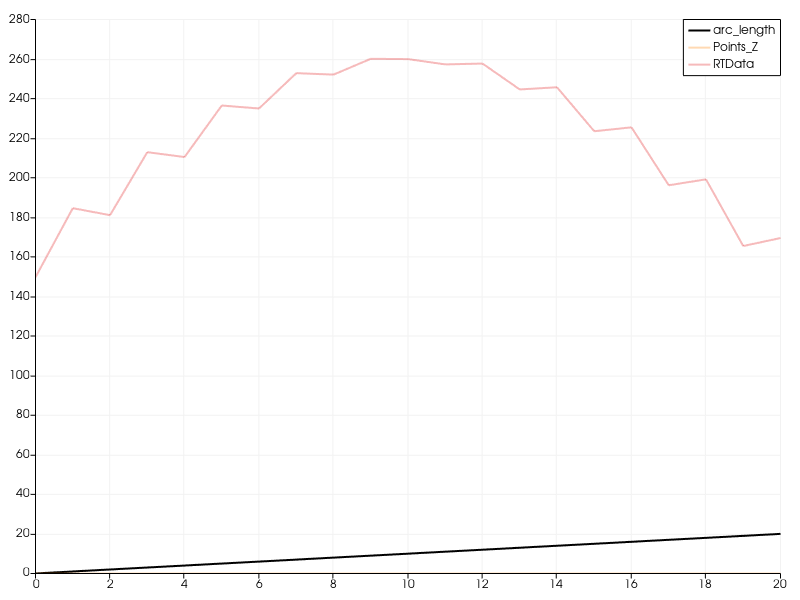

📝 chart-opacity

35/55 (63.6%)

📋 Task Description

Create a wavelet object.

Create a plot over line chart from the wavelet with three paths: arc_length, Points_Z, and RTData variables with opacity for arc_length 1 and opacity for Points_Z and RTData 0.3.

Save the visualization image as "chart-opacity/results/{agent_mode}/chart-opacity.png".

(Optional, but must save if use paraview) Save the paraview state as "chart-opacity/results/{agent_mode}/chart-opacity.pvsm".

(Optional, but must save if use python script) Save the python script as "chart-opacity/results/{agent_mode}/chart-opacity.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 20/40

Output Generation: 5/5

Efficiency: 10/10, completed in 15.23 seconds (excellent)

Input Tokens: 381

Output Tokens: 846

Total Tokens: 1,227

Total Cost: $0.0138

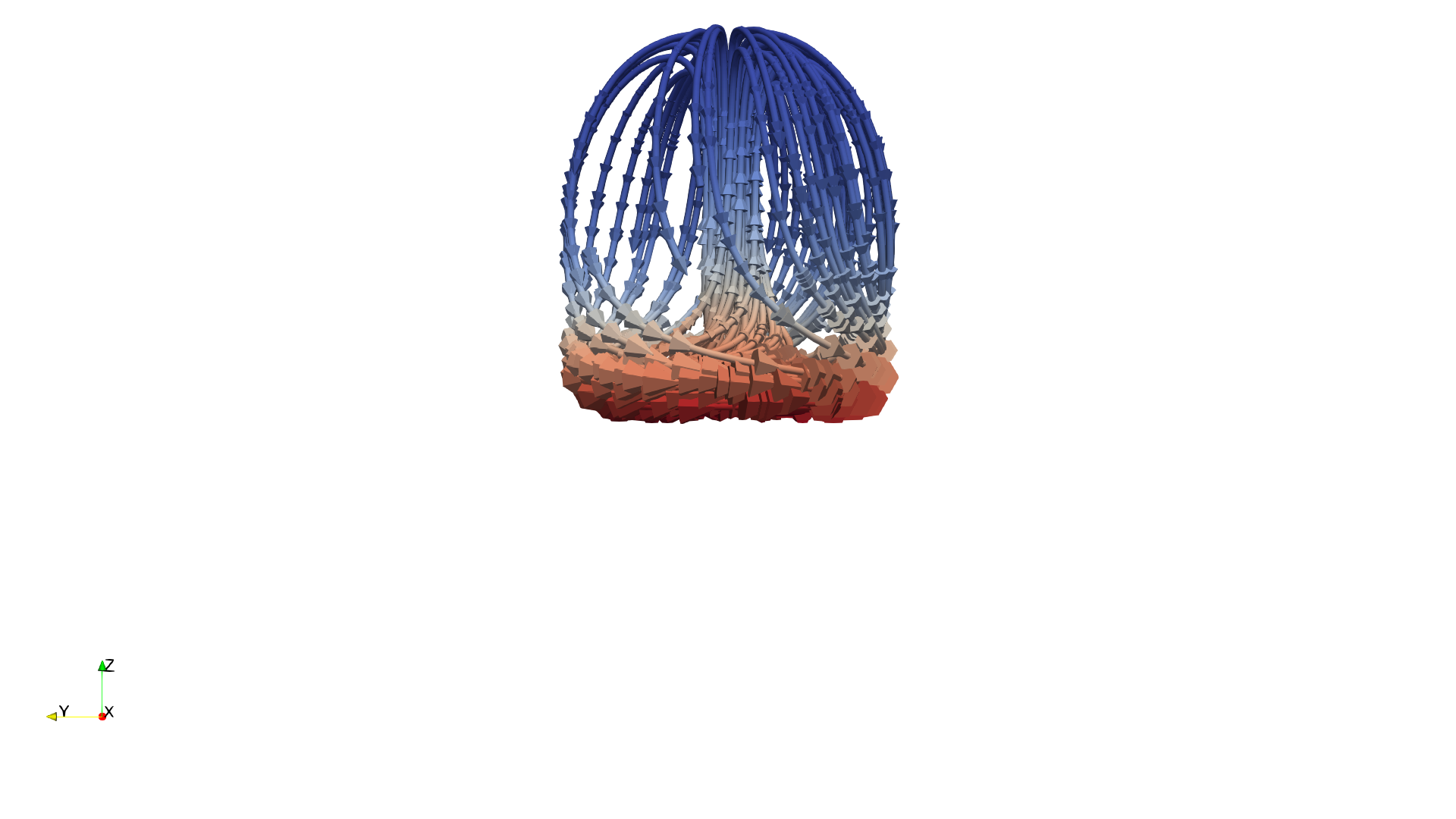

📝 climate

28/45 (62.2%)

📋 Task Description

I would like to use ParaView to visualize a dataset of ocean currents.

Read in the file named "climate/data/climate.vtp".

Apply a calculator filter to compute the following function:

(-velocity_X*sin(coordsX*0.0174533) + velocity_Y*cos(coordsX*0.0174533)) * iHat + (-velocity_X * sin(coordsY*0.0174533) * cos(coordsX*0.0174533) - velocity_Y * sin(coordsY*0.0174533) * sin(coordsX*0.0174533) + velocity_Z * cos(coordsY*0.0174533)) * jHat + 0*kHat
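To make the calculator expression easier to check by hand, here is the same arithmetic as a plain Python function. The constant 0.0174533 is approximately π/180, so `coordsX` and `coordsY` are being converted from degrees to radians. The function name `rotate_velocity` and its argument names are illustrative, not ParaView identifiers.

```python
import math

DEG_TO_RAD = 0.0174533  # approximately math.pi / 180, as written in the task


def rotate_velocity(vx, vy, vz, coords_x_deg, coords_y_deg):
    """Evaluate the calculator expression for one point; returns (i, j, k) components."""
    x = coords_x_deg * DEG_TO_RAD
    y = coords_y_deg * DEG_TO_RAD
    i = -vx * math.sin(x) + vy * math.cos(x)
    j = (-vx * math.sin(y) * math.cos(x)
         - vy * math.sin(y) * math.sin(x)
         + vz * math.cos(y))
    return (i, j, 0.0)


# At coordsX = coordsY = 0 the result reduces to (velocity_Y, velocity_Z, 0).
print(rotate_velocity(1.0, 2.0, 3.0, 0.0, 0.0))
```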

Render the computed values using a tube filter with 0.05 as the tube radius.

Color the tubes by the magnitude of the velocity.

Light the tubes with the maximum shininess and include normals in the lighting.

Add cone glyphs to show the direction of the velocity.

The glyphs are composed of 10 polygons, having a radius 0 0.15, a height of 0.5, and a scaling factor of 0.5.

View the result in the -z direction.

Adjust the view so that the tubes occupy 90% of the image.

Save a screenshot of the result, 2294 x 1440 pixels, white background, in the filename "climate/results/{agent_mode}/climate.png".

(Optional, but must save if use paraview) Save the paraview state as "climate/results/{agent_mode}/climate.pvsm".

(Optional, but must save if use python script) Save the python script as "climate/results/{agent_mode}/climate.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 13/30

Output Generation: 5/5

Efficiency: 10/10, completed in 44.64 seconds (excellent)

Input Tokens: 2,123

Output Tokens: 2,968

Total Tokens: 5,091

Total Cost: $0.0509

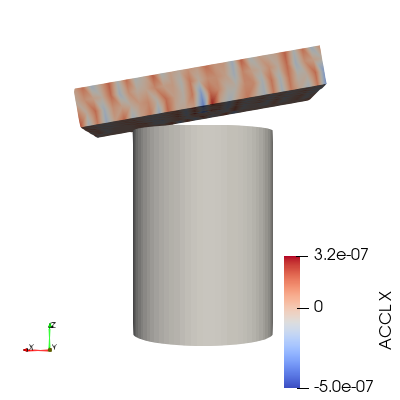

📝 color-blocks

❌ FAILED 0/55 (0.0%)

📋 Task Description

I would like to use ParaView to visualize a dataset.

Set the background to a blue-gray palette.

Read the file "color-blocks/data/color-blocks.ex2".

This is a multiblock dataset.

Color the dataset by the vtkBlockColors field.

Retrieve the color map for vtkBlockColors.

Retrieve the opacity transfer function for vtkBlockColors.

Retrieve the 2D transfer function for vtkBlockColors.

Set block coloring for the block at /IOSS/element_blocks/block_2 using the variable ACCL on the x component of the points.

Rescale the block's color and opacity maps to match the current data range of block_2.

Retrieve the color transfer function for the ACCL variable of block_2.

Enable the color bar for block_2.

Apply a cool to warm color preset to the color map for block_2.

Set the camera to look down the -y direction and to see the entire dataset.

Save the visualization image as "color-blocks/results/{agent_mode}/color-blocks.png".

(Optional, but must save if use paraview) Save the paraview state as "color-blocks/results/{agent_mode}/color-blocks.pvsm".

(Optional, but must save if use python script) Save the python script as "color-blocks/results/{agent_mode}/color-blocks.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 0/40

Output Generation: 5/5

Efficiency: 10/10, completed in 51.39 seconds (excellent)

Input Tokens: 4,296

Output Tokens: 4,369

Total Tokens: 8,665

Total Cost: $0.0784

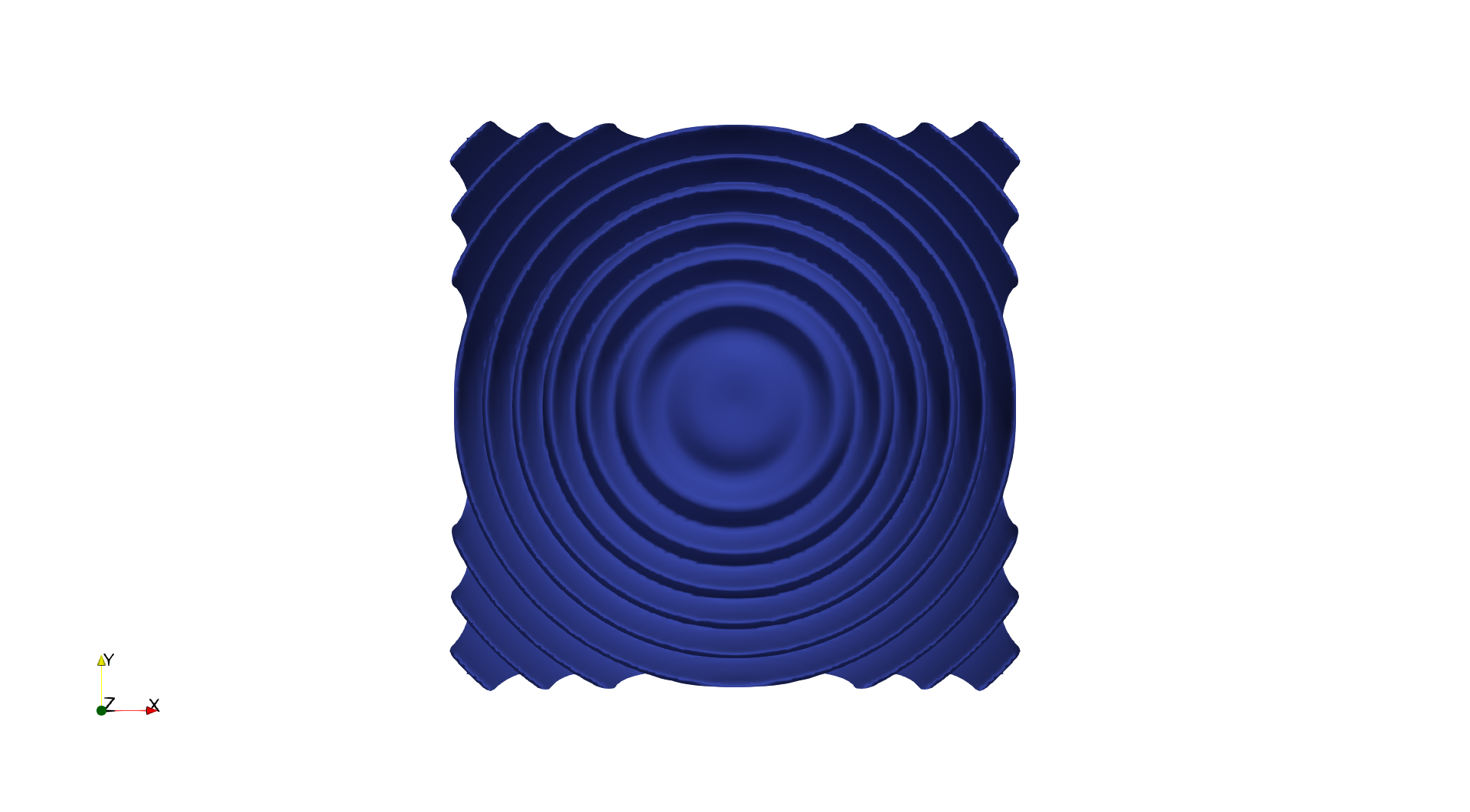

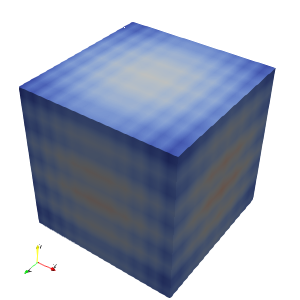

📝 color-data

43/45 (95.6%)

📋 Task Description

Create a wavelet object.

Create a new calculator with the function 'RTData*iHat + ln(RTData)*jHat + coordsZ*kHat'.

Get a color transfer function/color map and opacity transfer function/opacity map for the result of the calculation, scaling the color and/or opacity maps to the data range.

For a surface representation, color by the x coordinate of the result using a cool to warm color map, show the color bar/color legend, and save a screenshot of size 1158 x 833 pixels in "color-data/results/{agent_mode}/color-data.png".

(Optional, but must save if use paraview) Save the paraview state as "color-data/results/{agent_mode}/color-data.pvsm".

(Optional, but must save if use python script) Save the python script as "color-data/results/{agent_mode}/color-data.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 28/30

Output Generation: 5/5

Efficiency: 10/10, completed in 25.42 seconds (excellent)

Input Tokens: 1,198

Output Tokens: 1,478

Total Tokens: 2,676

Total Cost: $0.0258

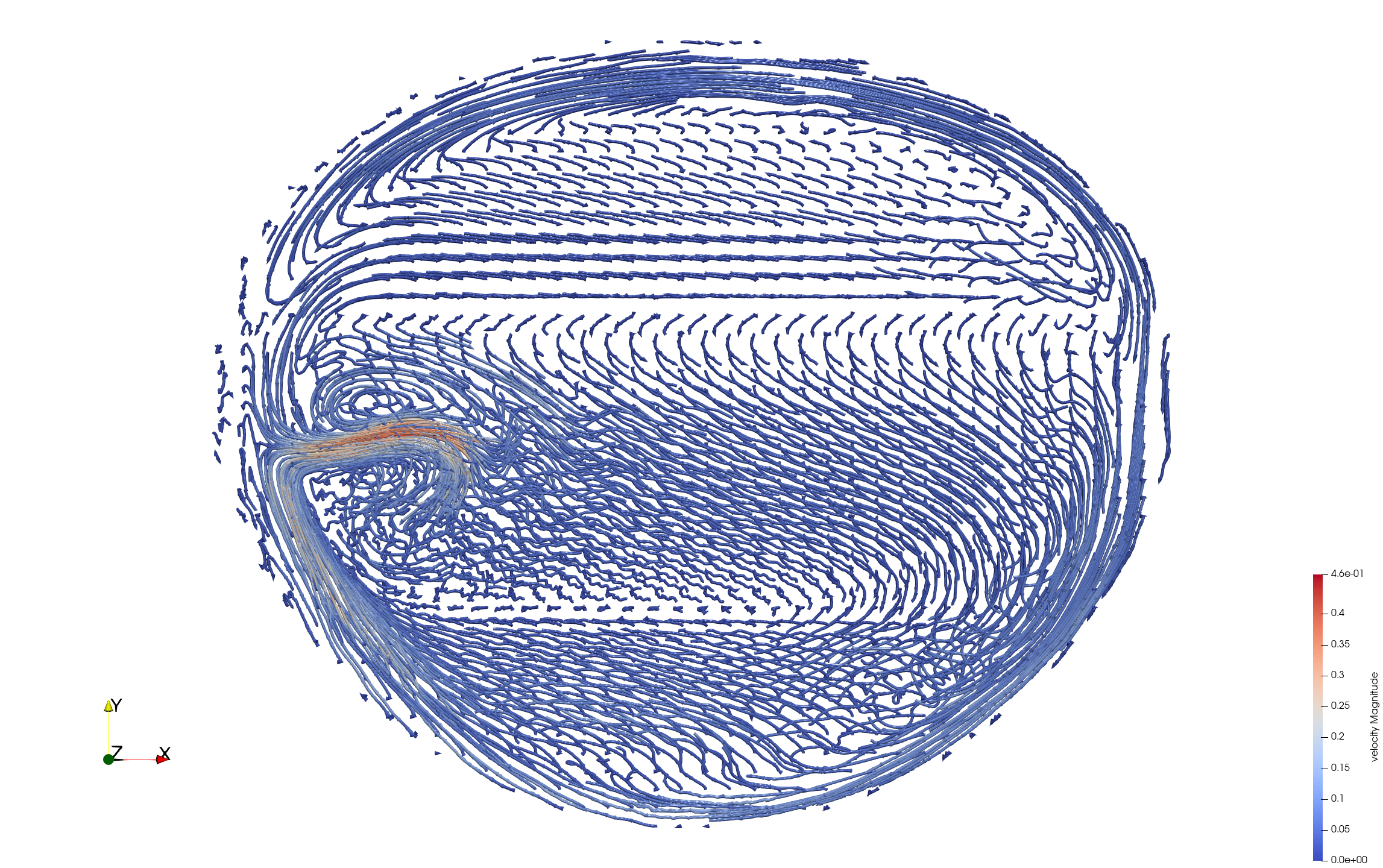

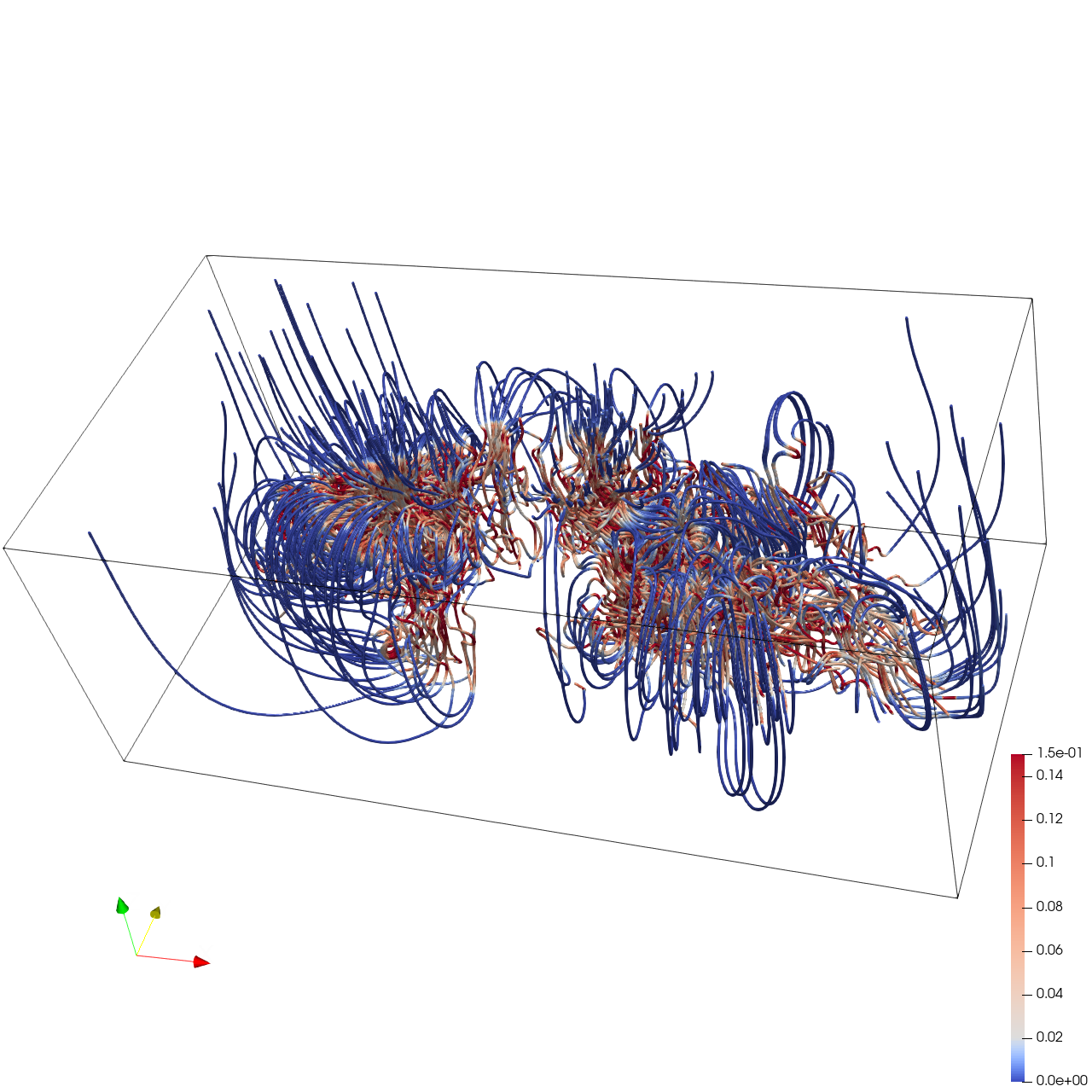

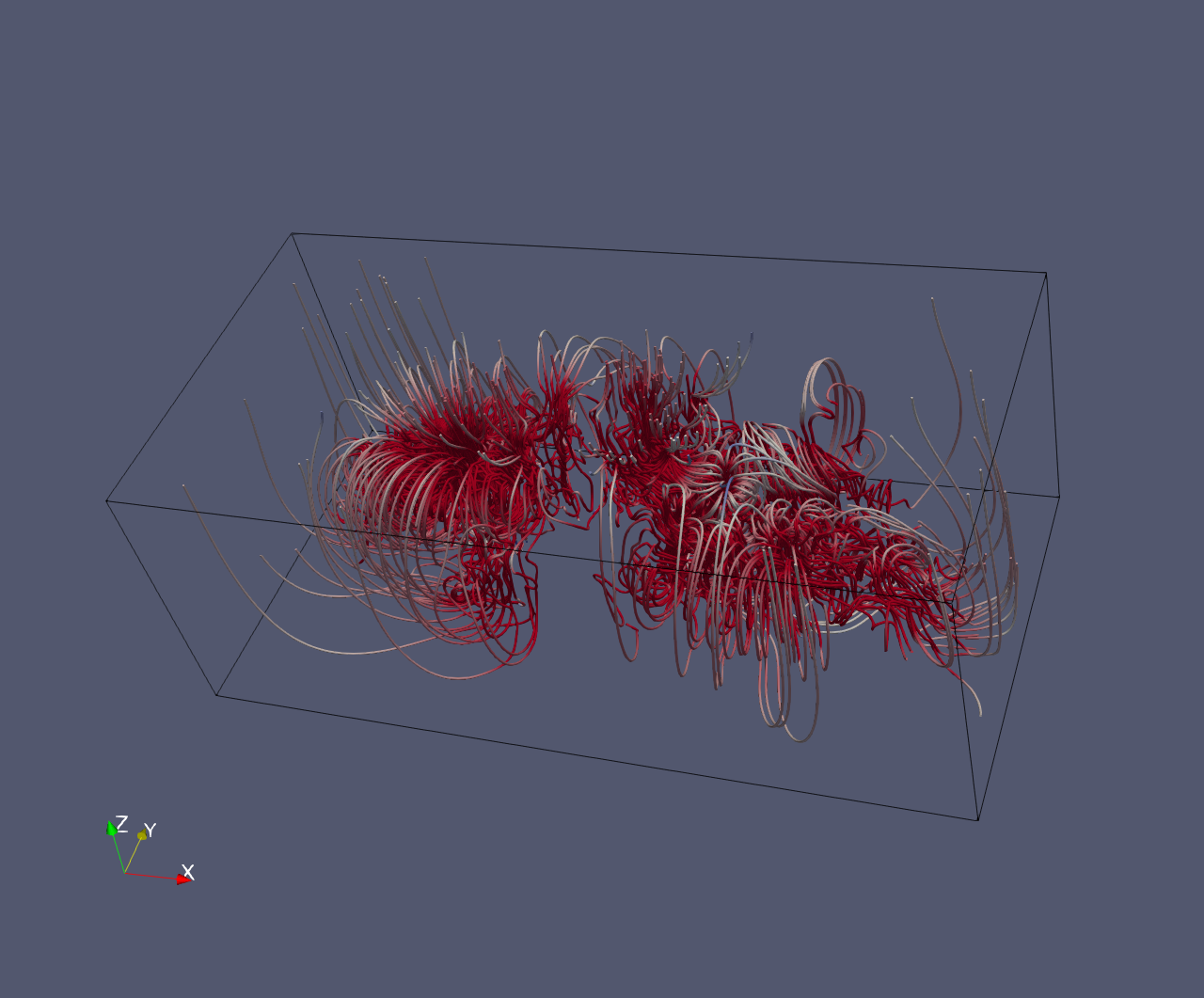

📝 crayfish_streamline

32/45 (71.1%)

📋 Task Description

Load the Crayfish flow vector field from "crayfish_streamline/data/crayfish_streamline_322x162x119_float32_scalar3.raw", the information about this dataset:

Crayfish Flow (Vector)

Data Scalar Type: float

Data Byte Order: Little Endian

Data Extent: 322x162x119

Number of Scalar Components: 3

Data loading is very important, make sure you correctly load the dataset according to their features.

Create two streamline sets using "Stream Tracer" filters with "Point Cloud" seed type, each with 100 seed points and radius 32.2:

- Streamline 1: Seed center at [107.33, 81.0, 59.5]. Apply a "Tube" filter (radius 0.5, 12 sides). Color by Velocity magnitude using a diverging colormap with the following RGB control points:

- Value 0.0 -> RGB(0.231, 0.298, 0.753) (blue)

- Value 0.02 -> RGB(0.865, 0.865, 0.865) (white)

- Value 0.15 -> RGB(0.706, 0.016, 0.149) (red)

- Streamline 2: Seed center at [214.67, 81.0, 59.5]. Apply a "Tube" filter (radius 0.5, 12 sides). Color by Velocity magnitude using the same colormap.
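The three control points above define a piecewise colormap over velocity magnitude. The sketch below looks up a color by plain linear interpolation in RGB between neighboring control points. That interpolation is an assumption made for illustration: ParaView may interpolate in a different color space, so intermediate colors can differ. `lookup_color` and `CONTROL_POINTS` are hypothetical names.

```python
from bisect import bisect_right

# (value, (r, g, b)) control points taken from the task description.
CONTROL_POINTS = [
    (0.0, (0.231, 0.298, 0.753)),
    (0.02, (0.865, 0.865, 0.865)),
    (0.15, (0.706, 0.016, 0.149)),
]


def lookup_color(value, points=CONTROL_POINTS):
    """Return an RGB tuple by linear interpolation between control points."""
    xs = [x for x, _ in points]
    # Values outside the control-point range are clamped to the end colors.
    if value <= xs[0]:
        return points[0][1]
    if value >= xs[-1]:
        return points[-1][1]
    hi = bisect_right(xs, value)
    (x0, c0), (x1, c1) = points[hi - 1], points[hi]
    t = (value - x0) / (x1 - x0)
    return tuple(a + t * (b - a) for a, b in zip(c0, c1))


print(lookup_color(0.01))
```

Note that the white midpoint sits at 0.02 rather than halfway through the 0.0 to 0.15 range, so most of the range maps to the white-to-red half of the colormap.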

Show the dataset bounding box as an outline (black).

In the pipeline browser panel, hide all stream tracers and only show the tube filters and the outline.

Use a white background. Render at 1280x1280.

Set the viewpoint parameters as: [436.67, -370.55, 562.71] to position; [160.5, 80.5, 59.07] to focal point; [-0.099, 0.714, 0.693] to camera up direction

Save the paraview state as "crayfish_streamline/results/{agent_mode}/crayfish_streamline.pvsm".

Save the visualization image as "crayfish_streamline/results/{agent_mode}/crayfish_streamline.png".

(Optional, if use python script) Save the python script as "crayfish_streamline/results/{agent_mode}/crayfish_streamline.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 18/30

Output Generation: 5/5

Efficiency: 9/10, completed in 67.64 seconds (very good)

PSNR: 17.07 dB

SSIM: 0.8815

LPIPS: 0.1054

Input Tokens: 4,531

Output Tokens: 5,516

Total Tokens: 10,047

Total Cost: $0.0963

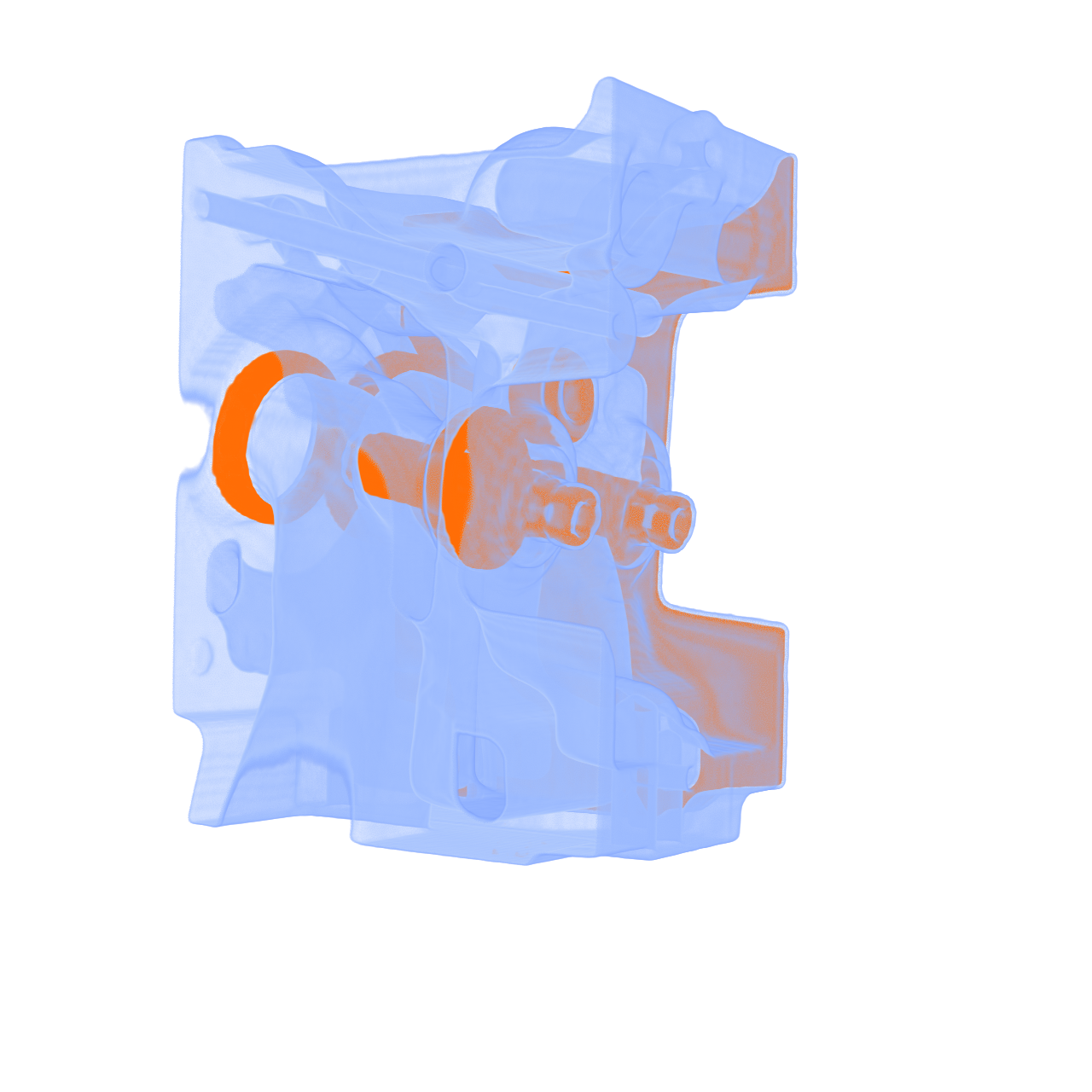

📝 engine

⚠️ LOW SCORE 20/55 (36.4%)

📋 Task Description

Task:

Load the vortex dataset from "engine/data/engine_256x256x128_uint8.raw", the information about this dataset:

engine (Scalar)

Data Scalar Type: float

Data Byte Order: little Endian

Data Extent: 256x256x128

Number of Scalar Components: 1

Instructions:

1. Load the dataset into ParaView.

2. Apply the volume rendering to visualize the engine dataset

3. Adjust the transfer function to make the outer part more transparent and the inner part more solid. Use light blue for the outer part and orange for the inner part.

4. Use a white background. Find an optimal view. Render at 1280x1280. Do not show a color bar or coordinate axes.

5. Set the viewpoint parameters as: [-184.58, 109.48, -431.72] to position; [134.05, 105.62, 88.92] to focal point; [0.01, 1.0, -0.001] to camera up direction.

6. Save your work:

Save the visualization image as "engine/results/{agent_mode}/engine.png".

(Optional, but must save if use paraview) Save the paraview state as "engine/results/{agent_mode}/engine.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "engine/results/{agent_mode}/engine.py".

(Optional, but must save if use VTK) Save the cxx code script as "engine/results/{agent_mode}/engine.cxx"

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 5/40

Output Generation: 5/5

Efficiency: 10/10, completed in 19.12 seconds (excellent)

PSNR: 7.19 dB

SSIM: 0.7527

LPIPS: 0.2979

Input Tokens: 596

Output Tokens: 1,106

Total Tokens: 1,702

Total Cost: $0.0184

📝 export-gltf

52/55 (94.5%)

📋 Task Description

Create a wavelet object.

Create a surface rendering of the wavelet object and color by RTData.

Scale the color map to the data, and don't display the color bar or the orientation axes.

Export the view to "export-gltf/results/{agent_mode}/ExportedGLTF.gltf".

Next load the file "export-gltf/results/{agent_mode}/ExportedGLTF.gltf" and display it as a surface.

Color this object by TEXCOORD_0.

Scale the color map to the data, and don't display the color bar or the orientation axes.

Use the 'Cool to Warm' colormap. Set the background color to white.

Save the visualization image as "export-gltf/results/{agent_mode}/export-gltf.png".

(Optional, but must save if use paraview) Save the paraview state as "export-gltf/results/{agent_mode}/export-gltf.pvsm".

(Optional, but must save if use python script) Save the python script as "export-gltf/results/{agent_mode}/export-gltf.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 37/40

Output Generation: 5/5

Efficiency: 10/10, completed in 59.61 seconds (excellent)

Input Tokens: 4,744

Output Tokens: 5,013

Total Tokens: 9,757

Total Cost: $0.0894

📝 foot

35/45 (77.8%)

📋 Task Description

Task:

Load the Foot dataset from "foot/data/foot_256x256x256_uint8.raw", the information about this dataset:

Foot

Description: Rotational C-arm x-ray scan of a human foot. Tissue and bone are present in the dataset.

Data Type: uint8

Data Byte Order: little Endian

Data Spacing: 1x1x1

Data Extent: 256x256x256

Data loading is very important, make sure you correctly load the dataset according to their features.

Visualize the anatomical structures:

1. Apply volume rendering with an X-ray transfer function that distinguishes soft tissues and bones. Bones with darker color, and soft tissue with lighter color.

2. Analyze the visualization and answer the following questions:

Q1: Based on the X-ray style volume rendering of the foot dataset, which of the following best describes the visibility of bony structures?

A. Both the phalanges and metatarsals are fully visible

B. The phalanges are fully visible, but the metatarsals are only partially visible

C. The metatarsals are fully visible, but the phalanges are only partially visible

D. Neither the phalanges nor the metatarsals are clearly visible

3. Use a white background. Find an optimal view. Render at 1280x1280. Do not show a color bar or coordinate axes.

4. Set the viewpoint parameters as: [-576.41, -264.41, -153.48] to position; [127.5, 127.5, 127.5] to focal point; [-0.52, 0.38, 0.76] to camera up direction.

5. Save your work:

Save the visualization image as "foot/results/{agent_mode}/foot.png".

Save the answers to the analysis questions in plain text as "foot/results/{agent_mode}/answers.txt".

(Optional, but must save if use paraview) Save the paraview state as "foot/results/{agent_mode}/foot.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "foot/results/{agent_mode}/foot.py".

(Optional, but must save if use VTK) Save the cxx code script as "foot/results/{agent_mode}/foot.cxx"

Do not save any other files, and always save the visualization image and the text file.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📝 Text-Based Q&A Evaluation

📊 Detailed Metrics

Visualization Quality: 18/20

Output Generation: 5/5

Efficiency: 10/10, completed in 32.30 seconds (excellent)

PSNR: 26.59 dB

SSIM: 0.9869

LPIPS: 0.0412

Text Q&A Score: 2/10 (20.0%)

Input Tokens: 772

Output Tokens: 1,843

Total Tokens: 2,615

Total Cost: $0.0300

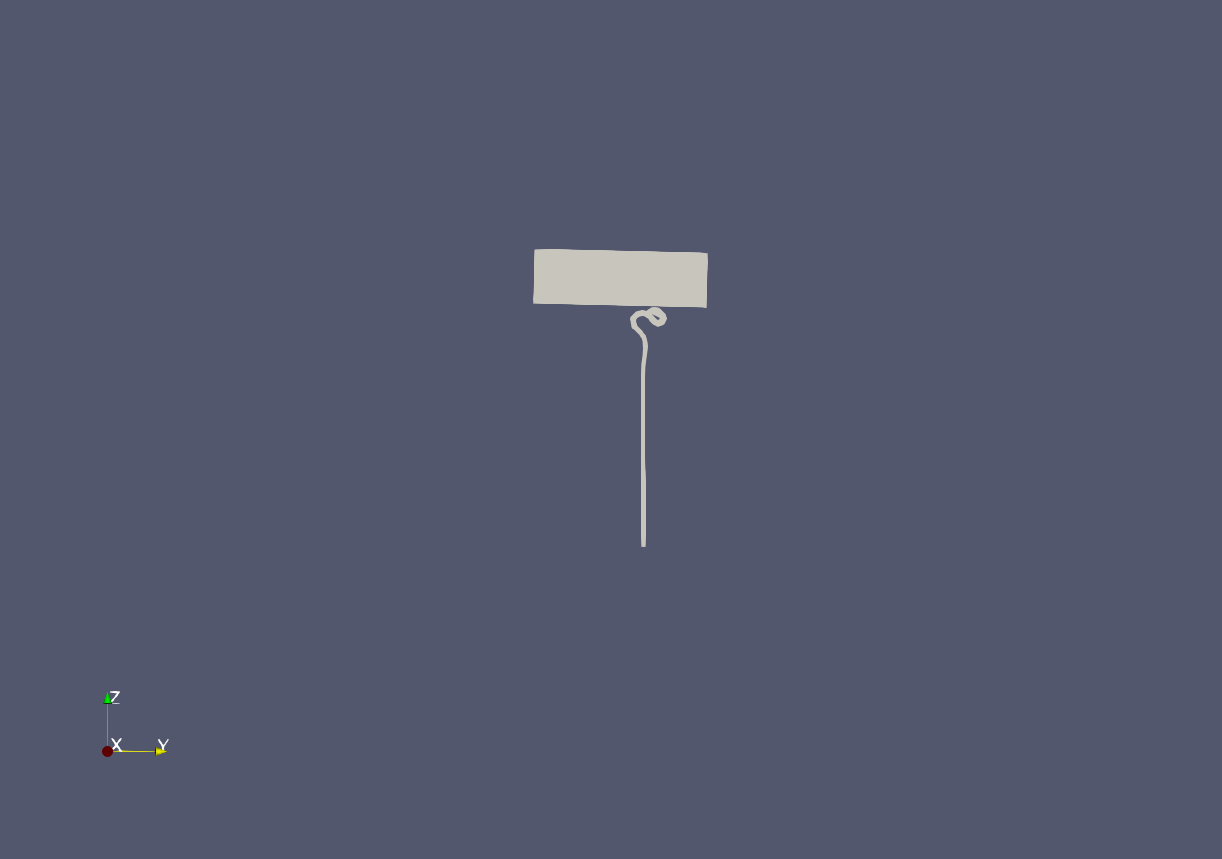

📝 import-gltf

45/55 (81.8%)

📋 Task Description

Load the "BlueGrayBackground" palette.

Read the file "import-gltf/data/import-gltf.glb" and import the nodes "/assembly/Axle", "assembly/OuterRing/Torus002", and "assembly/OuterRing/MiddleRing/InnerRing".

Set the layout size to 300x300 pixels.

Point the camera in the positive Y direction and zoom to fit.

Make sure all views are rendered, then save a screenshot to "import-gltf/results/{agent_mode}/import-gltf.png".

(Optional, but must save if use paraview) Save the paraview state as "import-gltf/results/{agent_mode}/import-gltf.pvsm".

(Optional, but must save if use python script) Save the python script as "import-gltf/results/{agent_mode}/import-gltf.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 30/40

Output Generation: 5/5

Efficiency: 10/10, completed in 39.90 seconds (excellent)

Input Tokens: 3,189

Output Tokens: 3,133

Total Tokens: 6,322

Total Cost: $0.0566

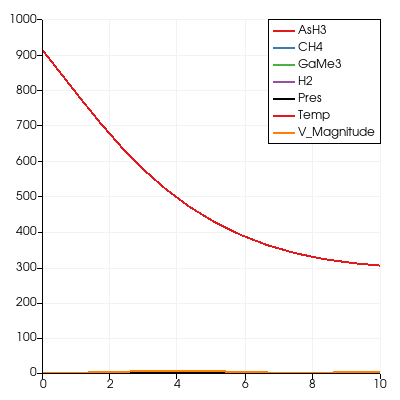

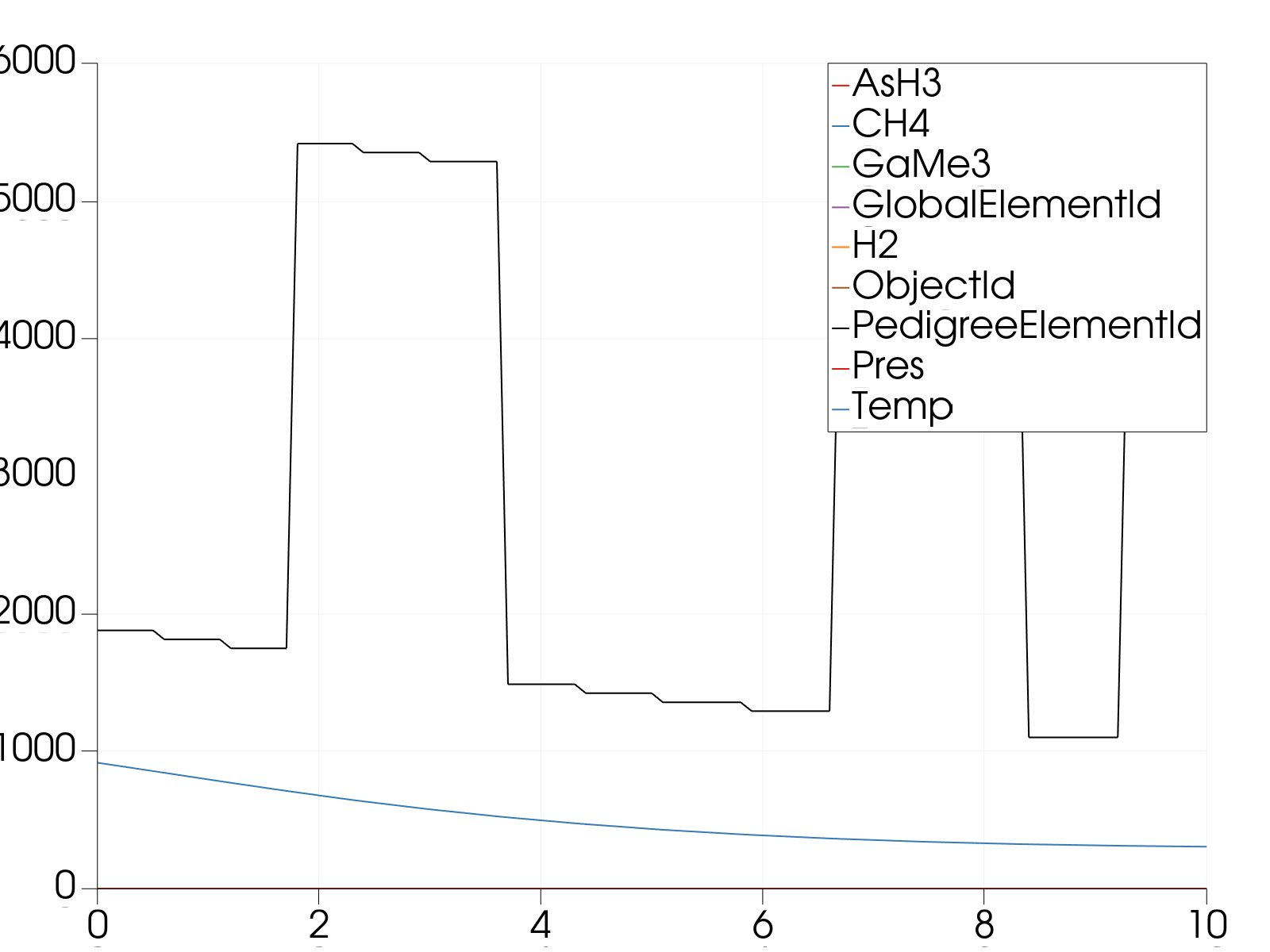

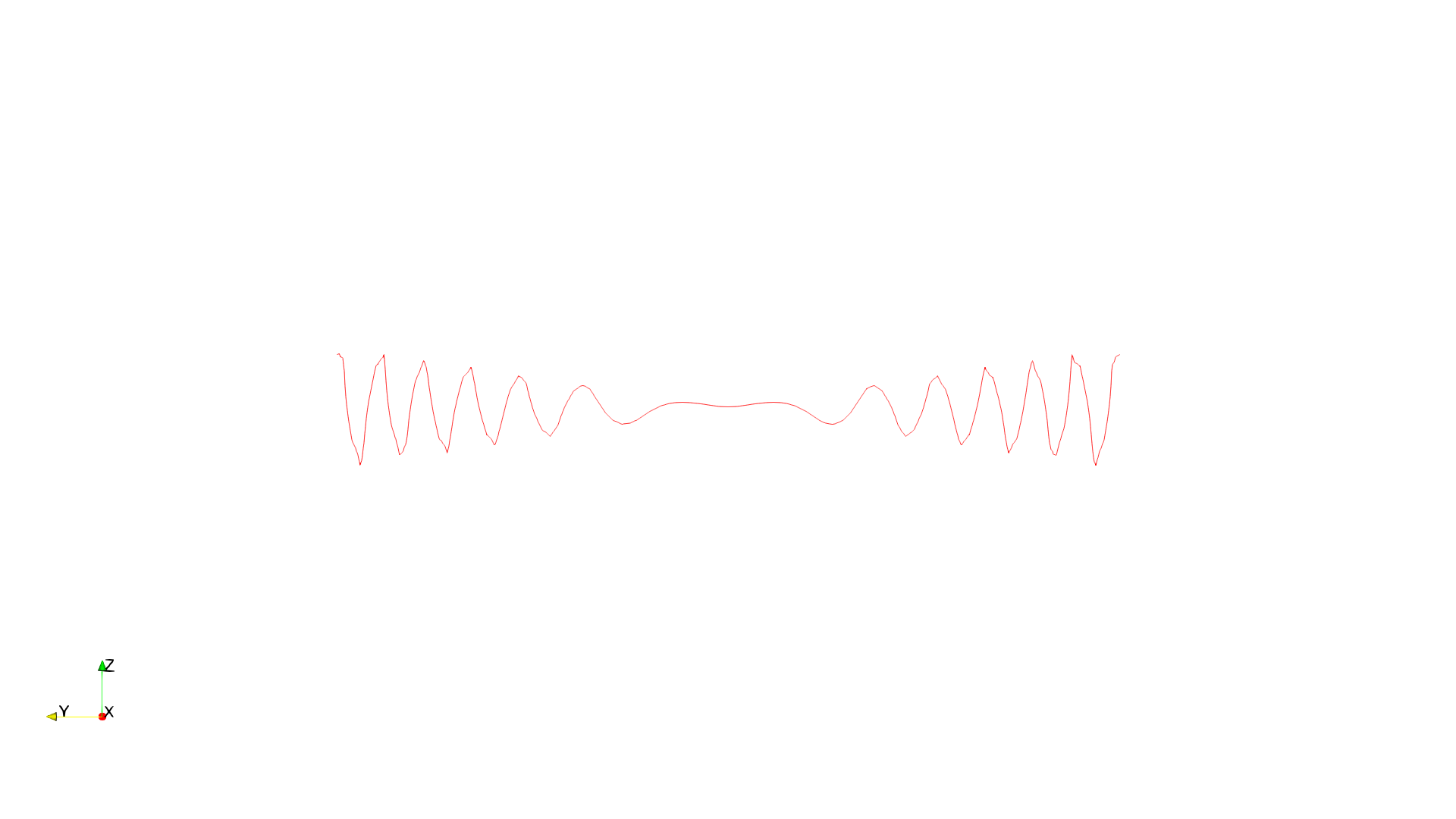

📝 line-plot

32/55 (58.2%)

📋 Task Description

Read the dataset in the file "line-plot/data/line-plot.ex2", and print the number of components and the range of all the variables.

Show a default view of the dataset, colored by the variable Pres.

Create a line plot over all the variables in the dataset, from (0,0,0) to (0,0,10).

Write the values of the line plot in the file "line-plot/results/{agent_mode}/line-plot.csv", and save a screenshot of the line plot in "line-plot/results/{agent_mode}/line-plot.png".

(Optional, but must save if use paraview) Save the paraview state as "line-plot/results/{agent_mode}/line-plot.pvsm".

(Optional, but must save if use python script) Save the python script as "line-plot/results/{agent_mode}/line-plot.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality: 17/40

Output Generation: 5/5

Efficiency: 10/10, completed in 53.51 seconds (excellent)

Total Cost: $0.0009

📝 lobster

⚠️ LOW SCORE 21/55 (38.2%)

📋 Task Description

Task:

Load the Lobster dataset from "lobster/data/lobster_301x324x56_uint8.raw", the information about this dataset:

Lobster

Description: CT scan of a lobster contained in a block of resin.

Data Type: uint8

Data Byte Order: little Endian

Data Spacing: 1x1x1.4

Data Extent: 301x324x56

Data loading is very important, make sure you correctly load the dataset according to their features.

Visualize the scanned specimen:

1. Create an isosurface at the specimen boundary, find a proper isovalue to show the whole structure.

2. Use natural colors appropriate for the specimen (red-orange for lobster)

3. Analyze the visualization and answer the following questions:

Q1: Based on the isosurface visualization of the lobster specimen, how many walking legs are visible?

A. 6 walking legs

B. 7 walking legs

C. 8 walking legs

D. 10 walking legs

4. Use a white background. Find an optimal view. Render at 1280x1280. Do not show a color bar or coordinate axes.

5. Set the viewpoint parameters as: [543.52, -957.0, 1007.87] to position; [150.0, 161.5, 38.5] to focal point; [-0.15, 0.62, 0.77] to camera up direction.

6. Save your work:

Save the visualization image as "lobster/results/{agent_mode}/lobster.png".

Save the answers to the analysis questions in plain text as "lobster/results/{agent_mode}/answers.txt".

(Optional, but must save if use paraview) Save the paraview state as "lobster/results/{agent_mode}/lobster.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "lobster/results/{agent_mode}/lobster.py".

(Optional, but must save if use VTK) Save the cxx code script as "lobster/results/{agent_mode}/lobster.cxx"

Do not save any other files, and always save the visualization image and the text file.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📝 Text-Based Q&A Evaluation

📊 Detailed Metrics

Visualization Quality: 6/30

Output Generation: 5/5

Efficiency: 10/10, completed in 37.60 seconds (excellent)

PSNR: 18.27 dB

SSIM: 0.9352

LPIPS: 0.0861

Text Q&A Score: 0/10 (0.0%)

Input Tokens: 1,975

Output Tokens: 2,475

Total Tokens: 4,450

Total Cost: $0.0430

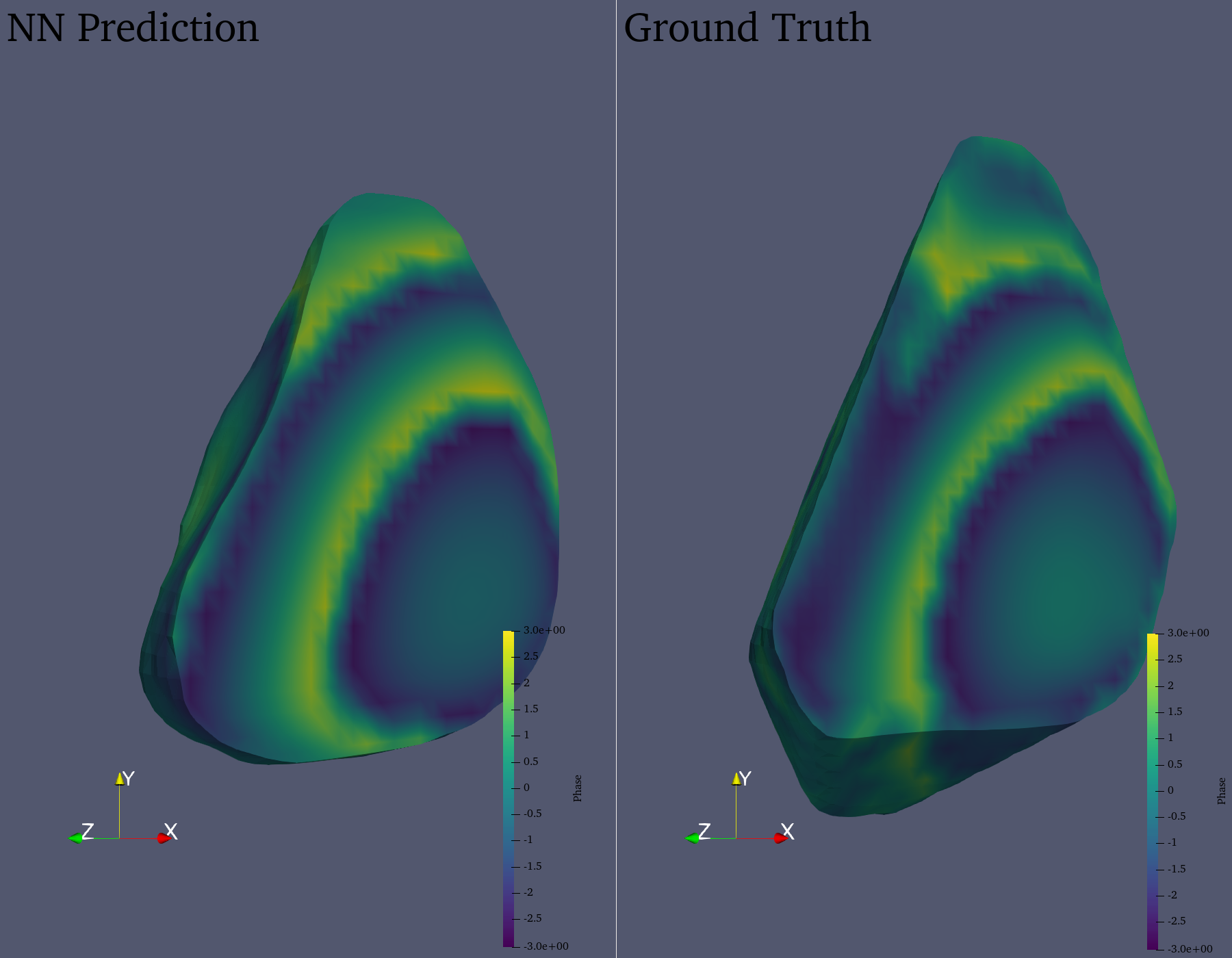

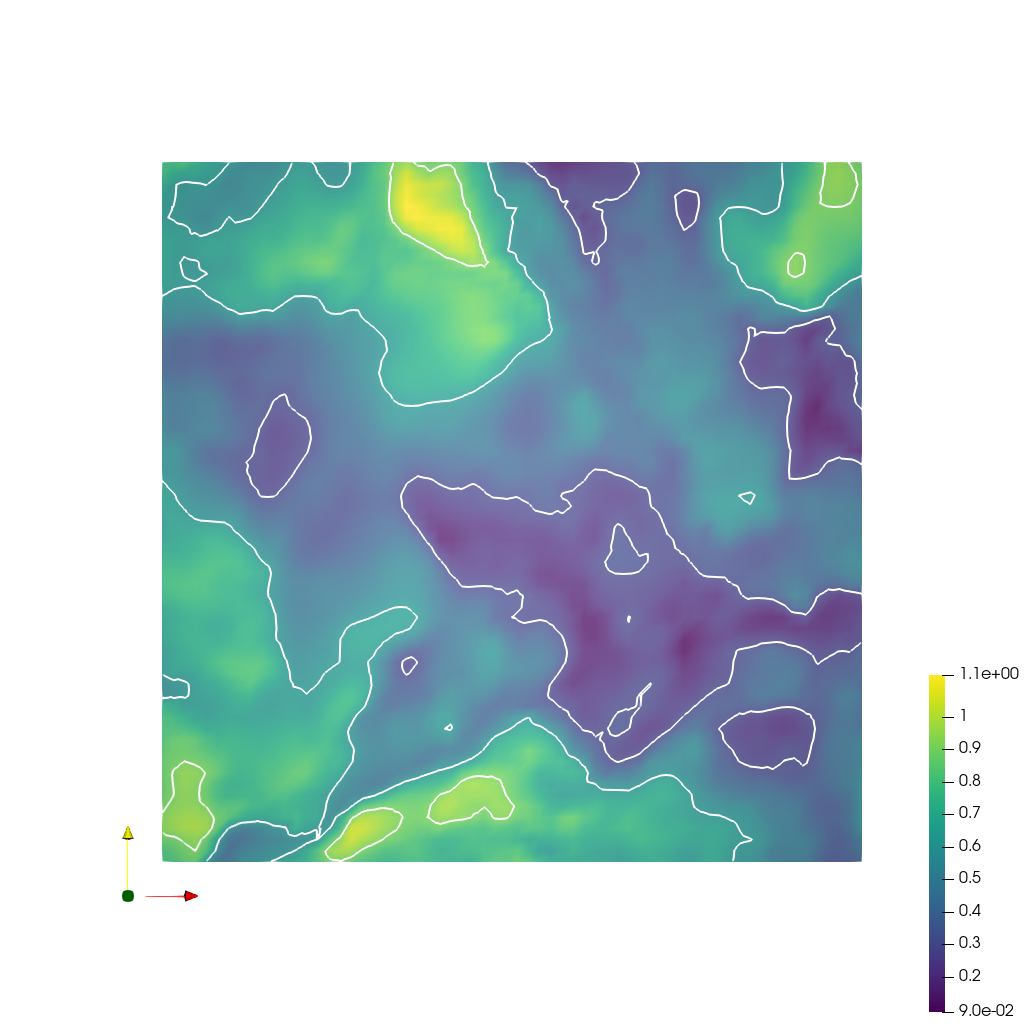

📝 materials

❌ FAILED 0/55 (0.0%)

📋 Task Description

Compare two datasets in two views side by side each 900 pixels wide x 1400 pixels high.

Read the dataset "materials/data/materials_prediction.vtr" in the left view and "materials/data/materials_ground_truth.vtr" in the right view.

In both views, convert the "Intensity" and "Phase" variables from cell to point data.

In both views, take an isovolume of the "Intensity" variable in the range of [0.2, 1.0], clipped with a plane at (32.0, 32.0, 32.0) and +x normal direction.

Color both views with the Viridis (matplotlib) color map for the "Phase" variable, scaled to the data range, including a colormap legend in both views.

Label the left view "NN Prediction" and the right view "Ground Truth".

Orient the camera to look in the (-1, 0, -1) direction, with the datasets fitting in the views.

Save the visualization image as "materials/results/{agent_mode}/materials.png".

(Optional, but must save if use paraview) Save the paraview state as "materials/results/{agent_mode}/materials.pvsm".

(Optional, but must save if use python script) Save the python script as "materials/results/{agent_mode}/materials.py".

Do not save any other files, and always save the visualization image.
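The camera and layout requirements above can be worked out before touching ParaView. The following is a minimal sketch, not the benchmark's reference solution: the bounds `(0, 64, 0, 64, 0, 64)` and the `distance_factor` parameter are assumptions made for illustration (the task only states the clip origin at (32.0, 32.0, 32.0)), and `camera_for_direction` is a hypothetical helper name.

```python
import math


def camera_for_direction(bounds, direction, distance_factor=3.0):
    """Return (position, focal_point) for a camera looking along `direction`.

    bounds is (xmin, xmax, ymin, ymax, zmin, zmax). distance_factor is a
    hypothetical multiplier of the bounding-sphere radius, chosen so the
    dataset fits in the view.
    """
    xmin, xmax, ymin, ymax, zmin, zmax = bounds
    focal = ((xmin + xmax) / 2, (ymin + ymax) / 2, (zmin + zmax) / 2)
    radius = 0.5 * math.sqrt(
        (xmax - xmin) ** 2 + (ymax - ymin) ** 2 + (zmax - zmin) ** 2
    )
    norm = math.sqrt(sum(c * c for c in direction))
    unit = tuple(c / norm for c in direction)
    # Step back from the focal point against the look direction.
    position = tuple(f - distance_factor * radius * u for f, u in zip(focal, unit))
    return position, focal


# Assumed bounds, for illustration only.
position, focal = camera_for_direction((0, 64, 0, 64, 0, 64), (-1, 0, -1))

# Two side-by-side views, each 900 x 1400 pixels.
layout_size = (2 * 900, 1400)
```

Looking in the (-1, 0, -1) direction places the camera on the +x/+z side of the data, at the same y as the focal point.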

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

0/40

Output Generation

5/5

Efficiency

8/10

Completed in 110.29 seconds (good)

Input Tokens

9,079

Output Tokens

10,577

Total Tokens

19,656

Total Cost

$0.1859

📝 mhd-magfield_streamribbon

❌ FAILED 0/55 (0.0%)

📋 Task Description

Load the MHD magnetic field dataset from "mhd-magfield_streamribbon/data/mhd-magfield_streamribbon.vti" (VTI format, 128x128x128 grid with components bx, by, bz).

Generate a stream ribbon seeded from a line source along the y-axis at x=64, z=64 (from y=20 to y=108), with 30 seed points.

The stream ribbon should be traced along the magnetic field lines.

Color the stream ribbon by magnetic field magnitude using the 'Cool to Warm' colormap. Enable surface lighting with specular reflection for better 3D perception.

Add a color bar labeled 'Magnetic Field Magnitude'.

Use a white background. Set an isometric camera view. Render at 1024x1024 resolution.

Set the viewpoint parameters as: [200.0, 200.0, 200.0] to position; [63.5, 63.5, 63.5] to focal point; [0.0, 0.0, 1.0] to camera up direction.

Save the visualization image as "mhd-magfield_streamribbon/results/{agent_mode}/mhd-magfield_streamribbon.png".

(Optional, but must save if use paraview) Save the paraview state as "mhd-magfield_streamribbon/results/{agent_mode}/mhd-magfield_streamribbon.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "mhd-magfield_streamribbon/results/{agent_mode}/mhd-magfield_streamribbon.py".

(Optional, but must save if use VTK) Save the cxx code script as "mhd-magfield_streamribbon/results/{agent_mode}/mhd-magfield_streamribbon.cxx"

Do not save any other files, and always save the visualization image.
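The seed line in this task can be checked numerically. The sketch below uses a hypothetical helper, `line_seeds`, to list the 30 evenly spaced seed positions. If the seeds come from a line source whose resolution counts segments rather than points (as VTK's line source does), 30 points would correspond to a resolution of 29. That mapping is an assumption to verify against the ParaView version in use.

```python
def line_seeds(p1, p2, n):
    """Return n evenly spaced points from p1 to p2, endpoints included."""
    if n < 2:
        raise ValueError("need at least two seed points")
    return [
        tuple(a + (b - a) * i / (n - 1) for a, b in zip(p1, p2))
        for i in range(n)
    ]


# Line along the y-axis at x=64, z=64, from y=20 to y=108, 30 seeds.
seeds = line_seeds((64.0, 20.0, 64.0), (64.0, 108.0, 64.0), 30)
spacing = (108.0 - 20.0) / (30 - 1)  # distance between neighbouring seeds
```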

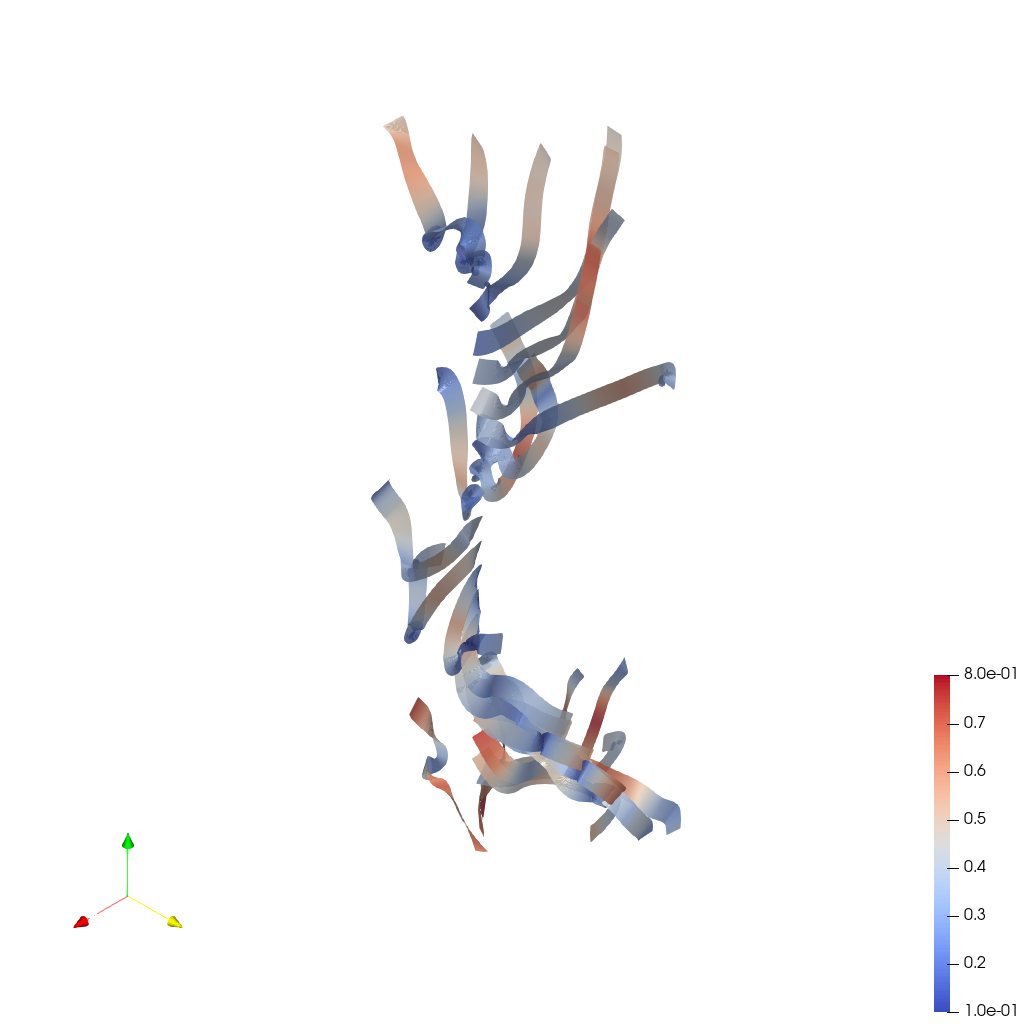

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

0/40

Output Generation

5/5

Efficiency

10/10

Completed in 41.61 seconds (excellent)

Total Cost

$0.0014

📝 mhd-turbulence_pathline

❌ FAILED 0/55 (0.0%)

📋 Task Description

Load the MHD turbulence velocity field time series "mhd-turbulence_pathline/data/mhd-turbulence_pathline_{timestep}.vti", where "timestep" in {0000, 0010, 0020, 0030, 0040} (5 timesteps, VTI format, 128x128x128 grid each).

Compute true pathlines by tracking particles through the time-varying velocity field using the ParticlePath filter. Apply TemporalShiftScale (scale=20) and TemporalInterpolator (interval=0.5) to extend particle travel and smooth trajectories.

Seed 26 points along a line on the z-axis at x=64, y=64 (from z=20 to z=108). Use static seeds with termination time 80.

Render pathlines as tubes with radius 0.3. Color by velocity magnitude using the 'Viridis (matplotlib)' colormap.

Add a color bar for velocity magnitude. Set the viewpoint parameters as: [200.0, 200.0, 200.0] to position; [63.5, 63.5, 63.5] to focal point; [0.0, 0.0, 1.0] to camera up direction.

Use a white background. Set an isometric camera view. Render at 1024x1024.

Save the visualization image as "mhd-turbulence_pathline/results/{agent_mode}/mhd-turbulence_pathline.png".

(Optional, but must save if use paraview) Save the paraview state as "mhd-turbulence_pathline/results/{agent_mode}/mhd-turbulence_pathline.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "mhd-turbulence_pathline/results/{agent_mode}/mhd-turbulence_pathline.py".

(Optional, but must save if use VTK) Save the cxx code script as "mhd-turbulence_pathline/results/{agent_mode}/mhd-turbulence_pathline.cxx"

Do not save any other files, and always save the visualization image.
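The time handling in this task is easier to follow with the numbers written out. This sketch assumes the five files are assigned time values 0 to 4 by index. That is an assumption, since the report does not state the source time values, but it is consistent with the termination time of 80 after scaling by 20. The helper names are hypothetical.

```python
def scaled_times(n_steps, scale):
    """Time values after a temporal scale, assuming source times 0..n_steps-1."""
    return [i * scale for i in range(n_steps)]


def resample(times, interval):
    """Evenly spaced sample times covering [times[0], times[-1]]."""
    start, end = times[0], times[-1]
    count = int(round((end - start) / interval)) + 1
    return [start + k * interval for k in range(count)]


times = scaled_times(5, 20)   # scale=20 applied to 5 timesteps
dense = resample(times, 0.5)  # interpolation interval 0.5
```

Under this assumption the last scaled time equals the requested termination time, and the interpolator yields 161 sub-timesteps for the particle tracer.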

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

0/40

Output Generation

5/5

Efficiency

8/10

Completed in 97.34 seconds (good)

Input Tokens

6,675

Output Tokens

7,201

Total Tokens

13,876

Total Cost

$0.1280

📝 mhd-turbulence_pathribbon

❌ FAILED 0/45 (0.0%)

📋 Task Description

Load the MHD turbulence velocity field time series "mhd-turbulence_pathribbon/data/mhd-turbulence_pathribbon_{timestep}.vti", where "timestep" in {0000, 0010, 0020, 0030, 0040} (5 timesteps, VTI format, 128x128x128 grid each).

Compute true pathlines by tracking particles through the time-varying velocity field using the ParticlePath filter. Apply TemporalShiftScale (scale=20) and TemporalInterpolator (interval=0.1) for dense, smooth trajectories.

Seed 26 points along a line on the z-axis at x=64, y=64 (from z=20 to z=108). Use static seeds with termination time 80.

Create ribbon surfaces from the pathlines using the Ribbon filter with width 1.5 and a fixed default normal to prevent twisting. Apply Smooth filter (500 iterations) and generate surface normals for smooth shading.

Set surface opacity to 0.85. Color by velocity magnitude using the 'Cool to Warm' colormap (range 0.1-0.8). Add specular highlights (0.5).

Add a color bar for velocity magnitude. Use a white background. Set an isometric camera view. Render at 1024x1024.

Set the viewpoint parameters as: [200.0, 200.0, 200.0] to position; [63.5, 63.5, 63.5] to focal point; [0.0, 0.0, 1.0] to camera up direction.

Save the visualization image as "mhd-turbulence_pathribbon/results/{agent_mode}/mhd-turbulence_pathribbon.png".

(Optional, but must save if use paraview) Save the paraview state as "mhd-turbulence_pathribbon/results/{agent_mode}/mhd-turbulence_pathribbon.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "mhd-turbulence_pathribbon/results/{agent_mode}/mhd-turbulence_pathribbon.py".

(Optional, but must save if use VTK) Save the cxx code script as "mhd-turbulence_pathribbon/results/{agent_mode}/mhd-turbulence_pathribbon.cxx"

Do not save any other files, and always save the visualization image.

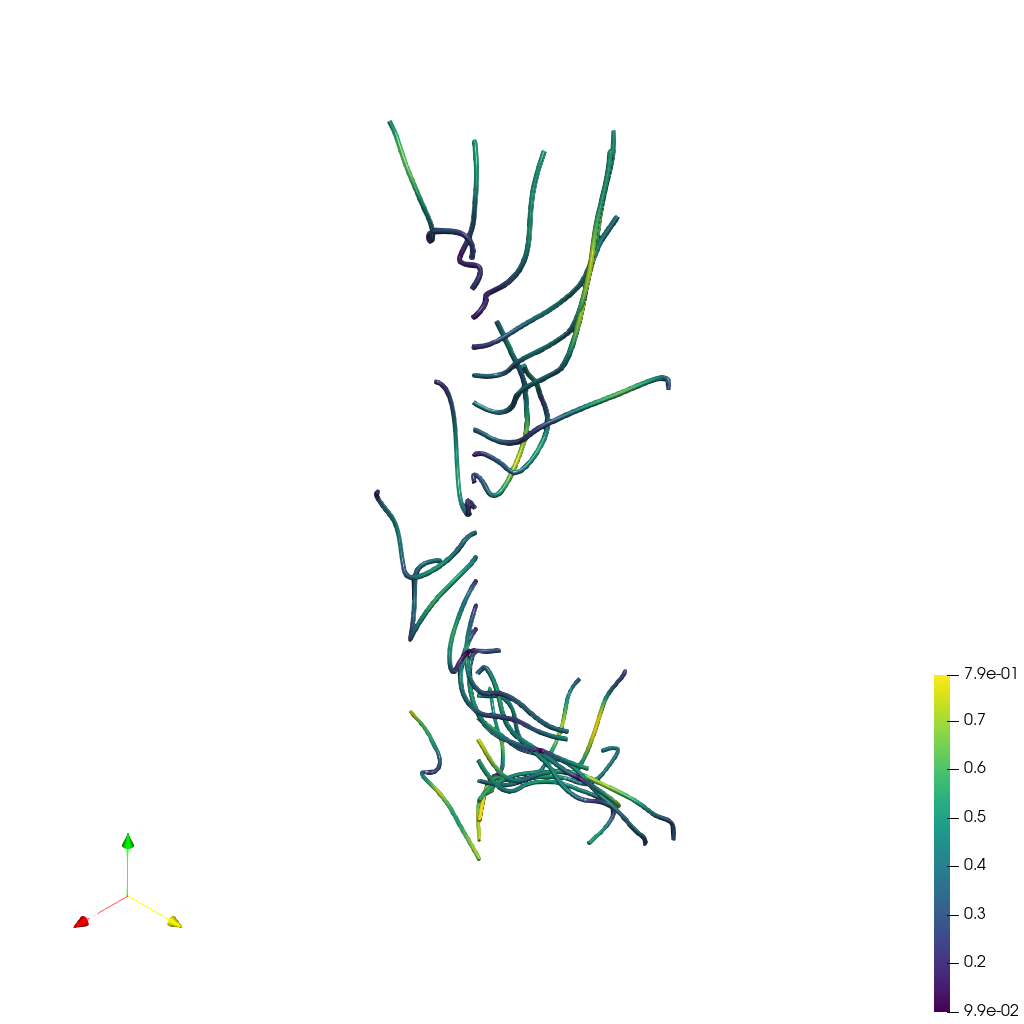

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

0/30

Output Generation

5/5

Efficiency

8/10

Completed in 92.13 seconds (good)

Input Tokens

6,663

Output Tokens

7,097

Total Tokens

13,760

Total Cost

$0.1264

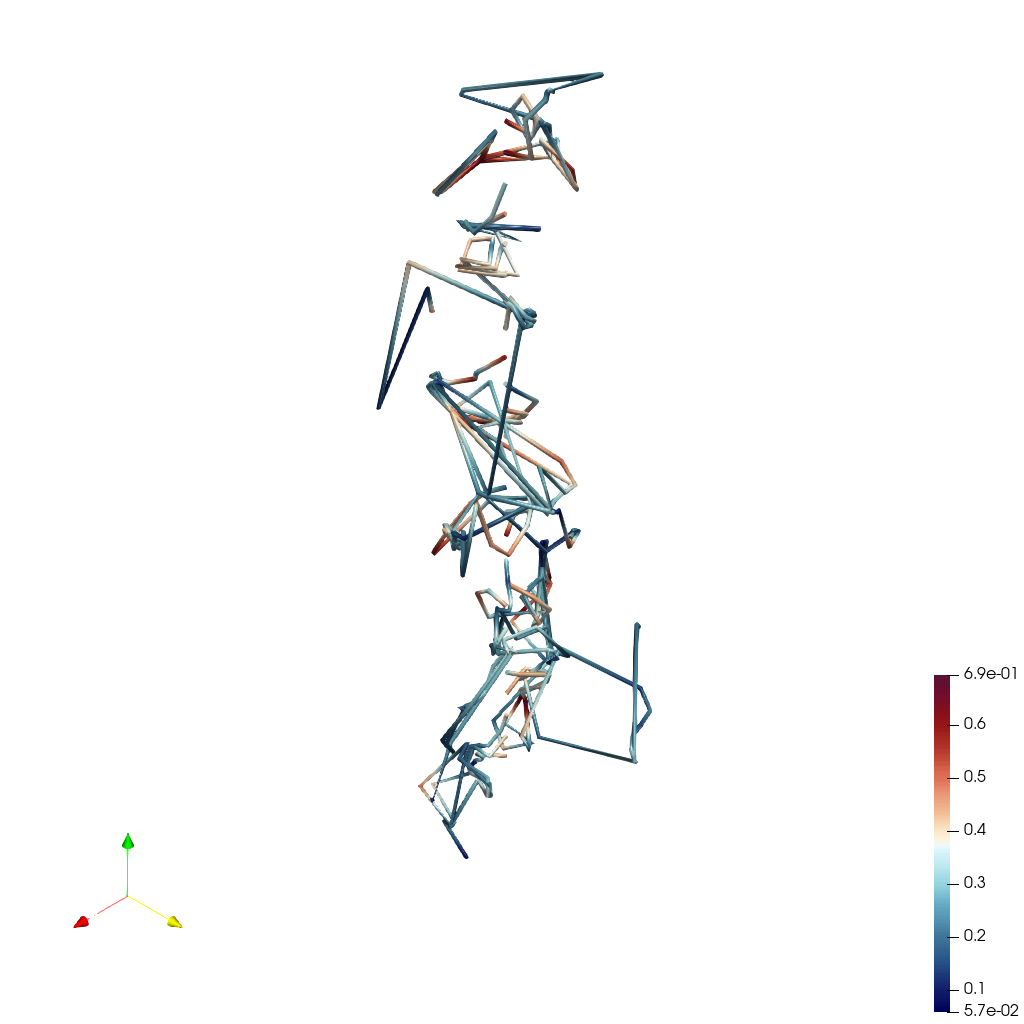

📝 mhd-turbulence_streamline

❌ FAILED 0/55 (0.0%)

📋 Task Description

Load the MHD turbulence velocity field dataset "mhd-turbulence_streamline/data/mhd-turbulence_streamline.vti" (VTI format, 128x128x128 grid).

Generate 3D streamlines seeded from a line source along the z-axis at x=64, y=64 (from z=0 to z=127), with 50 seed points.

Color the streamlines by velocity magnitude using the 'Turbo' colormap. Set streamline tube radius to 0.3 using the Tube filter.

Add a color bar labeled 'Velocity Magnitude'. Use a white background. Set an isometric camera view. Render at 1024x1024.

Set the viewpoint parameters as: [200.0, 200.0, 200.0] to position; [63.5, 63.5, 63.5] to focal point; [0.0, 0.0, 1.0] to camera up direction.

Save the visualization image as "mhd-turbulence_streamline/results/{agent_mode}/mhd-turbulence_streamline.png".

(Optional, but must save if use paraview) Save the paraview state as "mhd-turbulence_streamline/results/{agent_mode}/mhd-turbulence_streamline.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "mhd-turbulence_streamline/results/{agent_mode}/mhd-turbulence_streamline.py".

(Optional, but must save if use VTK) Save the cxx code script as "mhd-turbulence_streamline/results/{agent_mode}/mhd-turbulence_streamline.cxx"

Do not save any other files, and always save the visualization image.

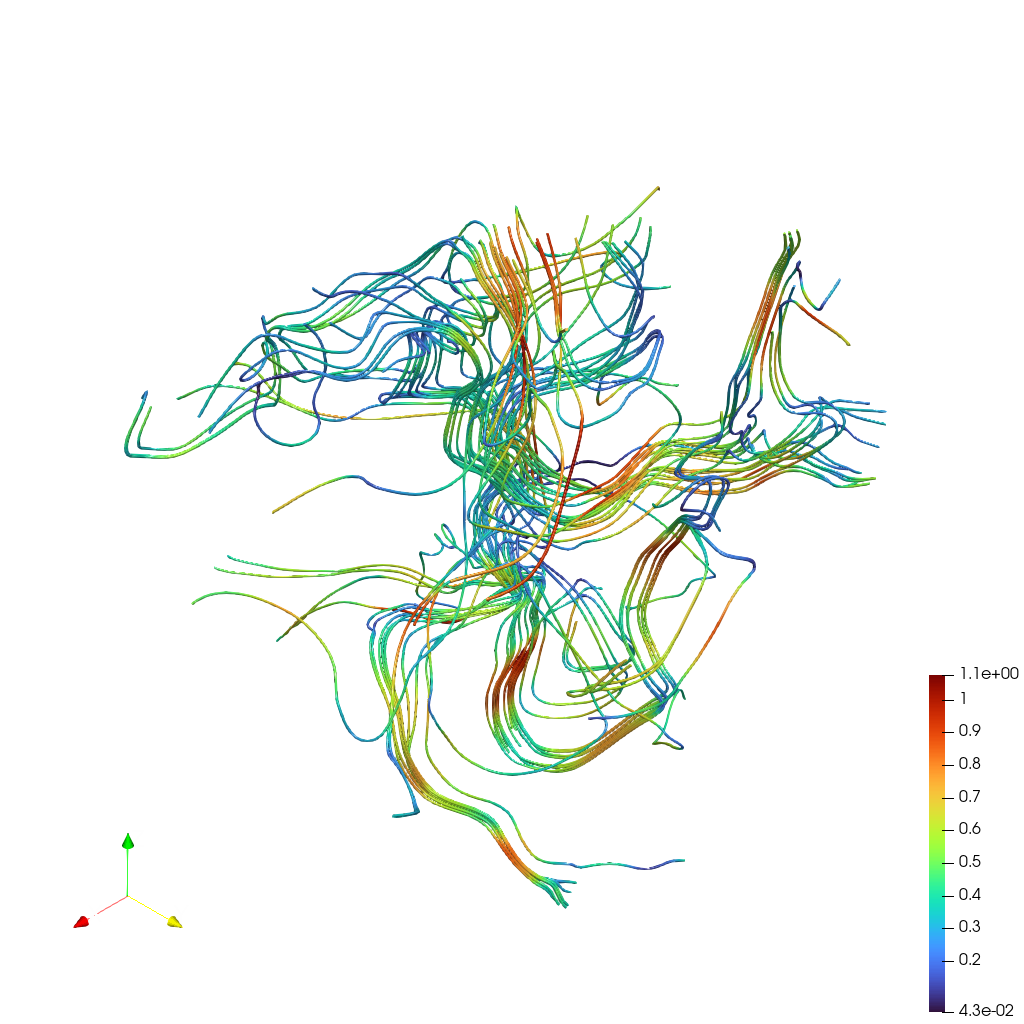

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

3/40

Output Generation

5/5

Efficiency

10/10

Completed in 40.71 seconds (excellent)

PSNR

16.91 dB

SSIM

0.8986

LPIPS

0.1935

Input Tokens

1,854

Output Tokens

2,651

Total Tokens

4,505

Total Cost

$0.0453

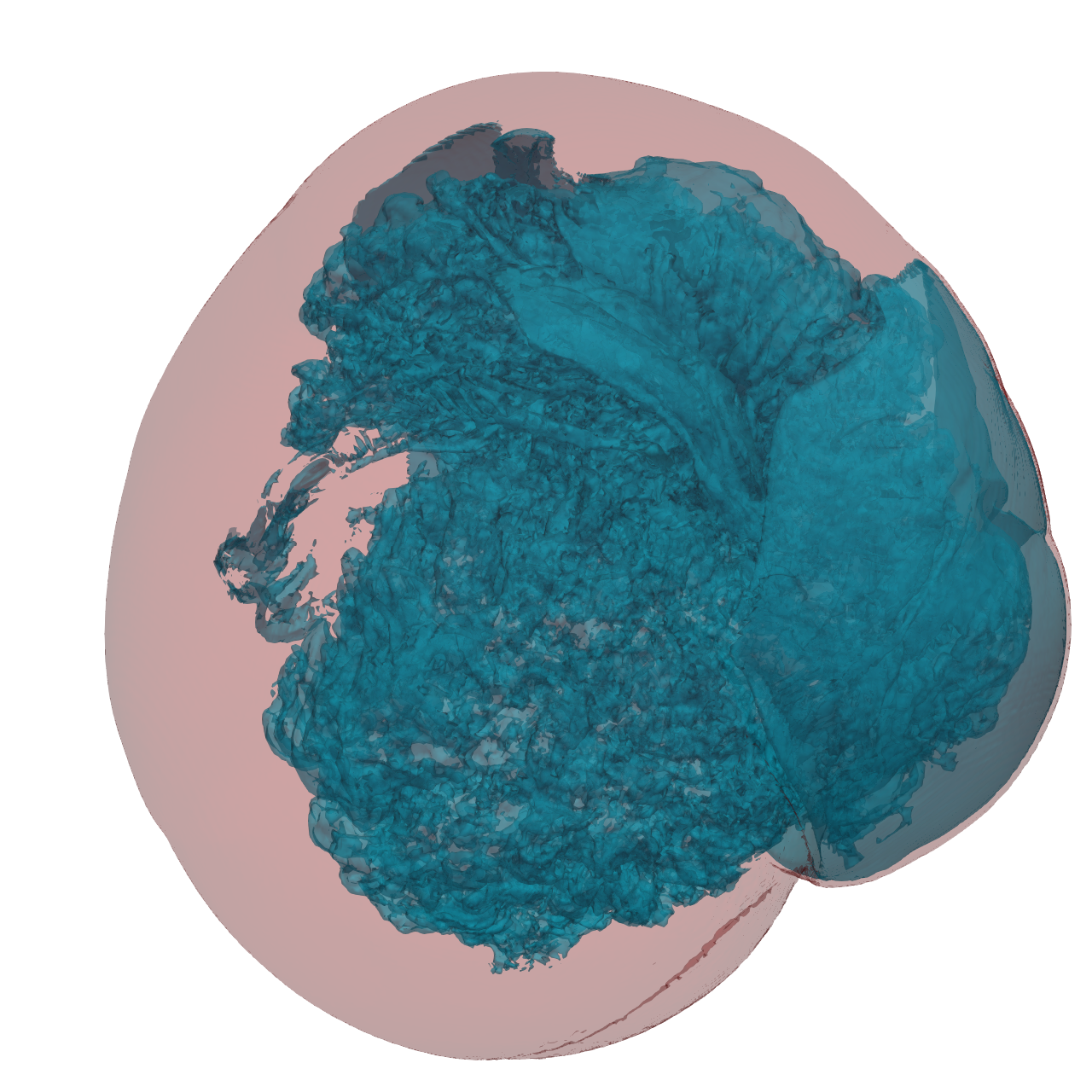

📝 miranda

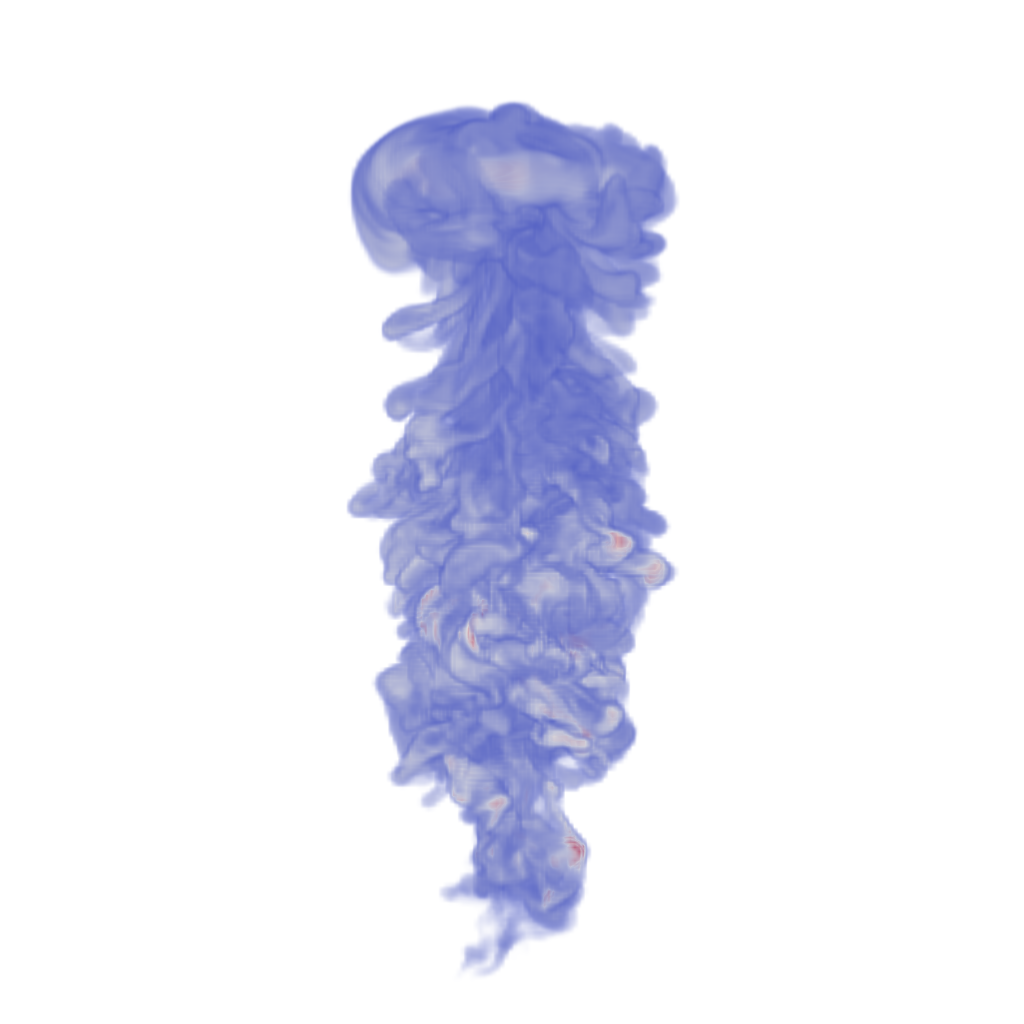

❌ FAILED 0/45 (0.0%)

📋 Task Description

Task:

Load the Rayleigh-Taylor Instability dataset from "miranda/data/miranda_256x256x256_float32.vtk".

Generate a visualization image of the Rayleigh-Taylor Instability dataset, a time step of a density field in a simulation of the mixing transition in Rayleigh-Taylor instability, with the following visualization settings:

1) Create volume rendering

2) Set the opacity transfer function as a ramp function from value 0 to 1 of the volumetric data, assigning opacity 0 to value 0 and assigning opacity 1 to value 1.

3) Set the color transfer function following the 7 rainbow colors and assign a red color [1.0, 0.0, 0.0] to the highest value, a purple color [0.5, 0.0, 1.0] to the lowest value.

4) Set the viewpoint parameters as: [650, 650, 650] to position; [128, 128, 128] to focal point; [1, 0, 0] to camera up direction

5) Volume rendering ray casting sample distance is 0.1

6) White background

7) Visualization image resolution is 1024x1024

8) Don't show color/scalar bar or coordinate axes.

Save the visualization image as "miranda/results/{agent_mode}/miranda.png".

(Optional, but must save if use paraview) Save the paraview state as "miranda/results/{agent_mode}/miranda.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "miranda/results/{agent_mode}/miranda.py".

(Optional, but must save if use VTK) Save the cxx code script as "miranda/results/{agent_mode}/miranda.cxx"

Do not save any other files, and always save the visualization image.
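Settings 2) and 3) amount to two lists of control points. The sketch below builds them as flat lists in the layout ParaView transfer functions commonly use (`[x, r, g, b, ...]` for colors and `[x, opacity, midpoint, sharpness, ...]` for opacity). Only the purple and red endpoints come from the task. The five intermediate rainbow colors and the even spacing are assumptions, and the helper names are hypothetical.

```python
# Seven rainbow colors from lowest to highest value.
RAINBOW = [
    (0.5, 0.0, 1.0),    # purple (given: lowest value)
    (0.29, 0.0, 0.51),  # indigo (assumed)
    (0.0, 0.0, 1.0),    # blue   (assumed)
    (0.0, 1.0, 0.0),    # green  (assumed)
    (1.0, 1.0, 0.0),    # yellow (assumed)
    (1.0, 0.5, 0.0),    # orange (assumed)
    (1.0, 0.0, 0.0),    # red    (given: highest value)
]


def rgb_points(colors, vmin, vmax):
    """Flat [x, r, g, b, ...] list with colors evenly spaced on [vmin, vmax]."""
    n = len(colors)
    flat = []
    for i, (r, g, b) in enumerate(colors):
        x = vmin + (vmax - vmin) * i / (n - 1)
        flat += [x, r, g, b]
    return flat


def opacity_ramp(vmin, vmax):
    """Flat [x, opacity, midpoint, sharpness, ...] list for a linear ramp."""
    return [vmin, 0.0, 0.5, 0.0, vmax, 1.0, 0.5, 0.0]


rgb = rgb_points(RAINBOW, 0.0, 1.0)
opacity = opacity_ramp(0.0, 1.0)
```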

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

0/30

Output Generation

5/5

Efficiency

10/10

Completed in 42.41 seconds (excellent)

Input Tokens

1,763

Output Tokens

2,207

Total Tokens

3,970

Total Cost

$0.0384

📝 ml-dvr

❌ FAILED 0/55 (0.0%)

📋 Task Description

I would like to use ParaView to visualize a dataset.

Read in the file named "ml-dvr/data/ml-dvr.vtk".

Generate a volume rendering using the default transfer function.

Rotate the view to an isometric direction.

Save a screenshot of the result in the filename "ml-dvr/results/{agent_mode}/ml-dvr.png".

The rendered view and saved screenshot should be 1920 x 1080 pixels.

(Optional, but must save if use paraview) Save the paraview state as "ml-dvr/results/{agent_mode}/ml-dvr.pvsm".

(Optional, but must save if use python script) Save the python script as "ml-dvr/results/{agent_mode}/ml-dvr.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

1/40

Output Generation

5/5

Efficiency

10/10

Completed in 23.25 seconds (excellent)

Input Tokens

413

Output Tokens

758

Total Tokens

1,171

Total Cost

$0.0126

📝 ml-iso

55/55 (100.0%)

📋 Task Description

Read in the file named "ml-iso/data/ml-iso.vtk", and generate an isosurface of the variable var0 at value 0.5.

Use a white background color. Save a screenshot of the result, size 1920 x 1080 pixels, in "ml-iso/results/{agent_mode}/ml-iso.png".

(Optional, but must save if use paraview) Save the paraview state as "ml-iso/results/{agent_mode}/ml-iso.pvsm".

(Optional, but must save if use python script) Save the python script as "ml-iso/results/{agent_mode}/ml-iso.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

40/40

Output Generation

5/5

Efficiency

10/10

Completed in 30.46 seconds (excellent)

Input Tokens

385

Output Tokens

1,546

Total Tokens

1,931

Total Cost

$0.0243

📝 ml-slice-iso

29/55 (52.7%)

📋 Task Description

Please generate a ParaView Python script for the following operations.

Read in the file named "ml-slice-iso/data/ml-slice-iso.vtk".

Slice the volume in a plane parallel to the y-z plane at x=0.

Take a contour through the slice at the value 0.5.

Color the contour red. Use a white background.

Rotate the view to look at the +x direction.

Save a screenshot of the result in the filename "ml-slice-iso/results/{agent_mode}/ml-slice-iso.png".

The rendered view and saved screenshot should be 1920 x 1080 pixels.

(Optional, but must save if use paraview) Save the paraview state as "ml-slice-iso/results/{agent_mode}/ml-slice-iso.pvsm".

(Optional, but must save if use python script) Save the python script as "ml-slice-iso/results/{agent_mode}/ml-slice-iso.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

14/40

Output Generation

5/5

Efficiency

10/10

Completed in 35.74 seconds (excellent)

Input Tokens

459

Output Tokens

2,373

Total Tokens

2,832

Total Cost

$0.0370

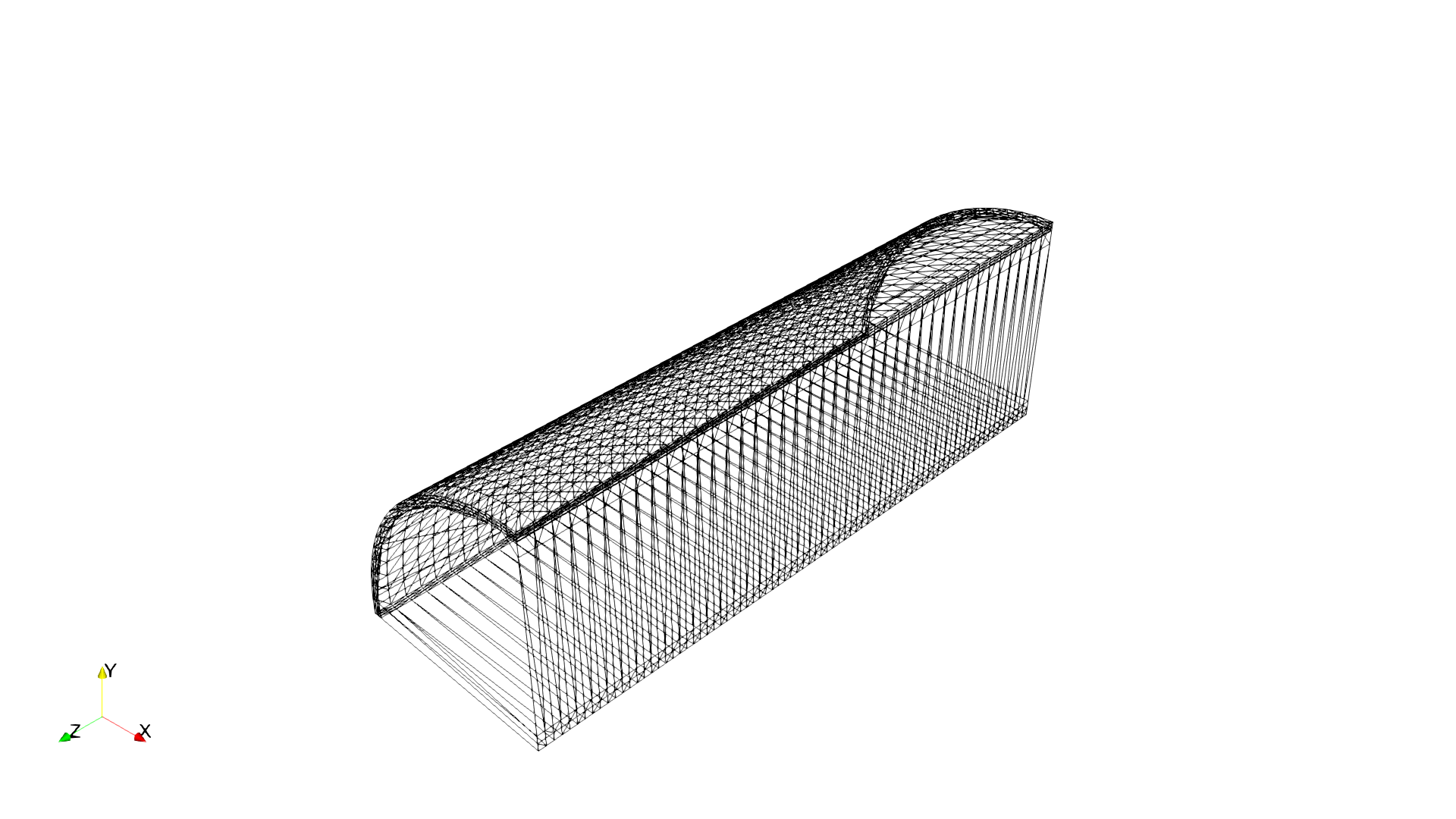

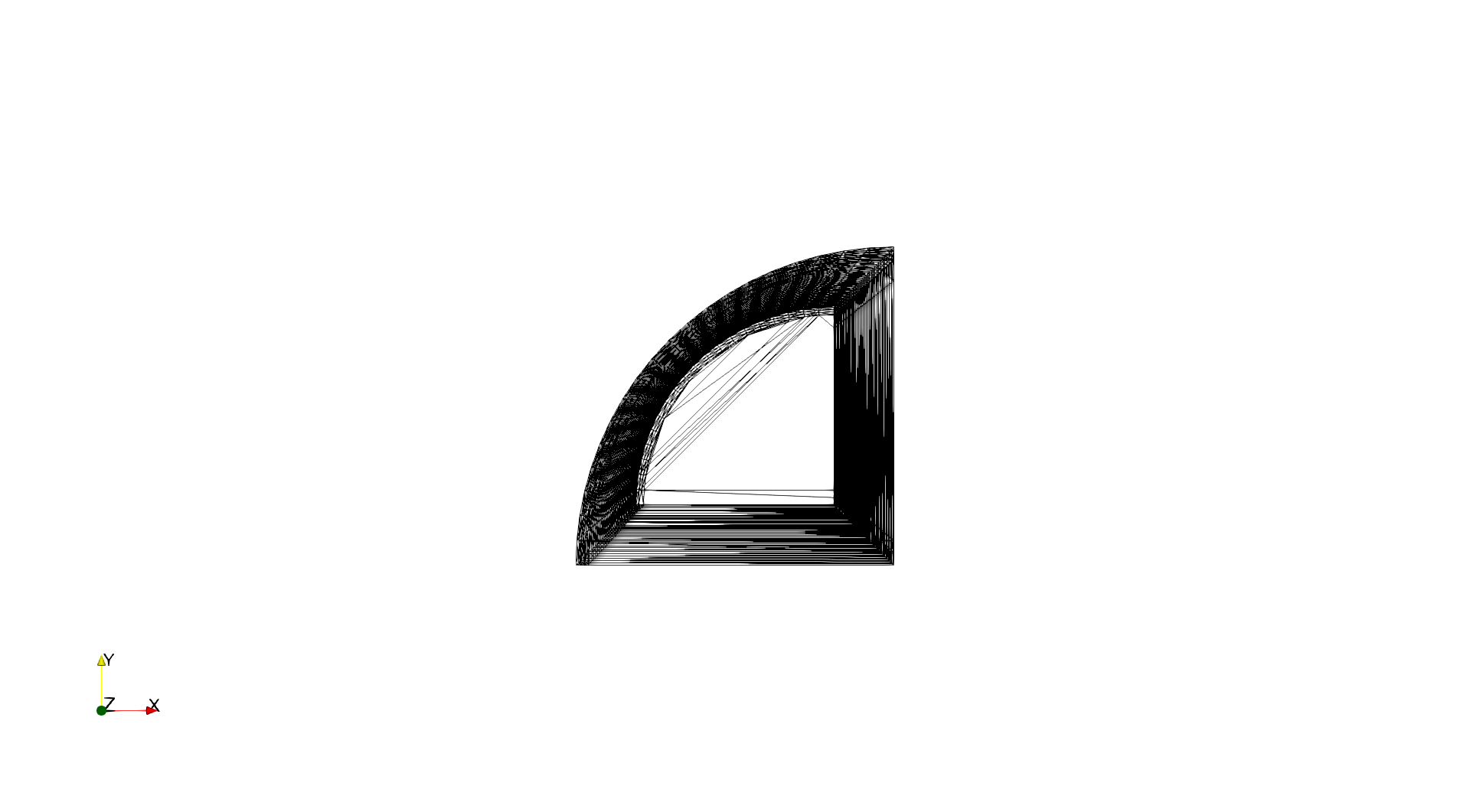

📝 points-surf-clip

39/55 (70.9%)

📋 Task Description

I would like to use ParaView to visualize a dataset.

Read in the file named "points-surf-clip/data/points-surf-clip.ex2".

Generate a 3D Delaunay triangulation of the dataset.

Clip the data with a y-z plane at x=0, keeping the -x half of the data and removing the +x half.

Render the image as a wireframe.

Save a screenshot of the result in the filename "points-surf-clip/results/{agent_mode}/points-surf-clip.png".

The rendered view and saved screenshot should be 1920 x 1080 pixels. Use a white background color.

(Optional, but must save if use paraview) Save the paraview state as "points-surf-clip/results/{agent_mode}/points-surf-clip.pvsm".

(Optional, but must save if use python script) Save the python script as "points-surf-clip/results/{agent_mode}/points-surf-clip.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

24/40

Output Generation

5/5

Efficiency

10/10

Completed in 22.22 seconds (excellent)

Input Tokens

462

Output Tokens

1,526

Total Tokens

1,988

Total Cost

$0.0243

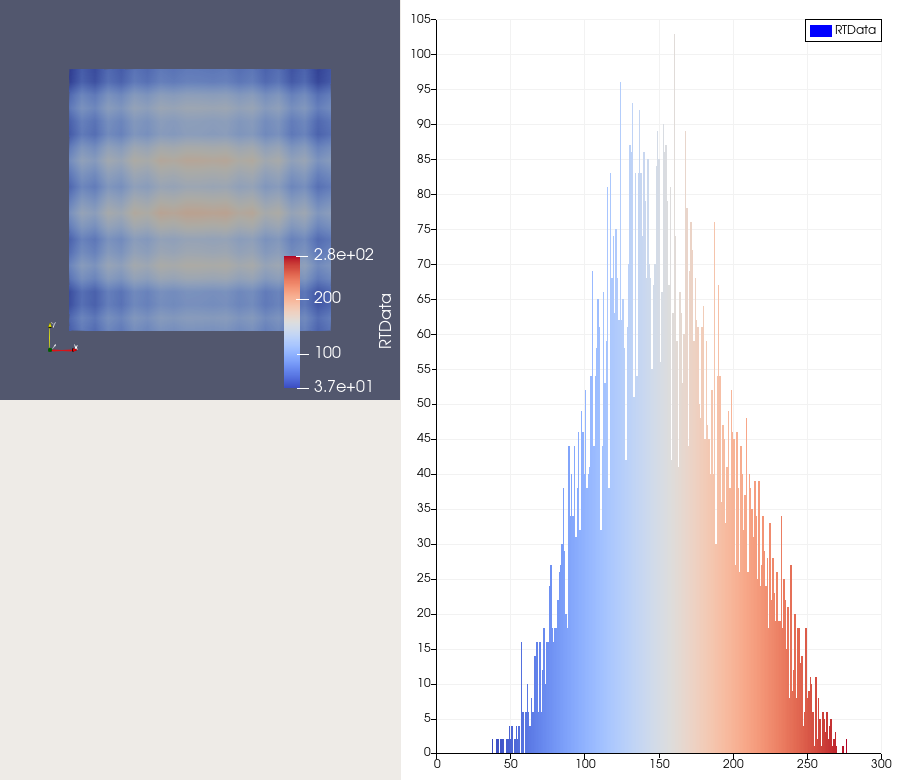

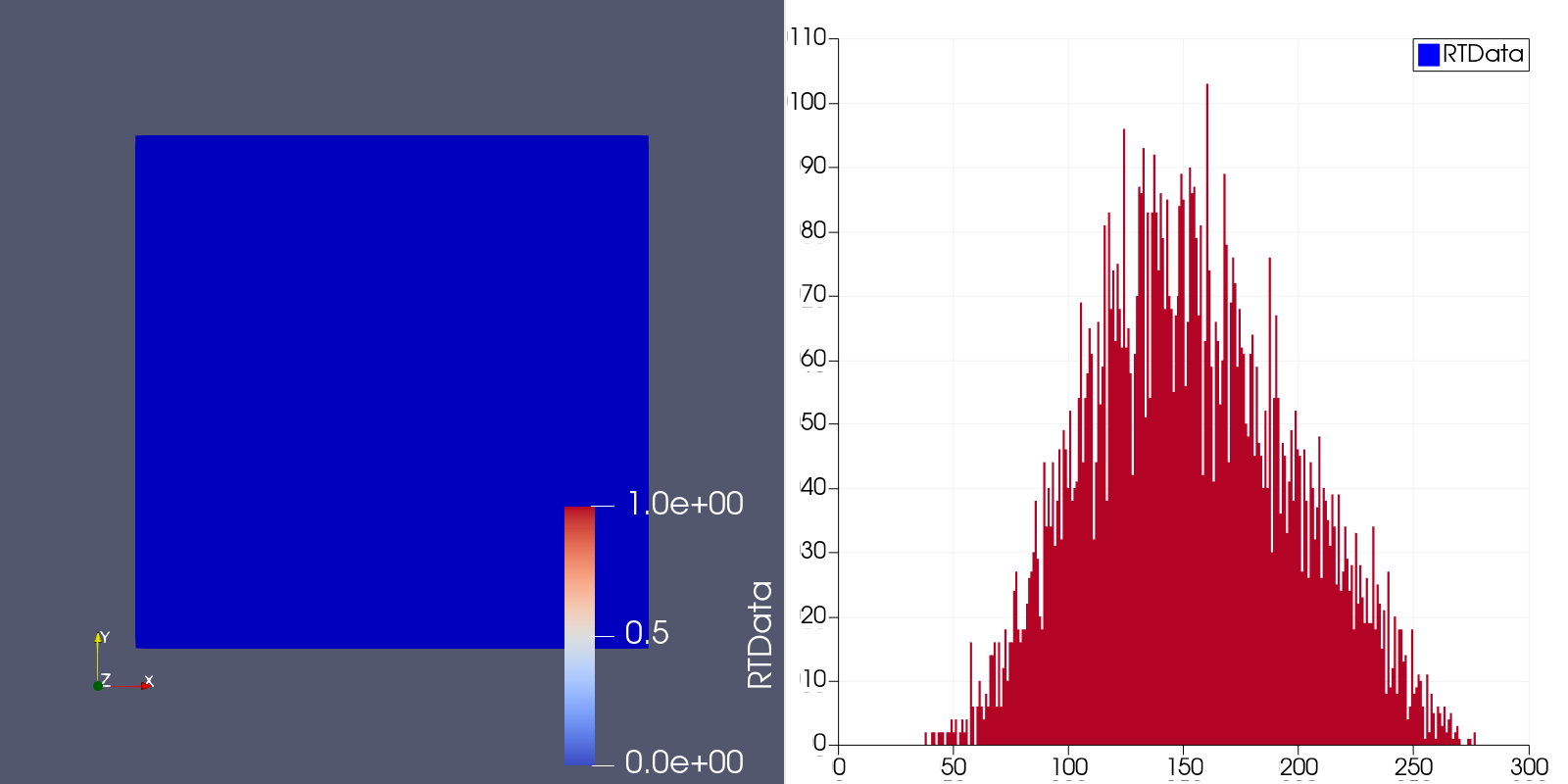

📝 render-histogram

40/55 (72.7%)

📋 Task Description

Create a wavelet object and render it as a surface colored by RTDATA with a visible color bar.

Rescale the colors to the data range and use the 'Cool to Warm' color map.

Next, split the view horizontally to the right and create a histogram view from the wavelet RTDATA.

Apply the same 'Cool to Warm' color map to the histogram.

Save a screenshot of both views (wavelet rendering on the left and histogram on the right) in the file "render-histogram/results/{agent_mode}/render-histogram.png".

(Optional, but must save if use paraview) Save the paraview state as "render-histogram/results/{agent_mode}/render-histogram.pvsm".

(Optional, but must save if use python script) Save the python script as "render-histogram/results/{agent_mode}/render-histogram.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

25/40

Output Generation

5/5

Efficiency

10/10

Completed in 27.52 seconds (excellent)

Input Tokens

1,301

Output Tokens

1,748

Total Tokens

3,049

Total Cost

$0.0301

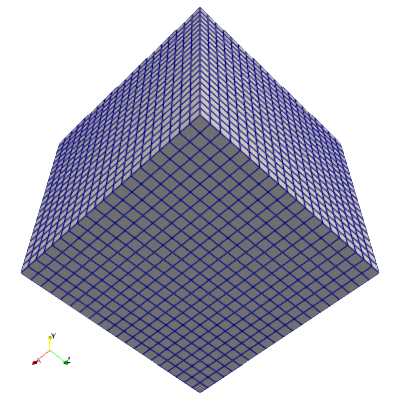

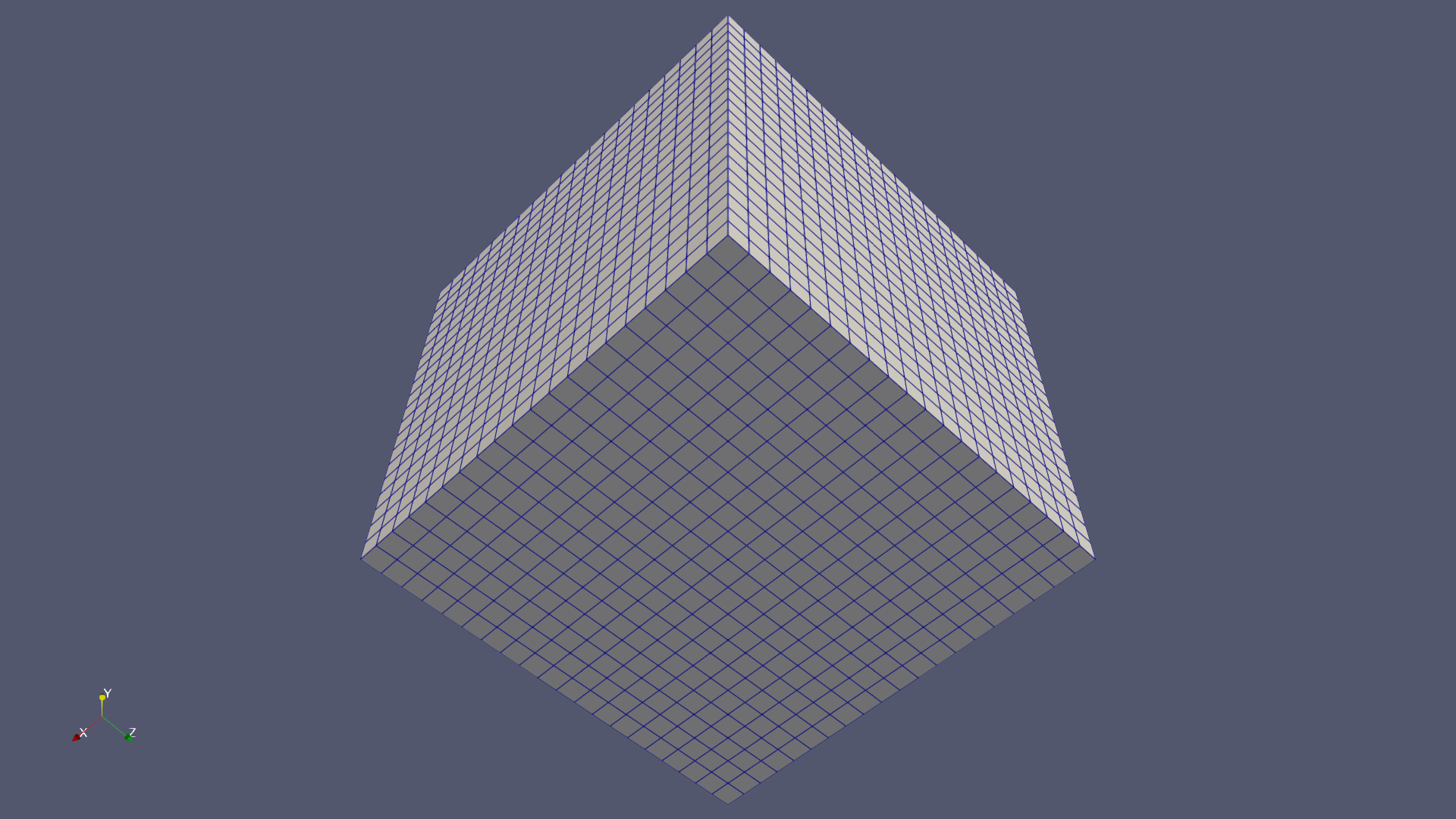

📝 reset-camera-direction

53/55 (96.4%)

📋 Task Description

Create a Wavelet object, set its representation to "Surface with Edges", and set the camera direction to [0.5, 1, 0.5].

Save a screenshot to the file "reset-camera-direction/results/{agent_mode}/reset-camera-direction.png".

(Optional, but must save if use paraview) Save the paraview state as "reset-camera-direction/results/{agent_mode}/reset-camera-direction.pvsm".

(Optional, but must save if use python script) Save the python script as "reset-camera-direction/results/{agent_mode}/reset-camera-direction.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

38/40

Output Generation

5/5

Efficiency

10/10

Completed in 15.09 seconds (excellent)

Input Tokens

374

Output Tokens

1,023

Total Tokens

1,397

Total Cost

$0.0165

📝 richtmyer

❌ FAILED 0/45 (0.0%)

📋 Task Description

Task:

Load the Richtmyer dataset from "richtmyer/data/richtmyer_256x256x240_float32.vtk".

Generate a visualization image of the Richtmyer dataset, Entropy field (timestep 160) of Richtmyer-Meshkov instability simulation, with the following visualization settings:

1) Create volume rendering

2) Set the opacity transfer function as a ramp function from value 0.05 to 1 of the volumetric data, assigning opacity 0 to value less than 0.05 and assigning opacity 1 to value 1.

3) Set the color transfer function following the 7 rainbow colors and assign a red color [1.0, 0.0, 0.0] to the highest value, a purple color [0.5, 0.0, 1.0] to the lowest value.

4) Visualization image resolution is 1024x1024

5) Set the viewpoint parameters as: [420, 420, -550] to position; [128, 128, 150] to focal point; [-1, -1, 1] to camera up direction

6) Turn on the shade and set the ambient, diffuse and specular as 1.0

7) White background. Volume rendering ray casting sample distance is 0.1

8) Don't show color/scalar bar or coordinate axes.

Save the visualization image as "richtmyer/results/{agent_mode}/richtmyer.png".

(Optional, but must save if use paraview) Save the paraview state as "richtmyer/results/{agent_mode}/richtmyer.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "richtmyer/results/{agent_mode}/richtmyer.py".

(Optional, but must save if use VTK) Save the cxx code script as "richtmyer/results/{agent_mode}/richtmyer.cxx"

Do not save any other files, and always save the visualization image.
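The opacity ramp in setting 2) can be written as a small piecewise function. This is an illustrative sketch of the mapping the task describes, not the renderer's own implementation, and `ramp_opacity` is a hypothetical name.

```python
def ramp_opacity(value, start=0.05, end=1.0):
    """Opacity 0 at or below `start`, 1 at or above `end`, linear in between."""
    if value <= start:
        return 0.0
    if value >= end:
        return 1.0
    return (value - start) / (end - start)


samples = [ramp_opacity(v) for v in (0.0, 0.05, 0.525, 1.0)]
```

The value halfway between 0.05 and 1 (0.525) maps to an opacity of about 0.5.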

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

0/30

Output Generation

5/5

Efficiency

10/10

Completed in 42.10 seconds (excellent)

Input Tokens

1,806

Output Tokens

2,256

Total Tokens

4,062

Total Cost

$0.0393

📝 rotstrat

❌ FAILED 0/45 (0.0%)

📋 Task Description

Task:

Load the rotstrat dataset from "rotstrat/data/rotstrat_256x256x256_float32.vtk".

Generate a visualization image of the Rotstrat dataset, temperature field of a direct numerical simulation of rotating stratified turbulence, with the following visualization settings:

1) Create volume rendering

2) Set the opacity transfer function as a step function jumping from 0 to 1 at value 0.12

3) Set the color transfer function to assign a warm red color [0.71, 0.02, 0.15] to the highest value, a cool color [0.23, 0.29, 0.75] to the lowest value, and a grey color [0.87, 0.87, 0.87] to the midrange value

4) Set the viewpoint parameters as: [800, 128, 128] to position; [0, 128, 128] to focal point; [0, 1, 0] to camera up direction

5) Volume rendering ray casting sample distance is 0.1

6) White background

7) Visualization image resolution is 1024x1024

8) Don't show color/scalar bar or coordinate axes.

Save the visualization image as "rotstrat/results/{agent_mode}/rotstrat.png".

(Optional, but must save if use paraview) Save the paraview state as "rotstrat/results/{agent_mode}/rotstrat.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "rotstrat/results/{agent_mode}/rotstrat.py".

(Optional, but must save if use VTK) Save the cxx code script as "rotstrat/results/{agent_mode}/rotstrat.cxx"

Do not save any other files, and always save the visualization image.
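Settings 2) and 3) describe a step opacity and a three-point diverging color map. The sketch below evaluates both on a normalized value `t` in [0, 1]. It interpolates linearly in RGB, which is an assumption (a renderer may interpolate in a different color space). Treating a value of exactly 0.12 as opaque is also an assumption, and the function names are hypothetical.

```python
COOL = (0.23, 0.29, 0.75)  # lowest value
GREY = (0.87, 0.87, 0.87)  # midrange value
WARM = (0.71, 0.02, 0.15)  # highest value


def lerp(a, b, t):
    """Linear interpolation between two RGB triples."""
    return tuple(x + (y - x) * t for x, y in zip(a, b))


def diverging_color(t):
    """Color for normalized value t in [0, 1], clamped at both ends."""
    t = min(max(t, 0.0), 1.0)
    if t <= 0.5:
        return lerp(COOL, GREY, t / 0.5)
    return lerp(GREY, WARM, (t - 0.5) / 0.5)


def step_opacity(value, threshold=0.12):
    """Opacity jumps from 0 to 1 at the threshold (threshold itself is opaque)."""
    return 1.0 if value >= threshold else 0.0


mid_color = diverging_color(0.5)
```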

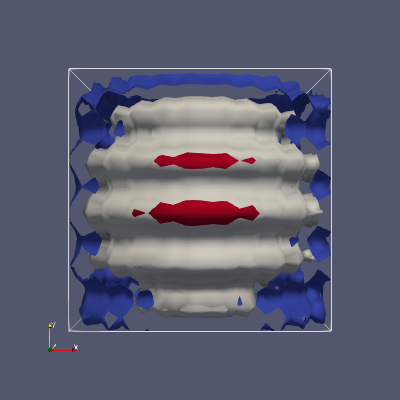

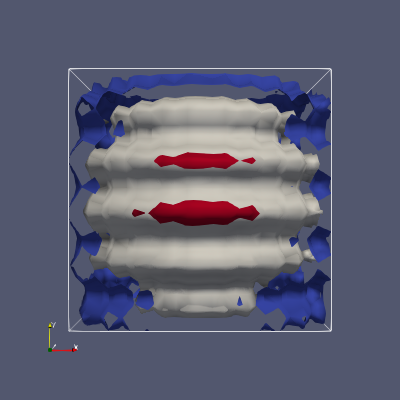

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

1/30

Output Generation

5/5

Efficiency

10/10

Completed in 23.44 seconds (excellent)

Input Tokens

641

Output Tokens

1,116

Total Tokens

1,757

Total Cost

$0.0187

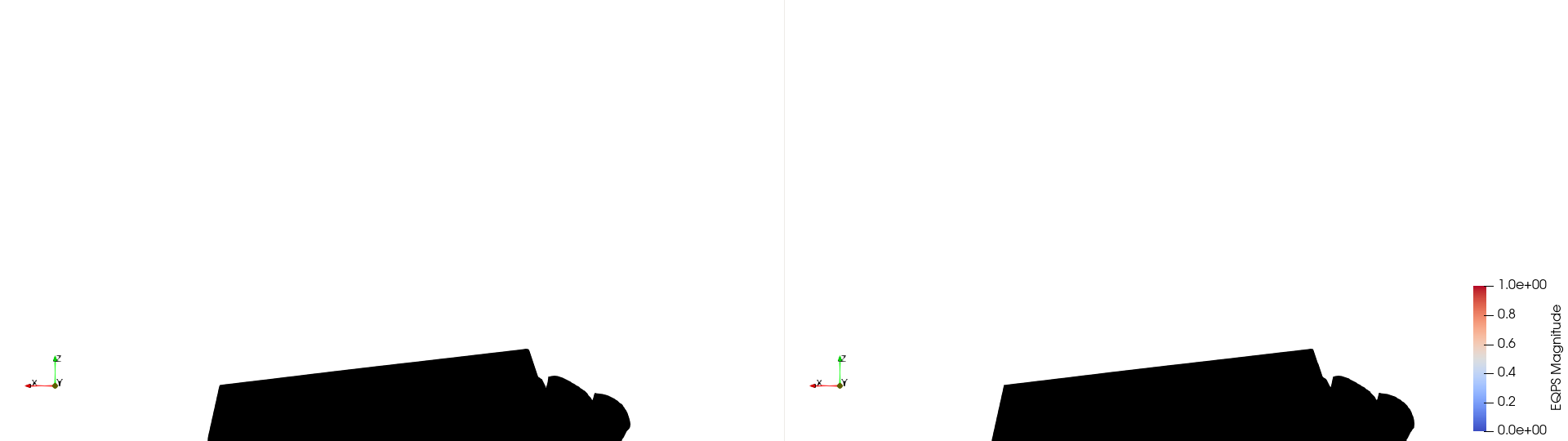

📝 rti-velocity_glyph

⚠️ LOW SCORE 27/55 (49.1%)

📋 Task Description

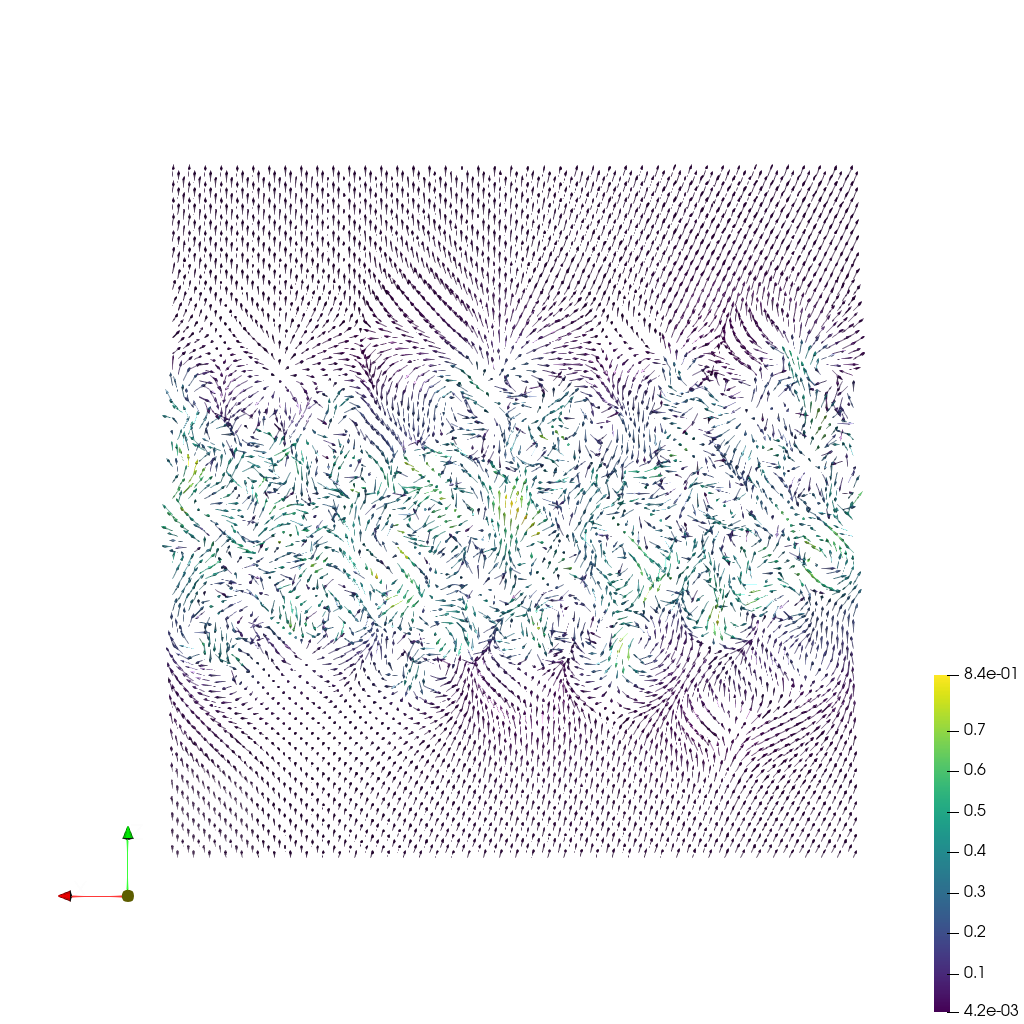

Load the Rayleigh-Taylor instability velocity field dataset from "rti-velocity_glyph/data/rti-velocity_glyph.vti" (VTI format, 128x128x128 grid).

Create a slice at y=64 through the volume.

Place arrow glyphs on the slice, oriented by the velocity vector. Use uniform arrow size (no magnitude scaling, scale factor 3.0).

Color the arrows by velocity magnitude using the 'Viridis (matplotlib)' colormap. Use a sampling stride of 3.

Add a color bar labeled 'Velocity Magnitude'.

Use a white background. Set the camera to view along the negative y-axis. Render at 1024x1024.

Set the viewpoint parameters as: [63.5, 250.0, 63.5] to position; [63.5, 64.0, 63.5] to focal point; [0.0, 0.0, 1.0] to camera up direction.

Save the visualization image as "rti-velocity_glyph/results/{agent_mode}/rti-velocity_glyph.png".

(Optional, but must save if use paraview) Save the paraview state as "rti-velocity_glyph/results/{agent_mode}/rti-velocity_glyph.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "rti-velocity_glyph/results/{agent_mode}/rti-velocity_glyph.py".

(Optional, but must save if use VTK) Save the cxx code script as "rti-velocity_glyph/results/{agent_mode}/rti-velocity_glyph.cxx"

Do not save any other files, and always save the visualization image.
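"A sampling stride of 3" can be read in two ways, and the two readings give very different arrow counts. The sketch below compares them, assuming the slice at y=64 contains 128 x 128 points. That assumption, and which reading the ground truth uses, is not stated in the report. Both helper names are hypothetical.

```python
def every_nth_point(n_points, stride):
    """Stride over flattened point IDs (one glyph for every stride-th point)."""
    return list(range(0, n_points, stride))


def per_axis_stride(nx, nz, stride):
    """Stride independently along each in-plane axis of the slice."""
    return [(i, k) for k in range(0, nz, stride) for i in range(0, nx, stride)]


flat_ids = every_nth_point(128 * 128, 3)
grid_ids = per_axis_stride(128, 128, 3)
counts = (len(flat_ids), len(grid_ids))
```

Striding over point IDs keeps roughly a third of the points, while striding per axis keeps roughly a ninth, so the two readings differ visibly in arrow density.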

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

12/40

Output Generation

5/5

Efficiency

10/10

Completed in 20.84 seconds (excellent)

PSNR

17.50 dB

SSIM

0.9124

LPIPS

0.0892

Input Tokens

639

Output Tokens

1,317

Total Tokens

1,956

Total Cost

$0.0217

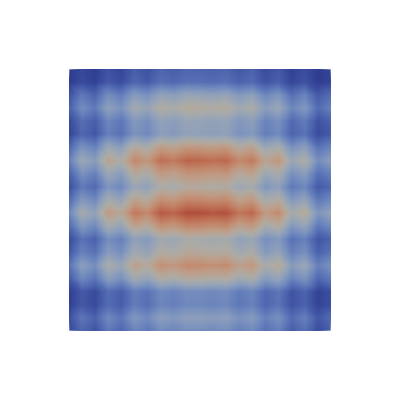

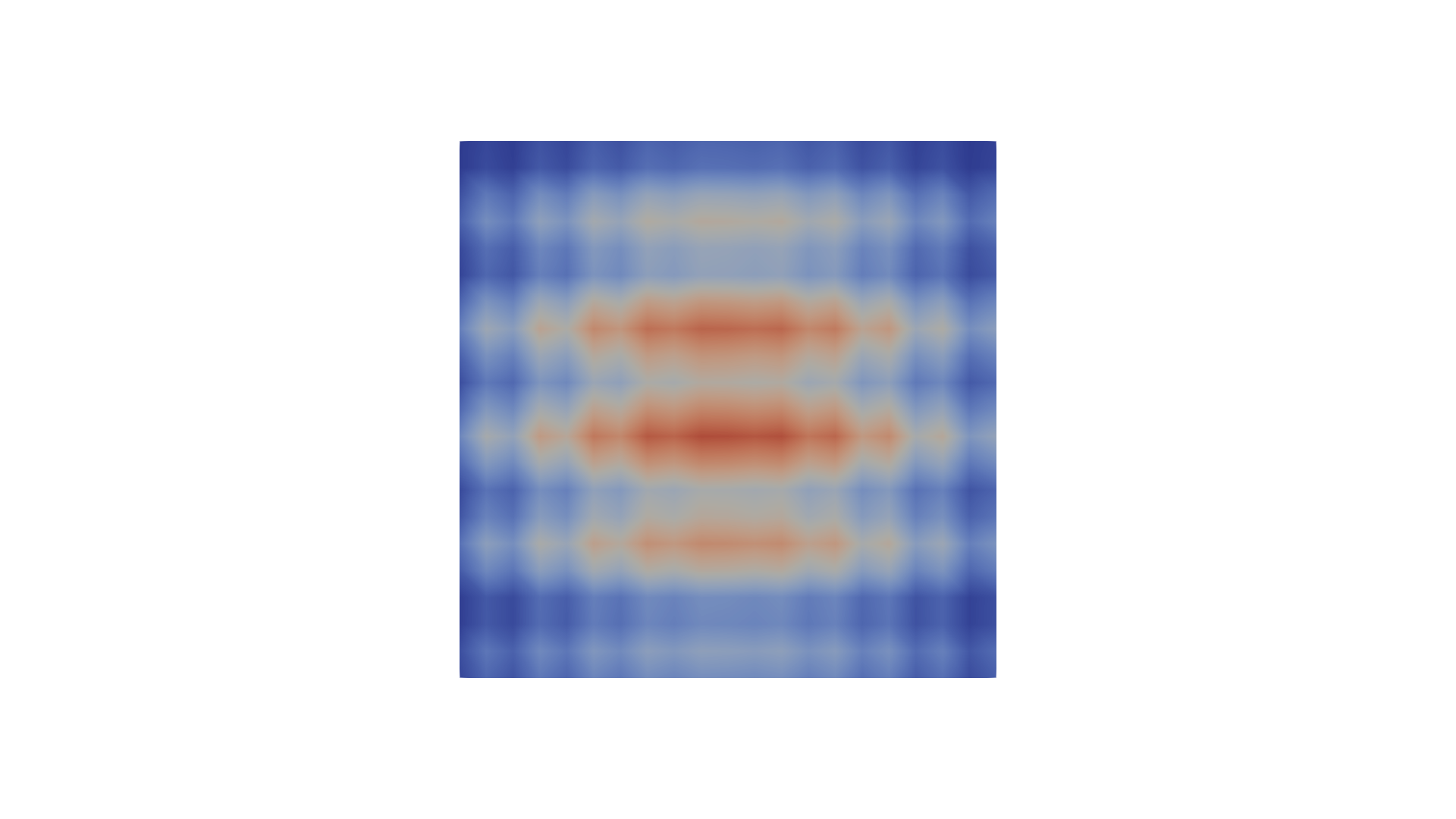

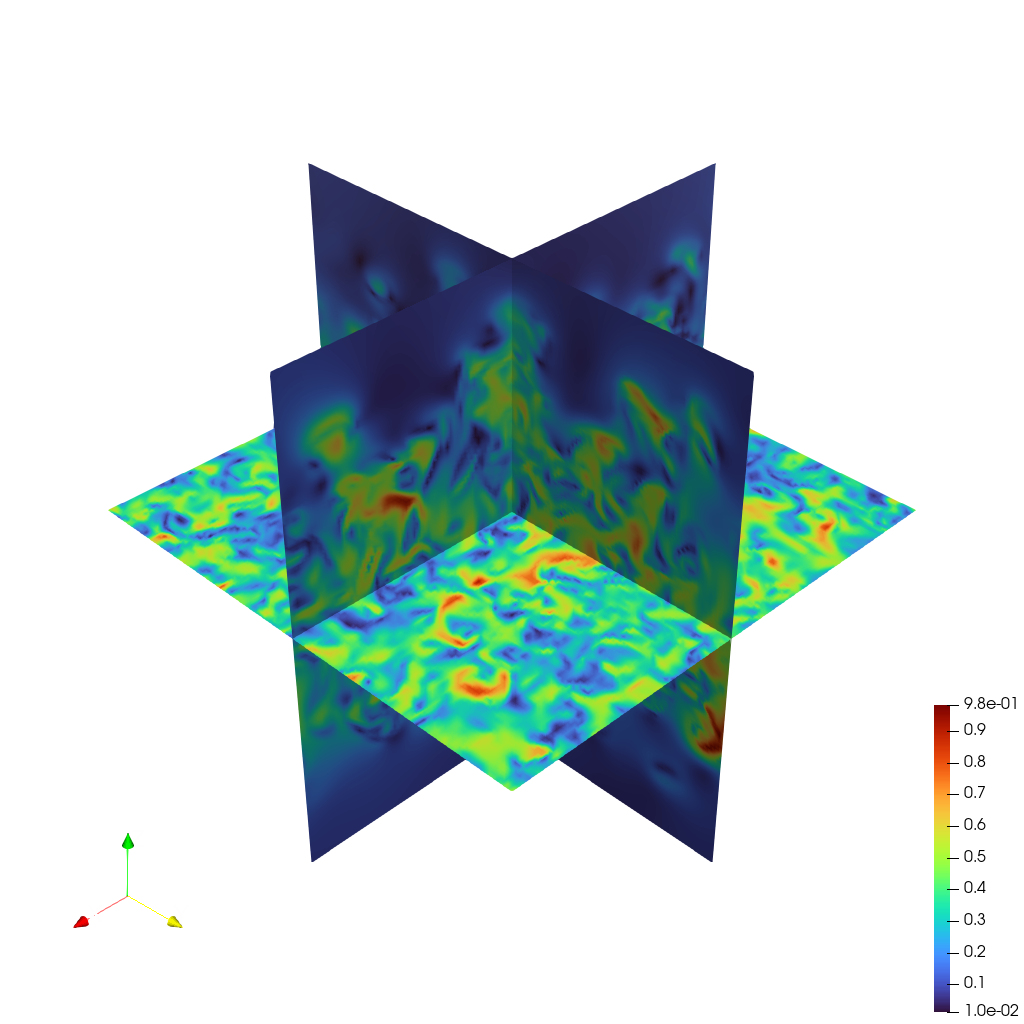

📝 rti-velocity_slices

26/45 (57.8%)

📋 Task Description

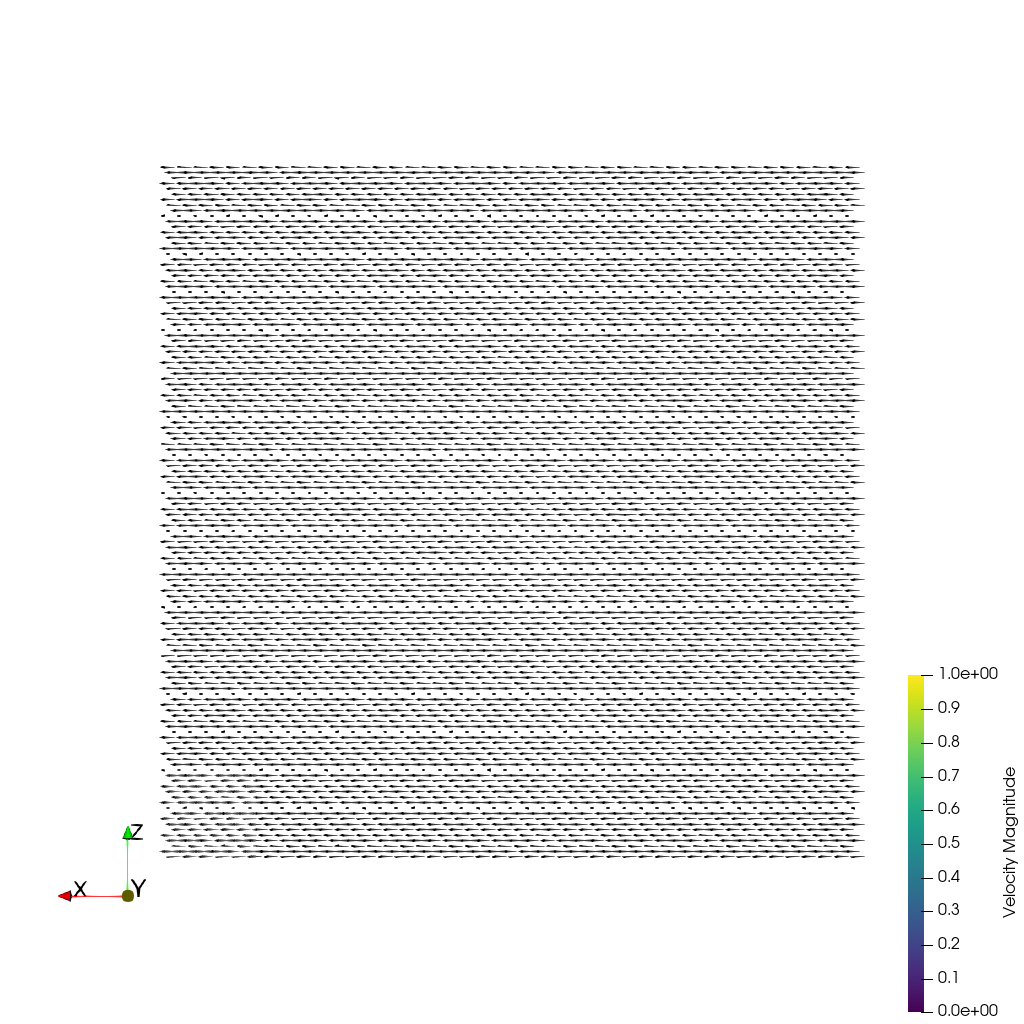

Load the Rayleigh-Taylor instability velocity field from "rti-velocity_slices/data/rti-velocity_slices.vti" (VTI format, 128x128x128).

Create three orthogonal slices: at x=64 (YZ-plane), y=64 (XZ-plane), and z=64 (XY-plane).

Color all three slices by velocity magnitude using the 'Turbo' colormap.

Add a color bar labeled 'Velocity Magnitude'.

Use a white background. Set an isometric camera view that shows all three slices. Render at 1024x1024.

Set the viewpoint parameters as: [200.0, 200.0, 200.0] to position; [63.5, 63.5, 63.5] to focal point; [0.0, 0.0, 1.0] to camera up direction.

Save the visualization image as "rti-velocity_slices/results/{agent_mode}/rti-velocity_slices.png".

(Optional, but must save if use paraview) Save the paraview state as "rti-velocity_slices/results/{agent_mode}/rti-velocity_slices.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "rti-velocity_slices/results/{agent_mode}/rti-velocity_slices.py".

(Optional, but must save if use VTK) Save the cxx code script as "rti-velocity_slices/results/{agent_mode}/rti-velocity_slices.cxx"

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

11/30

Output Generation

5/5

Efficiency

10/10

Completed in 22.08 seconds (excellent)

PSNR

11.75 dB

SSIM

0.8099

LPIPS

0.2148

Input Tokens

613

Output Tokens

1,378

Total Tokens

1,991

Total Cost

$0.0225

📝 rti-velocity_streakline

❌ FAILED 0/45 (0.0%)

📋 Task Description

Load the Rayleigh–Taylor instability velocity field time series from "rti-velocity_streakline/data/rti-velocity_streakline_{timestep}.nc", where "timestep" in {0030, 0031, 0032, 0033, 0034, 0035, 0036, 0037, 0038, 0039, 0040} (11 timesteps, NetCDF format, 128×128×128 grid each, with separate vx, vy, vz arrays).

Construct the time-varying velocity field u(x,t) by merging vx, vy, vz into a single vector field named "velocity", and compute the velocity magnitude "magnitude" = |velocity| for coloring.

Compute streaklines as a discrete approximation of continuous particle injection: continuously release particles from fixed seed points at every sub-timestep into the time-varying velocity field using the StreakLine filter. Apply TemporalShiftScale (scale=20) to extend particle travel time, and apply TemporalInterpolator with a sub-timestep interval of 0.25 (or smaller) to approximate continuous injection over time.

Seed 26 static points along a line on the z-axis at x=64, y=64 (from z=20 to z=108). Use StaticSeeds=True, ForceReinjectionEveryNSteps=1 (reinjection at every sub-timestep), and set TerminationTime=200.

Render the resulting streaklines as tubes with radius 0.3. Color the tubes by velocity magnitude ("magnitude") using the 'Cool to Warm (Extended)' colormap. Add a color bar for velocity magnitude.

Use a white background. Set an isometric camera view and render at 1024×1024.

Set the viewpoint parameters as: [200.0, 200.0, 200.0] to position; [63.5, 63.5, 63.5] to focal point; [0.0, 0.0, 1.0] to camera up direction.

Save the visualization image as "rti-velocity_streakline/results/{agent_mode}/rti-velocity_streakline.png".

(Optional, but must save if use paraview) Save the paraview state as "rti-velocity_streakline/results/{agent_mode}/rti-velocity_streakline.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "rti-velocity_streakline/results/{agent_mode}/rti-velocity_streakline.py".

(Optional, but must save if use VTK) Save the cxx code script as "rti-velocity_streakline/results/{agent_mode}/rti-velocity_streakline.cxx"

Do not save any other files, and always save the visualization image.
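The merge step in this task (vx, vy, vz into "velocity", then "magnitude" = |velocity|) is illustrated below on plain Python lists. This is a sketch of the arithmetic only. In a ParaView pipeline the same result would typically come from a calculator-style filter, and `merge_velocity` is a hypothetical name.

```python
import math


def merge_velocity(vx, vy, vz):
    """Combine three component arrays into vectors and their magnitudes."""
    if not (len(vx) == len(vy) == len(vz)):
        raise ValueError("component arrays must have the same length")
    velocity = list(zip(vx, vy, vz))
    magnitude = [math.sqrt(x * x + y * y + z * z) for x, y, z in velocity]
    return velocity, magnitude


# Two sample points with made-up component values.
velocity, magnitude = merge_velocity([3.0, 0.0], [4.0, 0.0], [0.0, 2.0])
```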

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

0/30

Output Generation

5/5

Efficiency

8/10

Completed in 124.88 seconds (good)

Input Tokens

9,319

Output Tokens

9,907

Total Tokens

19,226

Total Cost

$0.1766

📝 save-transparent

19/35 (54.3%)

📋 Task Description

I would like to use ParaView to visualize a dataset.

Create a wavelet object and show it. Color the rendering by the variable ‘RTData’.

Render the wavelet as a surface. Hide the color bar.

Next, set the layout size to be 300 pixels by 300 pixels.

Next, move the camera with the following settings. The camera position should be [30.273897726939246, 40.8733980301544, 43.48927935675712]. The camera view up should be [-0.3634544237682163, 0.7916848767068606, -0.49105594165731975]. The camera parallel scale should be 17.320508075688775.

Save a screenshot to the file “save-transparent/results/{agent_mode}/save-transparent.png”, set the image resolution to 300x300, and set the background to transparent.

(Optional, but must save if use paraview) Save the paraview state as “save-transparent/results/{agent_mode}/save-transparent.pvsm”.

(Optional, but must save if use python script) Save the python script as “save-transparent/results/{agent_mode}/save-transparent.py”.

Do not save any other files, and always save the visualization image.
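The camera numbers in this task are not arbitrary, as a short check suggests. The requested parallel scale matches the bounding-sphere radius of the default wavelet extent of [-10, 10] on each axis, and the view-up vector has unit length. The sketch below verifies both. `bounding_radius` is a hypothetical helper.

```python
import math

# Default wavelet extent: [-10, 10] along each axis.
WAVELET_BOUNDS = (-10, 10, -10, 10, -10, 10)


def bounding_radius(bounds):
    """Half the diagonal of the axis-aligned bounding box."""
    xmin, xmax, ymin, ymax, zmin, zmax = bounds
    return 0.5 * math.sqrt(
        (xmax - xmin) ** 2 + (ymax - ymin) ** 2 + (zmax - zmin) ** 2
    )


radius = bounding_radius(WAVELET_BOUNDS)

view_up = (-0.3634544237682163, 0.7916848767068606, -0.49105594165731975)
up_length = math.sqrt(sum(c * c for c in view_up))
```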

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

14/20

Output Generation

5/5

Efficiency

0/10

No test result found

Total Cost

$0.0011

📝 shrink-sphere

⚠️ LOW SCORE 20/55 (36.4%)

📋 Task Description

Create a default sphere and then hide it.

Create a shrink filter from the sphere.

Double the sphere's theta resolution.

Divide the shrink filter's shrink factor in half.

Extract a wireframe from the sphere.

Group the shrink filter and wireframe together and show them.

Save a screenshot of the result in the filename "shrink-sphere/results/{agent_mode}/shrink-sphere.png".

The rendered view and saved screenshot should be 1920 x 1080 pixels and have a white background.

(Optional, but must save if use paraview) Save the paraview state as "shrink-sphere/results/{agent_mode}/shrink-sphere.pvsm".

(Optional, but must save if use python script) Save the python script as "shrink-sphere/results/{agent_mode}/shrink-sphere.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

5/40

Output Generation

5/5

Efficiency

10/10

Completed in 18.20 seconds (excellent)

Input Tokens

424

Output Tokens

1,345

Total Tokens

1,769

Total Cost

$0.0214

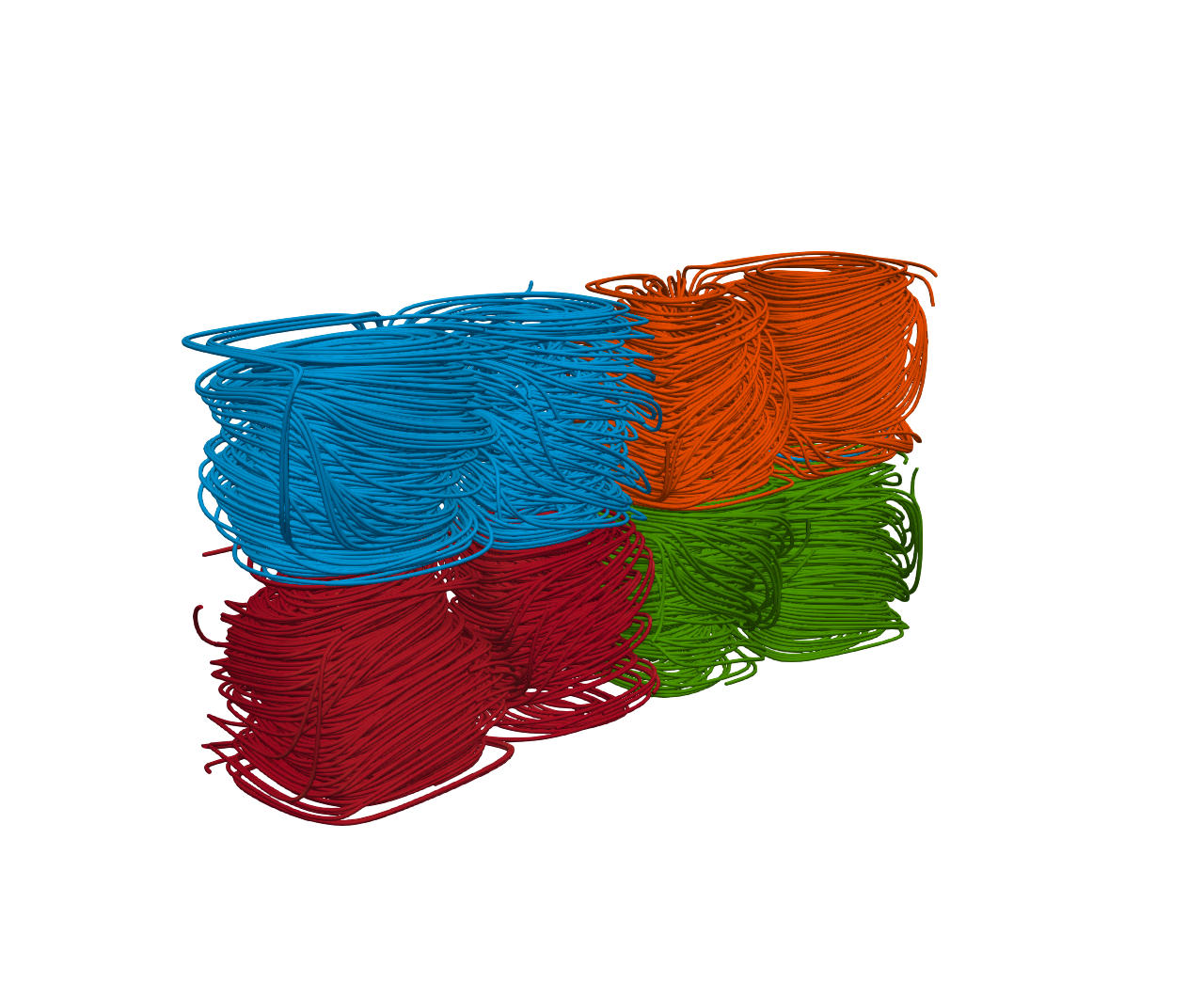

📝 solar-plume

43/55 (78.2%)

📋 Task Description

Task:

Load the solar plume dataset from "solar-plume/data/solar-plume_126x126x512_float32_scalar3.raw", the information about this dataset:

solar-plume (Vector)

Data Scalar Type: float

Data Byte Order: Little Endian

Data Extent: 126x126x512

Number of Scalar Components: 3

Data loading is very important, make sure you correctly load the dataset according to their features.

Add a "stream tracer" filter under the solar plume data to display streamlines, set the "Seed type" to "Point Cloud" and set the center of the point cloud to 3D position [50, 50, 320] with a radius of 30, then hide the point cloud sphere.

Add a "tube" filter under the "stream tracer" filter to enhance the streamline visualization. Set the radius to 0.5. In the pipeline browser panel, hide everything except the "tube" filter.

Please think step by step and make sure to fulfill all the visualization goals mentioned above.

Set the viewpoint parameters as: [62.51, -984.78, 255.45] to position; [62.51, 62.46, 255.45] to focal point; [0, 0, 1] to camera up direction.

Use a white background. Find an optimal view. Render at 1280x1280. Do not show a color bar or coordinate axes.

Save the visualization image as "solar-plume/results/{agent_mode}/solar-plume.png".

(Optional, but must save if use paraview) Save the paraview state as "solar-plume/results/{agent_mode}/solar-plume.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "solar-plume/results/{agent_mode}/solar-plume.py".

(Optional, but must save if use VTK) Save the cxx code script as "solar-plume/results/{agent_mode}/solar-plume.cxx"

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

28/40

Output Generation

5/5

Efficiency

10/10

Completed in 34.73 seconds (excellent)

Input Tokens

1,809

Output Tokens

2,290

Total Tokens

4,099

Total Cost

$0.0398

📝 stream-glyph

38/55 (69.1%)

📋 Task Description

I would like to use ParaView to visualize a dataset.

Read in the file named "stream-glyph/data/stream-glyph.ex2".

Trace streamlines of the V data array seeded from a default point cloud.

Render the streamlines with tubes.

Add cone glyphs to the streamlines.

Color the streamlines and glyphs by the Temp data array.

View the result in the +X direction.

Save a screenshot of the result in the filename "stream-glyph/results/{agent_mode}/stream-glyph.png".

The rendered view and saved screenshot should be 1920 x 1080 pixels.

(Optional, but must save if use paraview) Save the paraview state as "stream-glyph/results/{agent_mode}/stream-glyph.pvsm".

(Optional, but must save if use python script) Save the python script as "stream-glyph/results/{agent_mode}/stream-glyph.py".

Do not save any other files, and always save the visualization image.

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

23/40

Output Generation

5/5

Efficiency

10/10

Completed in 42.91 seconds (excellent)

Input Tokens

1,992

Output Tokens

2,946

Total Tokens

4,938

Total Cost

$0.0502
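The headline score for this case appears to be the sum of the three rubric components listed under Detailed Metrics. The sketch below reproduces that arithmetic. It assumes the total is a plain sum of earned over possible points; the function name `total_score` is hypothetical.

```python
def total_score(components):
    """Sum (earned, possible) pairs and return earned, possible, and a percentage."""
    earned = sum(e for e, _ in components.values())
    possible = sum(m for _, m in components.values())
    return earned, possible, round(100.0 * earned / possible, 1)

# Component scores as listed for the stream-glyph case.
stream_glyph = {
    "Visualization Quality": (23, 40),
    "Output Generation": (5, 5),
    "Efficiency": (10, 10),
}

print(total_score(stream_glyph))
```

Under that assumption the result agrees with the 38/55 (69.1%) shown in the case header.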

📝 subseries-of-time-series

⚠️ LOW SCORE 19/45 (42.2%)

📋 Task Description

Read the file "subseries-of-time-series/data/subseries-of-time-series.ex2". Load two element blocks: the first is called 'Unnamed block ID: 1 Type: HEX', the second is called 'Unnamed block ID: 2 Type: HEX'.

Next, slice this object with a plane with origin at [0.21706008911132812, 4.0, -5.110947132110596] and normal direction [1.0, 0.0, 0.0]. The plane should have no offset.

Next, save this time series to a collection of .vtm files. The base file name for the time series is "subseries-of-time-series/results/{agent_mode}/canslices.vtm" and the suffix is '_%d'. Only save time steps with index between 10 and 20 inclusive, counting by 3.

Next, load the files "subseries-of-time-series/results/{agent_mode}/canslices_10.vtm", "subseries-of-time-series/results/{agent_mode}/canslices_13.vtm", "subseries-of-time-series/results/{agent_mode}/canslices_16.vtm", and "subseries-of-time-series/results/{agent_mode}/canslices_19.vtm" in multi-block format.

Finally, show the multi-block data set you just loaded.

Save a screenshot to the file "subseries-of-time-series/results/{agent_mode}/subseries-of-time-series.png".

(Optional, but must save if use paraview) Save the paraview state as "subseries-of-time-series/results/{agent_mode}/subseries-of-time-series.pvsm".

(Optional, but must save if use python script) Save the python script as "subseries-of-time-series/results/{agent_mode}/subseries-of-time-series.py".

Do not save any other files, and always save the visualization image.
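The time-step selection in this task ("index between 10 and 20 inclusive, counting by 3") can be checked against the four file names the task later loads. The sketch below expands the selection with Python's `range` and applies the `_%d` suffix to the base name. The helper `subseries_filenames` is hypothetical and only illustrates the naming; it does not write any files.

```python
import os

def subseries_filenames(base, first, last, stride, suffix="_%d"):
    """Expand an inclusive [first, last] index range into suffixed file names."""
    stem, ext = os.path.splitext(base)
    # range() excludes its stop value, so add 1 to make `last` inclusive.
    return [stem + (suffix % index) + ext for index in range(first, last + 1, stride)]

names = subseries_filenames("canslices.vtm", first=10, last=20, stride=3)
print(names)
```

Indices 10, 13, 16, and 19 are selected; index 20 is not reached because the stride steps from 19 to 22.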

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

4/30

Output Generation

5/5

Efficiency

10/10

Completed in 46.45 seconds (excellent)

Input Tokens

2,728

Output Tokens

3,907

Total Tokens

6,635

Total Cost

$0.0668

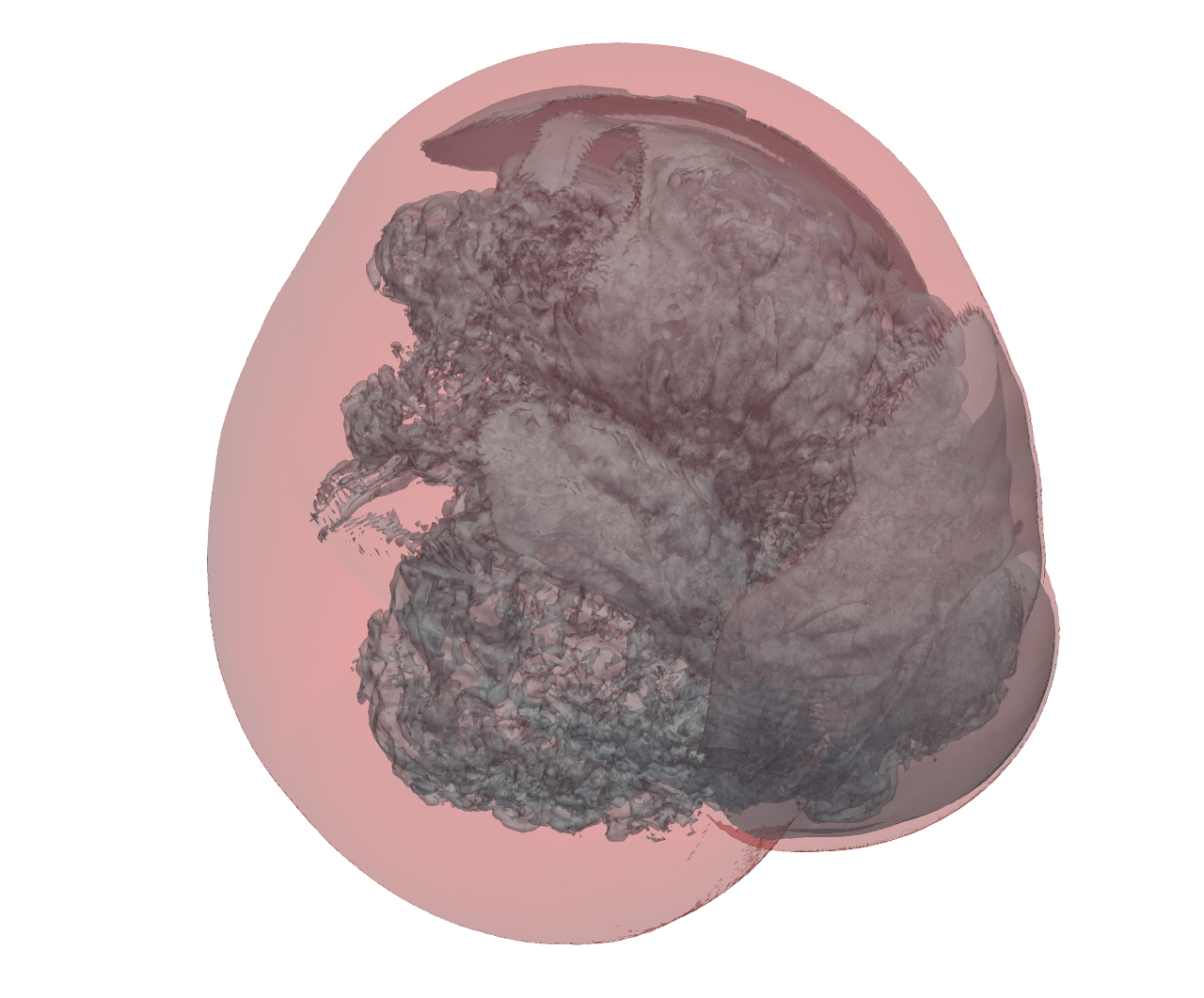

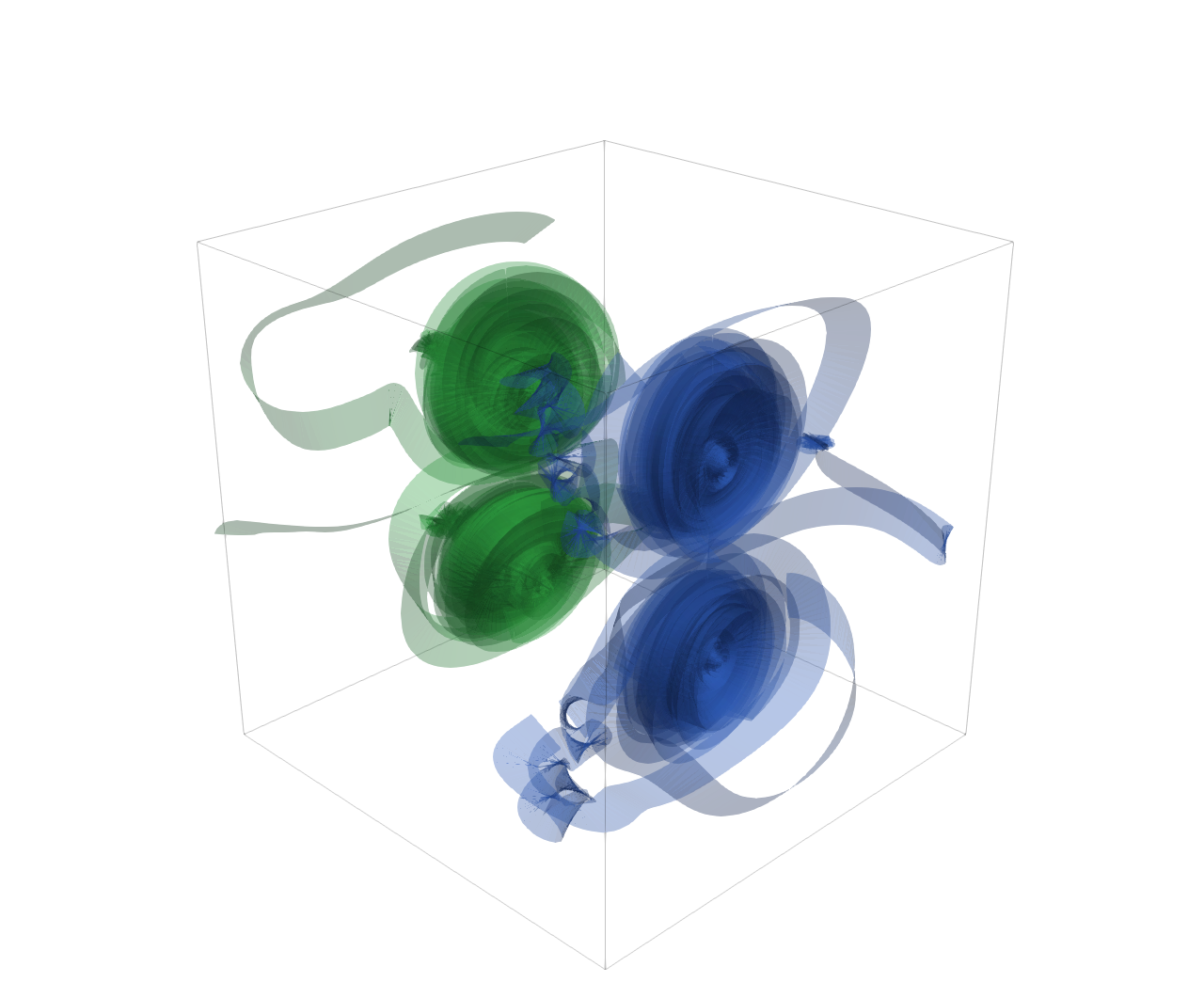

📝 supernova_isosurface

31/45 (68.9%)

📋 Task Description

Task:

Load the supernova dataset from "supernova_isosurface/data/supernova_isosurface_256x256x256_float32.raw", the information about this dataset:

supernova (Scalar)

Data Scalar Type: float

Data Byte Order: little Endian

Data Spacing: 1x1x1

Data Extent: 256x256x256

Data loading is very important, make sure you correctly load the dataset according to their features.

Then visualize it and extract two isosurfaces. One of them use color red, showing areas with low density (isovalue 40 and opacity 0.2), while the other use color light blue, showing areas with high density (isovalue 150 and opacity 0.4).

Please think step by step and make sure to fulfill all the visualization goals mentioned above. Only make the two isosurfaces visible.

Use a white background. Find an optimal view. Render at 1280x1280. Do not show a color bar or coordinate axes.

Set the viewpoint parameters as: [567.97, 80.17, 167.28] to position; [125.09, 108.83, 121.01] to focal point; [-0.11, -0.86, 0.50] to camera up direction.

Save the visualization image as "supernova_isosurface/results/{agent_mode}/supernova_isosurface.png".

(Optional, but must save if use paraview) Save the paraview state as "supernova_isosurface/results/{agent_mode}/supernova_isosurface.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "supernova_isosurface/results/{agent_mode}/supernova_isosurface.py".

(Optional, but must save if use VTK) Save the cxx code script as "supernova_isosurface/results/{agent_mode}/supernova_isosurface.cxx"

Do not save any other files, and always save the visualization image.
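Because a raw file carries no header, one way to sanity-check the loading parameters stated in the task is to compare the expected byte count with the file size on disk. The sketch below computes the expected size from the extent, the component count, and a 4-byte little-endian float. The function name `expected_raw_bytes` is hypothetical.

```python
import struct

def expected_raw_bytes(extent, components=1, sample_format="<f"):
    """Byte count of a headerless volume: nx * ny * nz * components * bytes per sample."""
    nx, ny, nz = extent
    # "<f" is a little-endian 32-bit float, so calcsize returns 4.
    return nx * ny * nz * components * struct.calcsize(sample_format)

# Supernova scalar volume described above: 256x256x256, one float per voxel.
supernova_bytes = expected_raw_bytes((256, 256, 256))
print(supernova_bytes)
```

A file size that differs from this value would suggest a wrong extent, component count, or scalar type.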

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

16/30

Output Generation

5/5

Efficiency

10/10

Completed in 38.72 seconds (excellent)

PSNR

22.81 dB

SSIM

0.9761

LPIPS

0.0608

Input Tokens

2,060

Output Tokens

2,752

Total Tokens

4,812

Total Cost

$0.0475

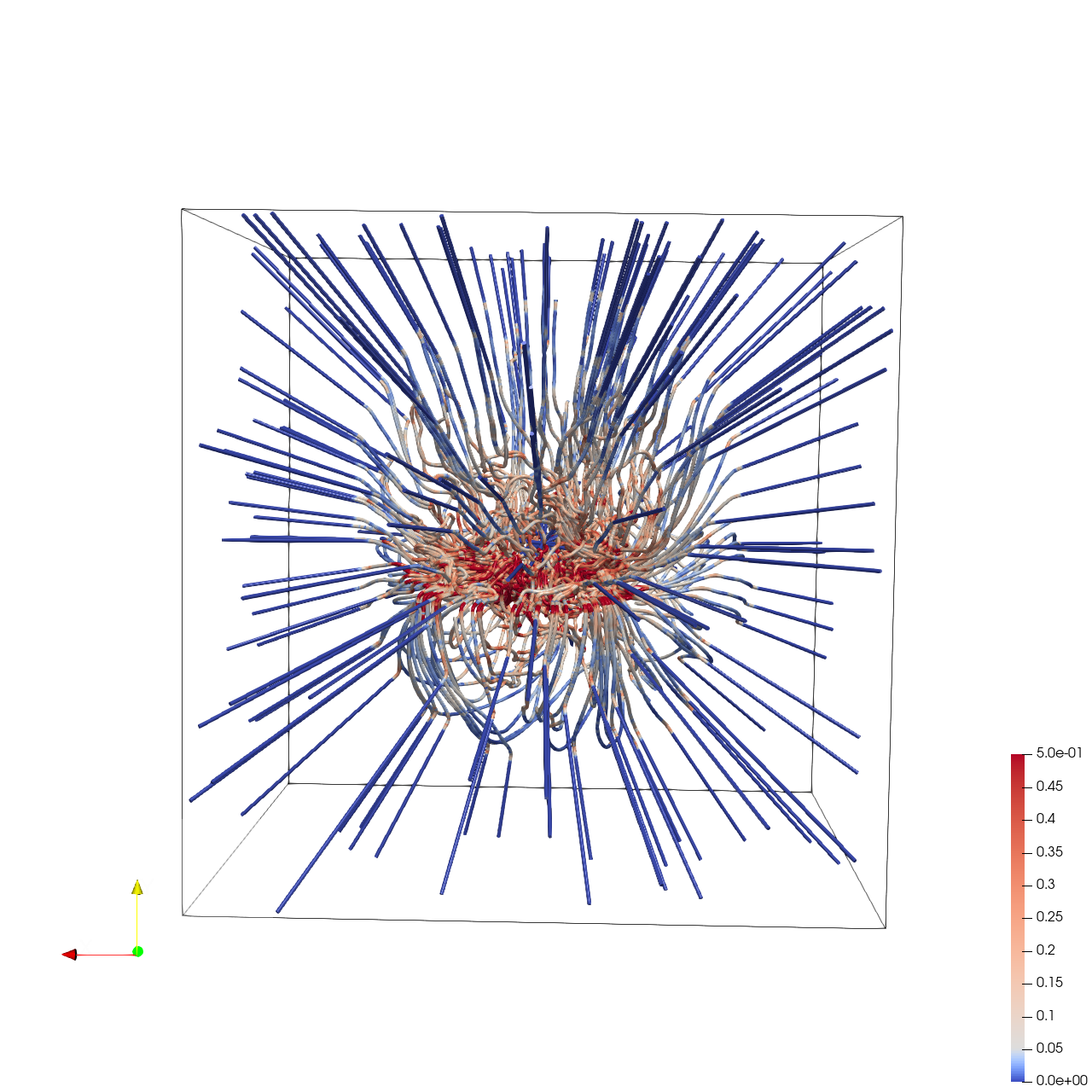

📝 supernova_streamline

❌ FAILED 0/45 (0.0%)

📋 Task Description

Load the Supernova velocity vector field from "supernova_streamline/data/supernova_streamline_100x100x100_float32_scalar3.raw", the information about this dataset:

Supernova Velocity (Vector)

Data Scalar Type: float

Data Byte Order: Little Endian

Data Extent: 100x100x100

Number of Scalar Components: 3

Data loading is very important, make sure you correctly load the dataset according to their features.

Create streamlines using a "Stream Tracer" filter with "Point Cloud" seed type. Set the seed center to [50, 50, 50], with 200 seed points and a radius of 45.0. Set maximum streamline length to 100.0.

Add a "Tube" filter on the stream tracer. Set tube radius to 0.3 with 12 sides.

Color the tubes by Vorticity magnitude using a diverging colormap with the following RGB control points:

- Value 0.0 -> RGB(0.231, 0.298, 0.753) (blue)

- Value 0.05 -> RGB(0.865, 0.865, 0.865) (white)

- Value 0.5 -> RGB(0.706, 0.016, 0.149) (red)

Show the dataset bounding box as an outline (black).

In the pipeline browser panel, hide the stream tracer and only show the tube filter and the outline.

Use a white background. Render at 1280x1280.

Set the viewpoint parameters as: [41.38, 73.91, -282.0] to position; [49.45, 49.50, 49.49] to focal point; [0.01, 1.0, 0.07] to camera up direction.

Save the visualization image as "supernova_streamline/results/{agent_mode}/supernova_streamline.png".

(Optional, but must save if use paraview) Save the paraview state as "supernova_streamline/results/{agent_mode}/supernova_streamline.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "supernova_streamline/results/{agent_mode}/supernova_streamline.py".

(Optional, but must save if use VTK) Save the cxx code script as "supernova_streamline/results/{agent_mode}/supernova_streamline.cxx"

Do not save any other files, and always save the visualization image.
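The three RGB control points in this task define a diverging colormap over the range 0.0 to 0.5. The sketch below evaluates such a map by piecewise-linear interpolation in RGB. That interpolation is an assumption made for illustration: ParaView can interpolate in other color spaces, so rendered colors may differ. The names `CONTROL_POINTS` and `interpolate_rgb` are hypothetical.

```python
from bisect import bisect_right

# (value, (r, g, b)) pairs copied from the task description.
CONTROL_POINTS = [
    (0.0, (0.231, 0.298, 0.753)),   # blue
    (0.05, (0.865, 0.865, 0.865)),  # white
    (0.5, (0.706, 0.016, 0.149)),   # red
]

def interpolate_rgb(value, points=CONTROL_POINTS):
    """Linearly interpolate RGB between neighbouring control points, clamping at the ends."""
    xs = [x for x, _ in points]
    if value <= xs[0]:
        return points[0][1]
    if value >= xs[-1]:
        return points[-1][1]
    upper = bisect_right(xs, value)
    (x0, c0), (x1, c1) = points[upper - 1], points[upper]
    t = (value - x0) / (x1 - x0)
    return tuple(a + t * (b - a) for a, b in zip(c0, c1))

print(interpolate_rgb(0.025))
```

Note that the white point sits at 0.05 rather than at the midpoint of the range, so most of the range falls on the white-to-red segment.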

🖼️ Visualization Comparison

Ground Truth

Agent Result

📏 Vision Evaluation Rubrics

📊 Detailed Metrics

Visualization Quality

1/30

Output Generation

5/5

Efficiency

9/10

Completed in 60.16 seconds (very good)

PSNR

15.72 dB

SSIM

0.8534

LPIPS

0.2168

Input Tokens

3,734

Output Tokens

4,310

Total Tokens

8,044

Total Cost

$0.0759

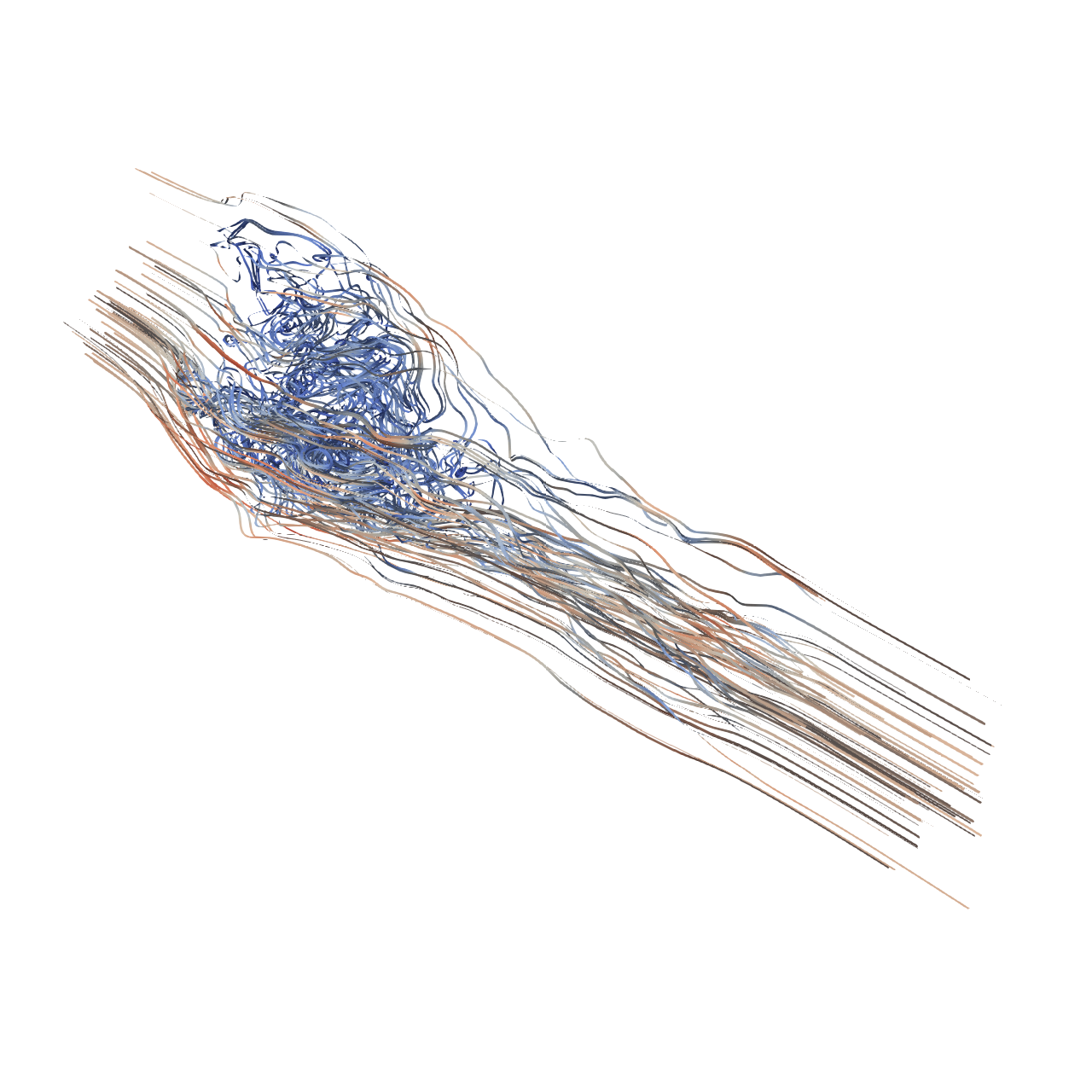

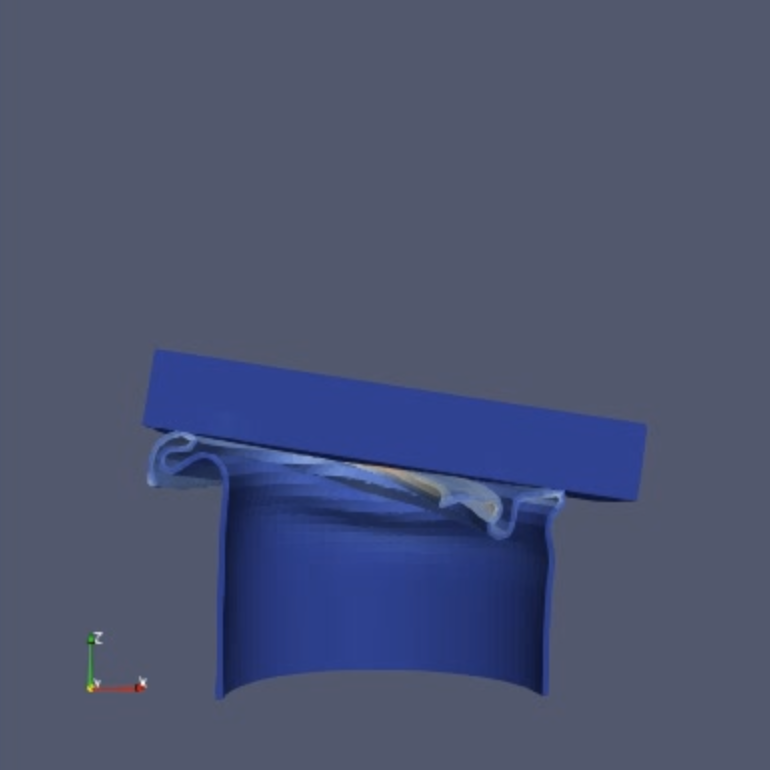

📝 tangaroa_streamribbon

❌ FAILED 0/55 (0.0%)

📋 Task Description

Task:

Load the tangaroa dataset from "tangaroa_streamribbon_300x180x120_float32_scalar3.raw", the information about this dataset:

tangaroa (Vector)

Data Scalar Type: float

Data Byte Order: little Endian

Data Extent: 300x180x120

Number of Scalar Components: 3

Data loading is very important, make sure you correctly load the dataset according to their features.

Apply "streamline tracer" filter, set the "Seed Type" to point cloud, turn off the "show sphere", set the center to [81.6814, 80.708, 23.5093], and radius to 29.9

Add "Ribbon" filter to the streamline tracer results and set width to 0.3, set the Display representation to Surface.

In pipeline browser panel, hide everything except the ribbon filter results.

Please think step by step and make sure to fulfill all the visualization goals mentioned above.

Use a white background. Find an optimal view. Render at 1280x1280. Do not show a color bar or coordinate axes.

Set the viewpoint parameters as: [372.27, 278.87, 214.44] to position; [169.85, 76.46, 12.02] to focal point; [-0.41, 0.82, -0.41] to camera up direction.

Save the visualization image as "tangaroa_streamribbon/results/{agent_mode}/tangaroa_streamribbon.png".

(Optional, but must save if use paraview) Save the paraview state as "tangaroa_streamribbon/results/{agent_mode}/tangaroa_streamribbon.pvsm".

(Optional, but must save if use pvpython script) Save the python script as "tangaroa_streamribbon/results/{agent_mode}/tangaroa_streamribbon.py".